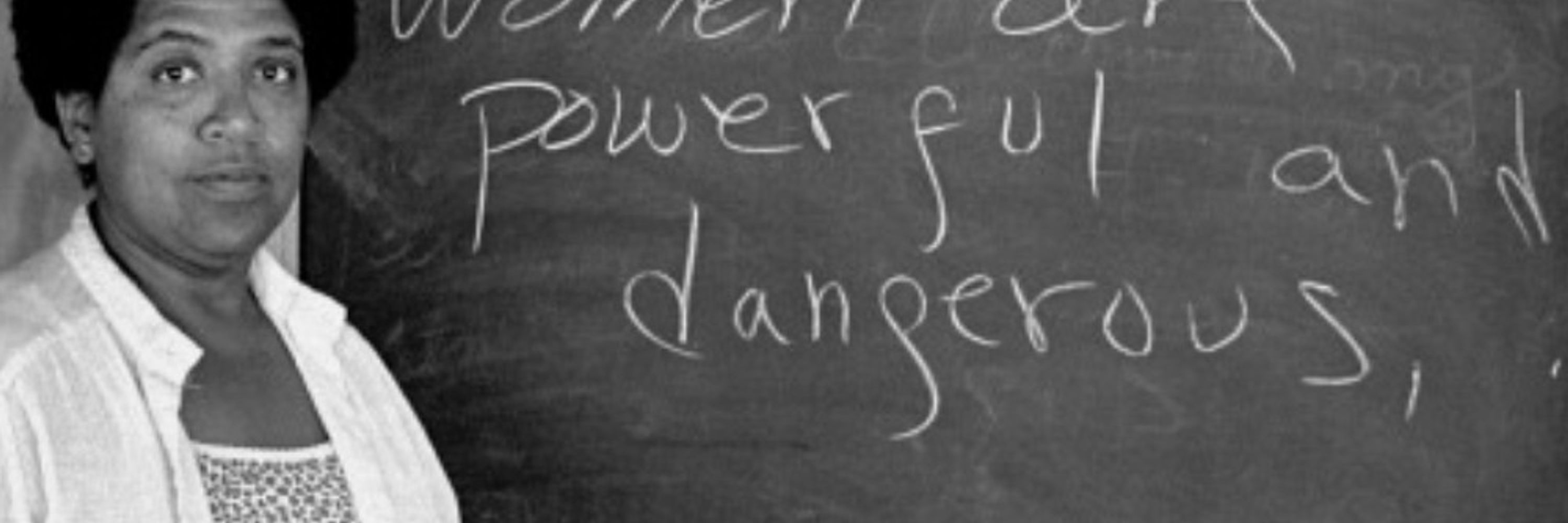

Ex-investigative reporter and editor

Crisis informatics nerd

Makes good trouble

Tell your dog I said "hi"

I think this explains the massive disconnect we see in how CEOs talk about AI versus everyone else. It also raises the question of how useful it truly is for frontline work.

If content (computer-generated or otherwise) is predicated on theft, the resulting output is not ethical, legal or moral. Full stop.

www.youtube.com/watch?v=HvFh...

The Bridge School Benefit 2010:

Neil Young

Buffalo Springfield

Elton John

Leon Russell

Elvis Costello

Grizzly Bear

Jeff Bridges

Kris Kristofferson

Modest Mouse

Neko Case

Pearl Jam

Ralph Stanley

T Bone Burnett

Queen (Night at the Opera tour!)

Talking Heads

Sarah McLachlan

Boz Scaggs

KD Lang

Come along for a story about how a basic community page can shift to hyperlocal news sharing and mutual aid to reach everyday folks.

AI is for surveillance

Chatbots are for surveillance

Wearables are for surveillance

Your data/clicks/behavior/content is collected and analyzed to be used against you in decisions re: hiring, insurance risk, credit cards, loans, and law enforcement.

• Oura rings *don't* end-to-end encrypt users' health data;

• Oura *can* access its users' data;

• Oura told me that the company *has* received U.S. government demands for users' data.

Thanks for sharing!

Some excellent pieces to begin with, please do get involved!

www.history-uk.ac.uk/history-in-p...

Suarez et al. (2025, par. 7)

zenodo.org/records/1567...

/1

Smoothing out these necessary frictions with Gen AI chatbot outputs and AI overviews from search retrieval is dangerously reductive.

"shift of the tool people reach for first for finding information is at the heart of how ChatGPT has changed everyday technology use."

theconversation.com/the-chatgpt-...

".. professional AI use is far from ubiquitous and many respondents expressed skepticism that it would be as revolutionary as some experts expect."

@brookings.edu

www.brookings.edu/articles/how...

This week, the magic word that makes assignment editors weak in the knees is "conscious".

I hope this constitutes contractual breach and the province can claw back the $1.6M for the “analysis” they got.

www.theverge.com/ai-artificia...

Will come back to dissect.

#GiftLink #GiftArticle
