part-time reductionist, full-time human

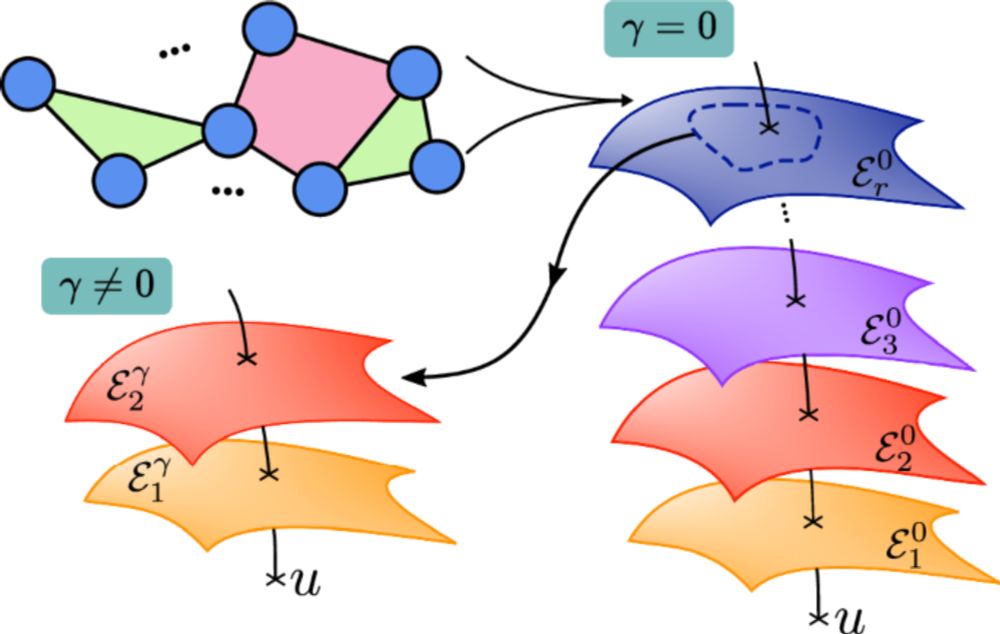

In our new paper, we ask: How do people turn continuous spaces into structured, word-like systems for communication? (1/8)

w/ @neuranna.bsky.social @evfedorenko.bsky.social @nancykanwisher.bsky.social

arxiv.org/abs/2511.19757

1/n🧵👇

If many people use AI to do task X, then that tells you that task X is actually just a brainless administrative exercise.

Any such task should probably be eliminated, and if that's not an option, modified to make automation even easier.

References should only be used for short-listed candidates for important positions/awards and, ideally, be done via a call to get the most honest opinion possible.

What's the point of having reference letters anymore if everyone is just having them written by machine?

It's *scientific publishing*.

We call this the Drain of Scientific Publishing.

Paper: arxiv.org/abs/2511.04820

Background: doi.org/10.1162/qss_...

Thread @markhanson.fediscience.org.ap.brid.gy 👇

LLMs not actually being an example of the bitter lesson was quite a nuance no one saw coming.

youtu.be/21EYKqUsPfg?...

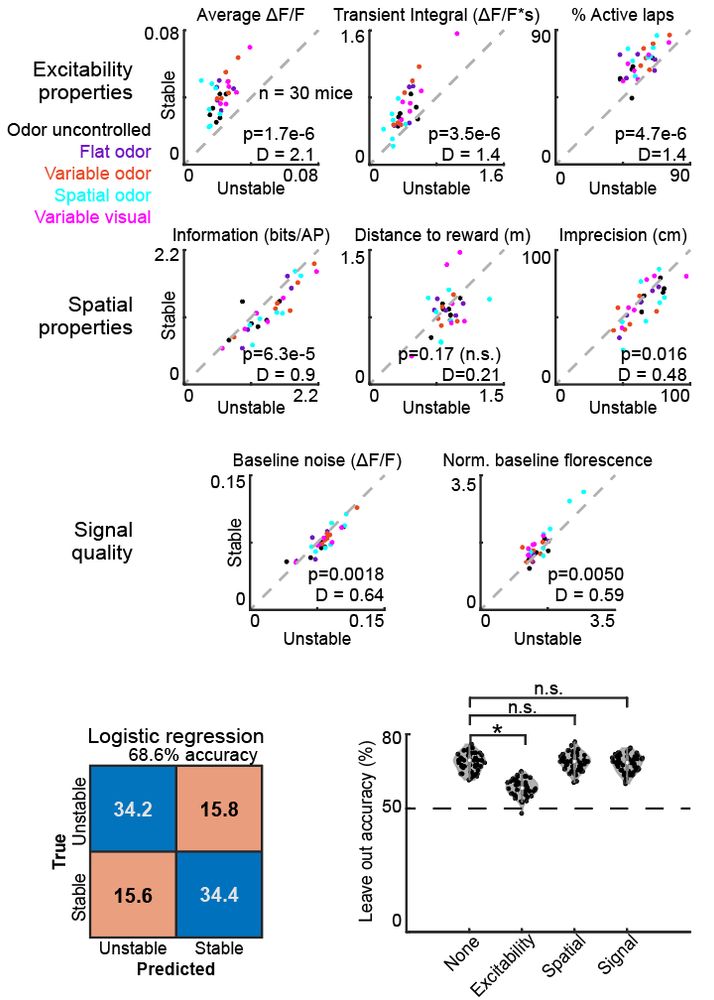

www.biorxiv.org/content/10.1...

I could write a whole article about this, but as one example:

“To close observers, the original crisis began well before any of this…”

No. I’m a close observer of science, and this is incorrect.

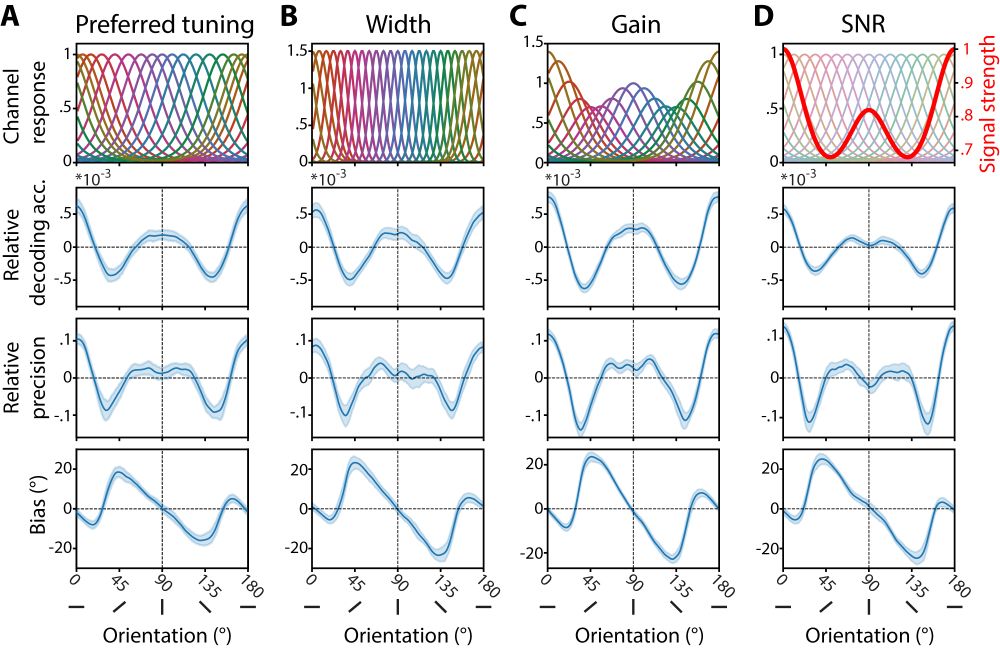

Some thoughts on where neurotheory has and has not taken root within the neuroscience community, how it has shaped those subfields, and where we theorists might look next for fresh adventures.

www.nature.com/articles/s41...

www.nature.com/articles/s41...

We introduce curved neural networks, which naturally give rise to high-order interactions and exhibit:

• explosive phase transitions

• enhanced memory retrieval via self-annealing

• increased memory capacity through geometric curvature

www.nature.com/articles/s41...

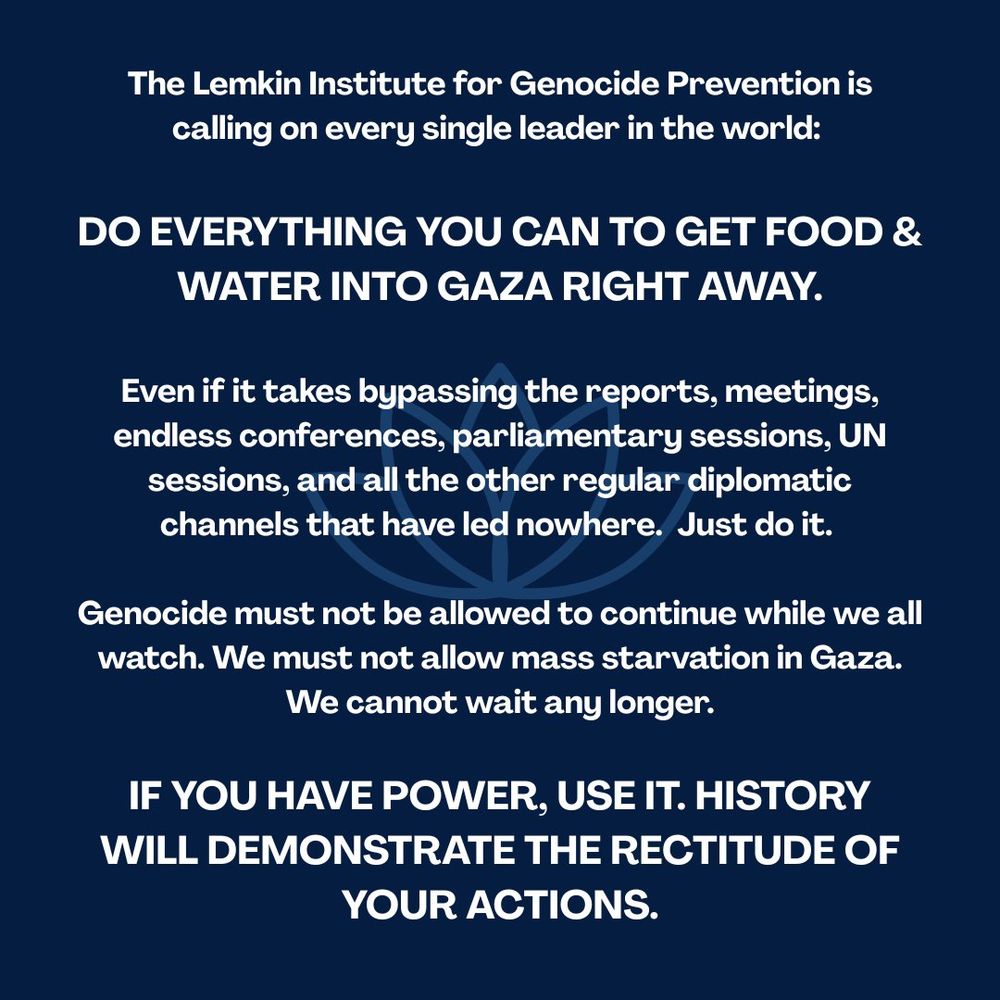

This is from the Lemkin Institute, begging... we are all begging.

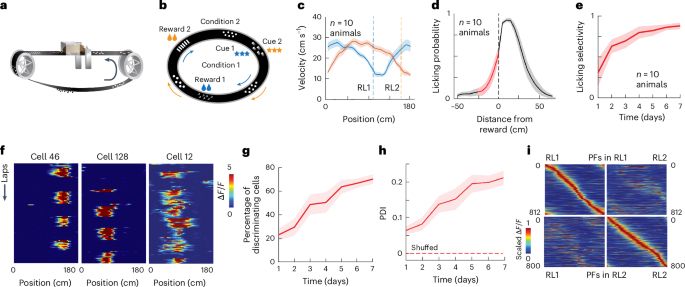

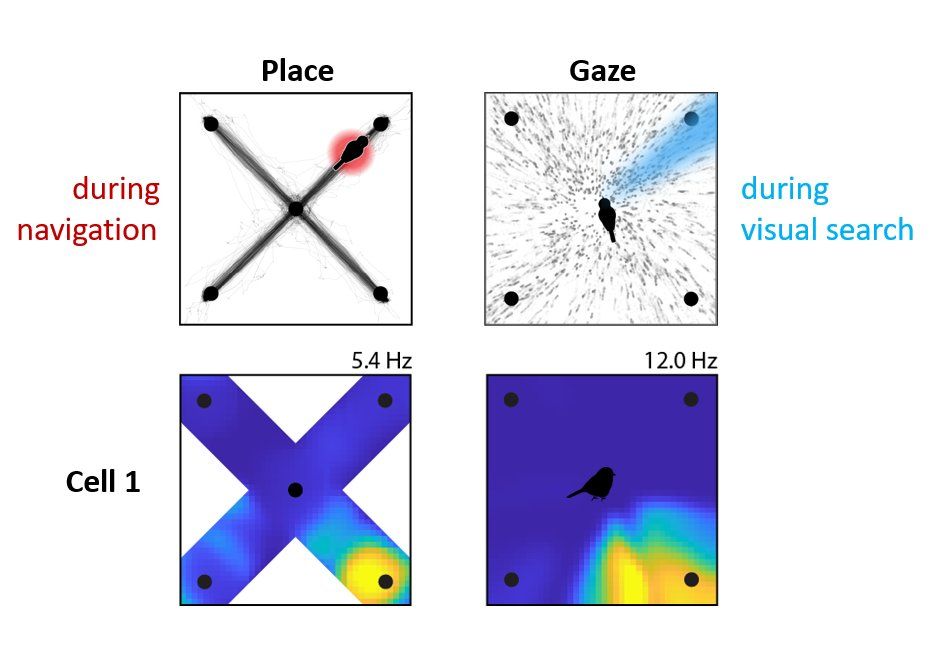

When a chickadee looks at a distant location, the same place cells activate as if it were actually there 👁️

The hippocampus encodes where the bird is looking AND what it expects to see next, enabling spatial reasoning from afar.

bit.ly/3HvWSum

Remember when microwaves first came out, people bought microwave cookbooks and special vented plastic cookware, and microwaves were going to change the way we cooked and ate forever?

Now we use them for defrosting mince, and reheating cold tea.

www.biorxiv.org/content/10.1...

careers.peopleclick.com/careerscp/cl...

......
