►Data, Health, & Computer science

►Python coder, (co)founder of scikit-learn, joblib, & @probabl.bsky.social

►Sometimes does art photography

►Physics PhD

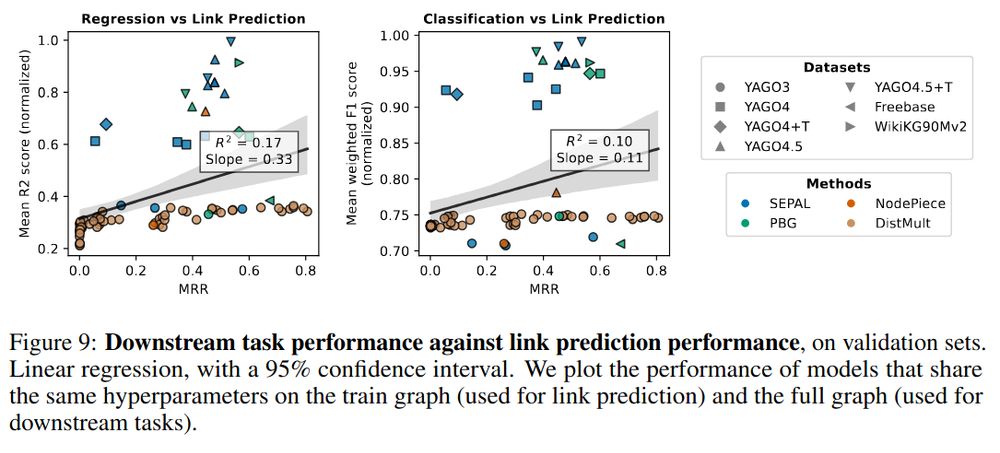

We believe this is because link prediction only needs local structure, unlike downstream tasks

9/10

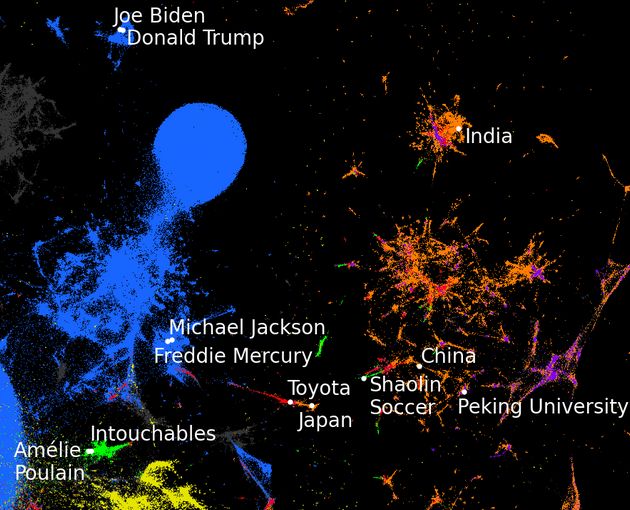

It creates feature vectors that lead to better performance on downstream tasks, and it is more scalable.

Larger knowledge graphs give feature vectors that provide downstream value

8/10

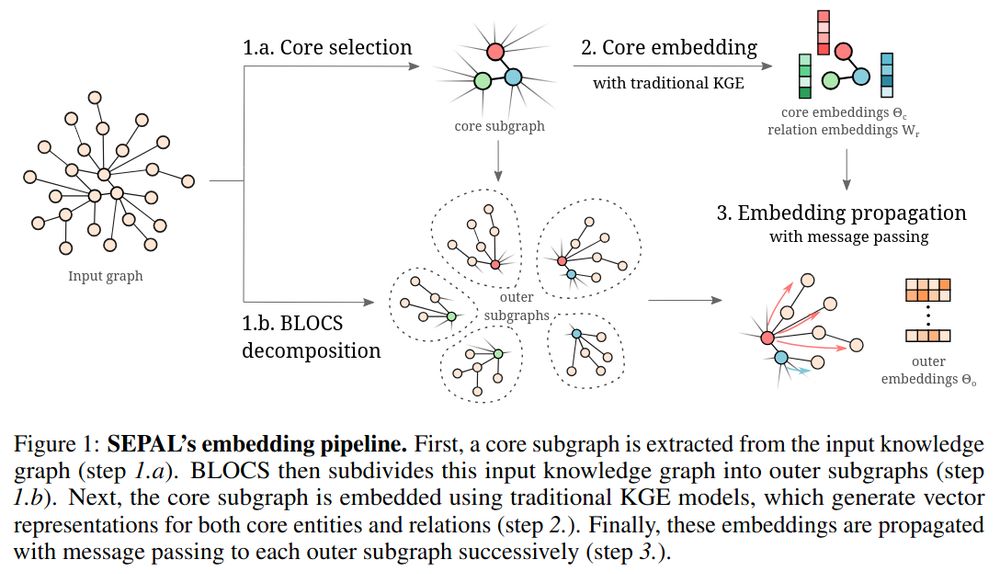

We introduce a procedure that allows the blocks to overlap, which greatly relaxes the difficulty.

7/10

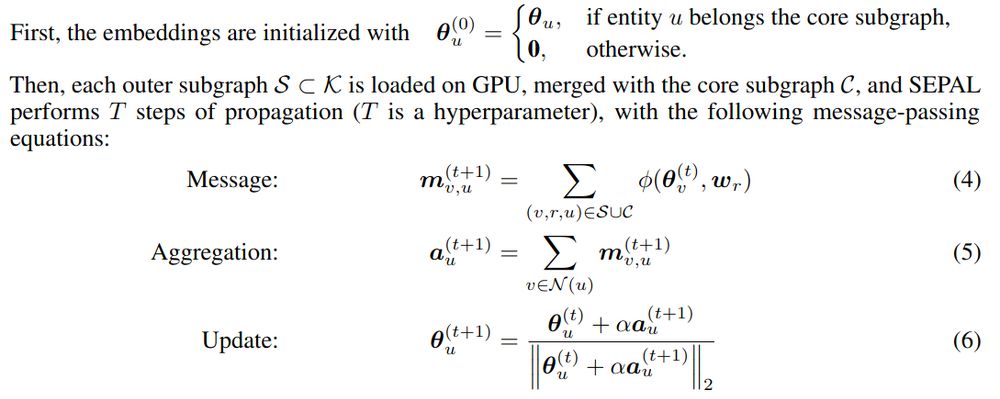

Propagation then reduces to simple in-memory iterations, and we can embed huge graphs on a single GPU.

6/10

For these, we propagate embeddings via the relation operators, in a diffusion-like step, extrapolating from the central entities.

5/10
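
To make the idea of a diffusion-like propagation concrete, here is a minimal sketch on a toy chain. It is not the method from the paper. It assumes that relations act as translations (the TransE convention, e_t ≈ e_h + r), that central entities are anchored (held fixed), and that every other entity is repeatedly moved to the average of the positions its neighbors predict for it. The function name `propagate` and this Jacobi-style update are hypothetical choices for illustration.

```python
import numpy as np


def propagate(triples, emb, rel, anchored, n_iter=100):
    """Diffusion-like propagation of entity embeddings through relation operators.

    Hypothetical sketch: relations are translations (TransE-style assumption),
    anchored (central) entities keep their embeddings, and every other entity
    is replaced by the mean of the positions its neighbors predict for it.
    """
    emb = emb.copy()
    for _ in range(n_iter):
        sums = np.zeros_like(emb)
        counts = np.zeros(len(emb))
        for h, r, t in triples:
            # Forward operator: the tail should sit at head + relation vector
            sums[t] += emb[h] + rel[r]
            counts[t] += 1
            # Inverse operator: the head should sit at tail - relation vector
            sums[h] += emb[t] - rel[r]
            counts[h] += 1
        # Only update entities that are not anchored and have at least one neighbor
        free = (counts > 0) & ~anchored
        emb[free] = sums[free] / counts[free][:, None]
    return emb


# Toy chain 0 -r-> 1 -r-> 2, with only entity 0 anchored at the origin
triples = [(0, 0, 1), (1, 0, 2)]
rel = np.array([[1.0, 0.0]])
emb0 = np.zeros((3, 2))
anchored = np.array([True, False, False])
# Entity 1 converges to (1, 0) and entity 2 to (2, 0): positions are
# extrapolated outward from the anchored entity.
print(propagate(triples, emb0, rel, anchored))
```

Each sweep only needs the current embedding table and the list of triples, which is why such iterations can run in memory.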

Consistent with the knowledge-graph embedding literature, this step represents relations as operators on the embedding space.

It also anchors the central entities.

4/10
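
For readers less familiar with that literature, here is a minimal sketch of what "relations as operators" and a contrastive objective on triples can look like. It assumes TransE, one classic choice where a relation is a translation vector (h + r ≈ t), and a margin ranking loss that pushes true triples to score higher than corrupted ones. The thread does not say which operator or loss is actually used, and the function names are hypothetical.

```python
import numpy as np


def transe_score(head, rel, tail):
    """Plausibility of (head, rel, tail): the relation acts as a translation.

    A perfect triple satisfies head + rel == tail and gets the maximal score, 0.
    """
    return -np.linalg.norm(head + rel - tail, axis=-1)


def margin_loss(pos_score, neg_score, margin=1.0):
    """Contrastive margin ranking loss: true triples should outscore
    corrupted (negative) triples by at least `margin`."""
    return np.maximum(0.0, margin - pos_score + neg_score).mean()


head = np.array([0.0, 0.0])
rel = np.array([1.0, 0.0])
tail = np.array([1.0, 0.0])        # true tail: exactly head + rel
corrupted = np.array([1.0, 0.5])   # negative sample: a corrupted tail

pos = transe_score(head, rel, tail)       # 0.0
neg = transe_score(head, rel, corrupted)  # -0.5
print(margin_loss(pos, neg))              # 0.5: the negative is still too close
```

In practice, such a loss is averaged over batches of triples with randomly corrupted heads or tails and minimized by gradient descent. Other operators, such as diagonal scalings (DistMult) or rotations (RotatE), can take the place of the translation in `transe_score`.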

Unlike contrastive learning, message passing helps embeddings capture the large-scale structure of the graph (it amounts to Arnoldi-type iterations).

3/10
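
A minimal sketch of why message passing captures large-scale structure, under stated assumptions and not the authors' method: repeatedly multiplying node vectors by a normalized adjacency operator, and re-orthonormalizing with QR, is block power (subspace) iteration. This is a close cousin of Arnoldi iterations, which build an orthonormal Krylov basis from the same matrix products. The vectors converge to the leading eigenvectors of the operator, which encode the graph's coarse community structure. The lazy operator (I + A_norm) / 2, the toy two-clique graph, and all function names are assumptions for illustration.

```python
import numpy as np


def normalized_adjacency(adj):
    """Symmetrically normalized adjacency D^{-1/2} A D^{-1/2} (no isolated nodes)."""
    d_inv_sqrt = 1.0 / np.sqrt(adj.sum(axis=1))
    return adj * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]


def message_passing_embed(adj, dim=2, n_iter=300, seed=0):
    """Embed nodes by repeated message passing with re-orthonormalization.

    Each step mixes every node's vector with its neighbors' (lazy operator
    (I + A_norm) / 2); QR keeps the `dim` columns orthonormal. This is block
    power (subspace) iteration, which converges to the leading eigenvectors.
    """
    n = adj.shape[0]
    op = 0.5 * (np.eye(n) + normalized_adjacency(adj))
    x = np.random.default_rng(seed).standard_normal((n, dim))
    for _ in range(n_iter):
        x = op @ x               # one round of message passing
        x, _ = np.linalg.qr(x)   # re-orthonormalize, as in Arnoldi/Krylov methods
    return x


def two_cliques():
    """Toy graph: two 4-node cliques joined by the single edge 3-4."""
    adj = np.zeros((8, 8))
    adj[:4, :4] = 1.0
    adj[4:, 4:] = 1.0
    np.fill_diagonal(adj, 0.0)
    adj[3, 4] = adj[4, 3] = 1.0
    return adj


emb = message_passing_embed(two_cliques())
# The second column takes opposite signs on the two cliques: the embedding
# reflects the graph's large-scale community structure.
print(np.round(emb, 2))
```

A purely local objective, such as scoring individual edges, has no comparable mechanism that pulls in information from far across the graph.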

How can we distill these into good downstream features, e.g. for machine learning?

The challenge is to create feature vectors, and for this graph embeddings have been invaluable.

2/10

Combining contrastive learning and message passing markedly improves the features created by embedding graphs, and scales to huge graphs.

It taught us a lot about graph feature learning 👇

1/10

AdoptAI, a business show: pretty videos, promises about AI, 2.30€ coffee

NeurIPS@Paris, a research conference: equations, free coffee

AI business wouldn't exist without research. Let's not forget it, and keep investing in research.

dl.acm.org/doi/10.1145/...

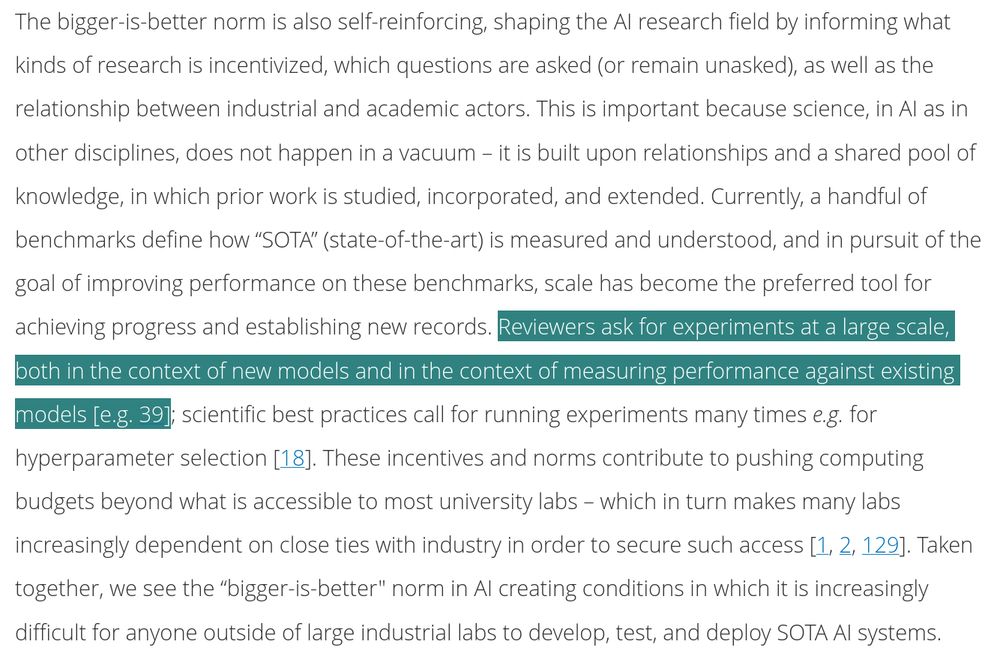

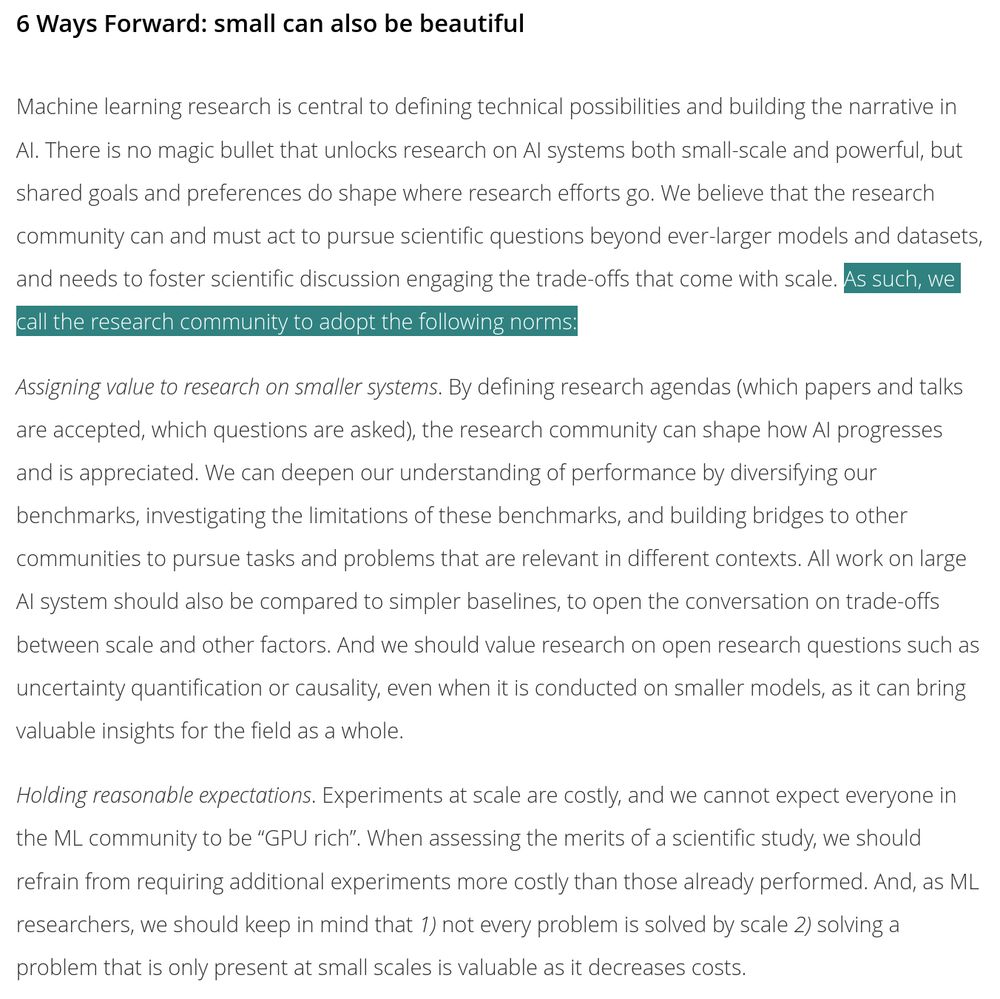

I genuinely think that we need to collectively act to change the narrative and social norms

That's wasting public money

Ideal workflow, as far as I am concerned: async yet interactive, and not requiring any infrastructure

gael-varoquaux.info/personnal/a-...

Define what we're proud of:

Bigger is not better

Simplicity is a virtue

Tech for the many

We need to be careful whom we platform. Tech lords sometimes have the wrong political connections.

Efficiency improvements are super useful. But demand will increase even more and catch up. Such a rebound effect is a classic with technology, e.g. in transportation or energy.

It's really behaviors that determine resource usage (e.g. bike > SUV)

Software and algorithms keep getting better, as well as large compute and data infrastructures.

But high costs are not always a bad thing (if you own nvidia stock)

Well, the cost has been exploding (exponentially indeed).

So it's really about pouring in more and more money

and indeed, the compute used has exploded, growing super-exponentially, way beyond the daily compute of the biggest computers

(look at those studies by Microsoft, IBM, Google...)

Well, AI is cool...

it's all over the news, the people in the pictures look healthy and happy (and also white and male), and there is always a big dollar amount attached

What is "normal" is cultural by nature

At @pydataparis.bsky.social in a few minutes
