►Data, Health, & Computer science

►Python coder, (co)founder of scikit-learn, joblib, & @probabl.bsky.social

►Sometimes does art photography

►Physics PhD

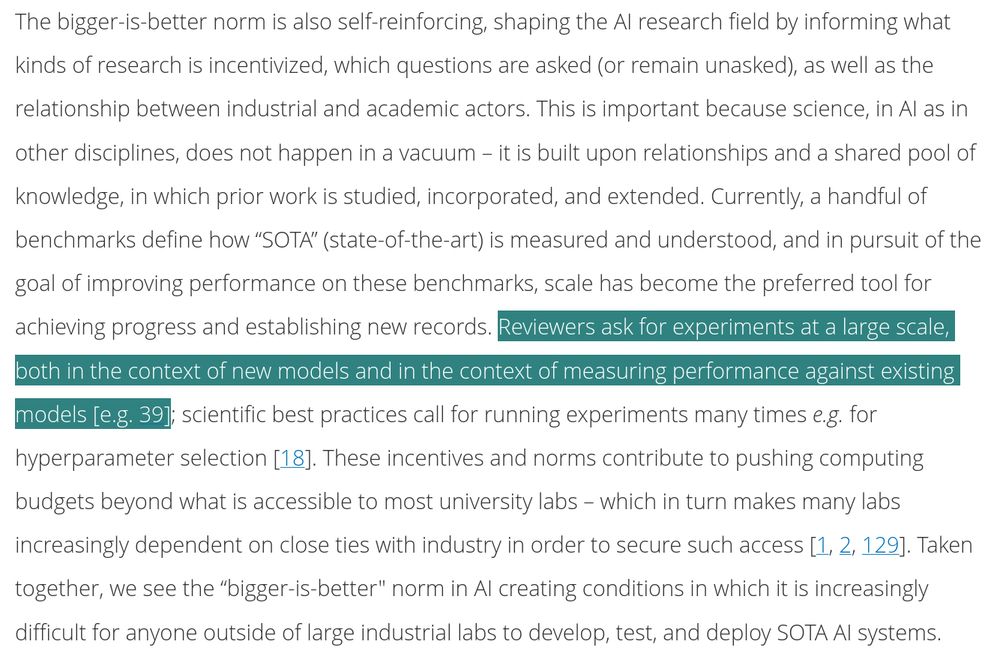

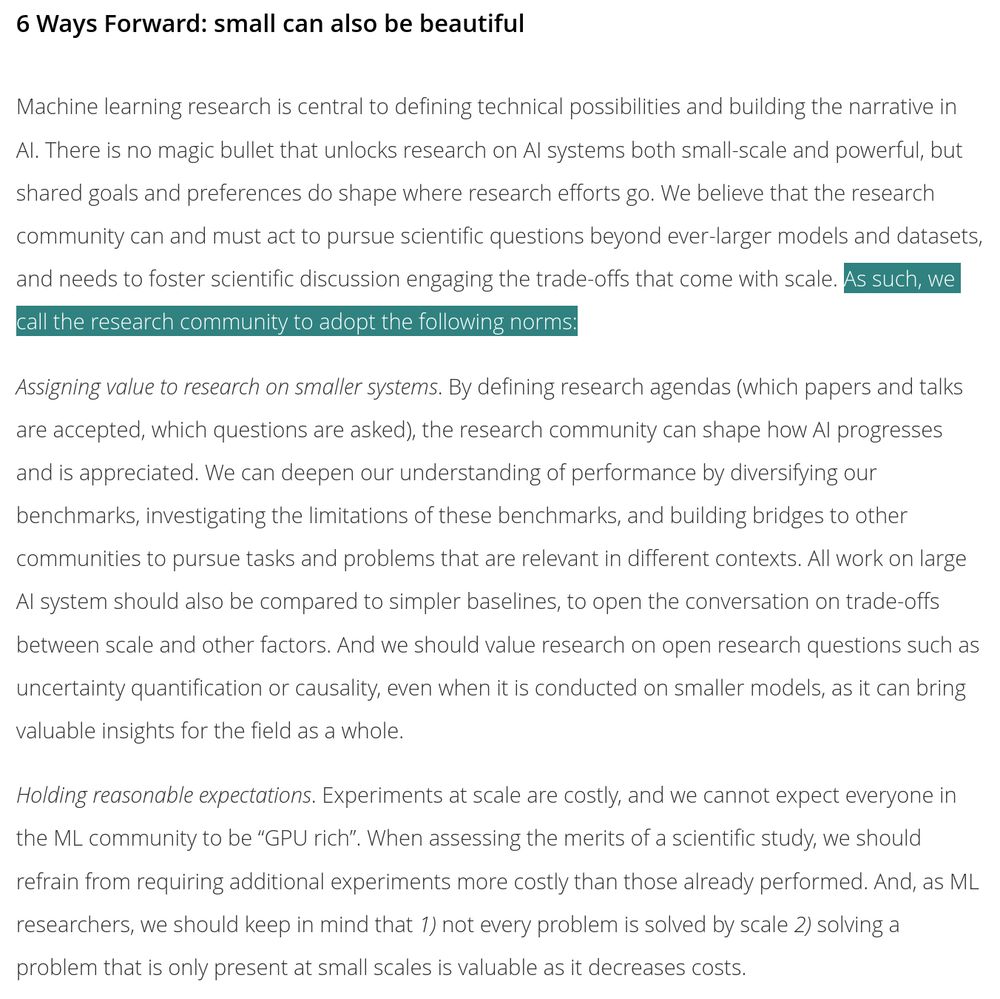

This is what prompted me to write the following paper:

dl.acm.org/doi/full/10....

A lot is explained by narratives and social norms driving research.

We need to build our own narratives; as a research community, this is what we do.

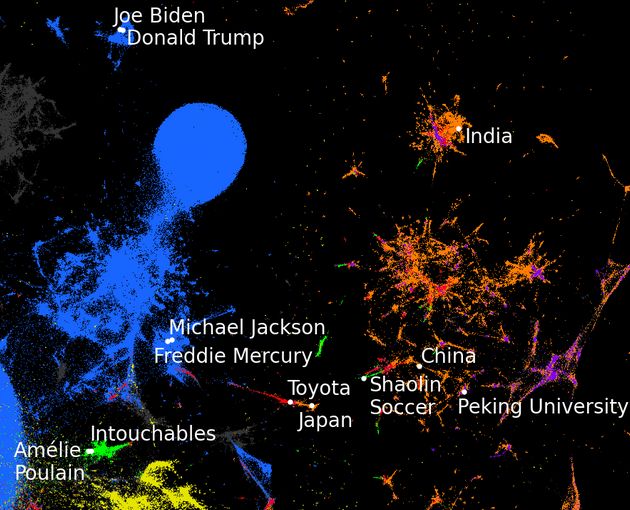

A good example of a knowledge graph is Wikidata.

arxiv.org/abs/2507.00965

The code is here: github.com/soda-inria/s...

The paper is highly reproducible, and we hope it will unleash more progress in knowledge graph embedding.

We'll present at #NeurIPS and #Eurips

10/10

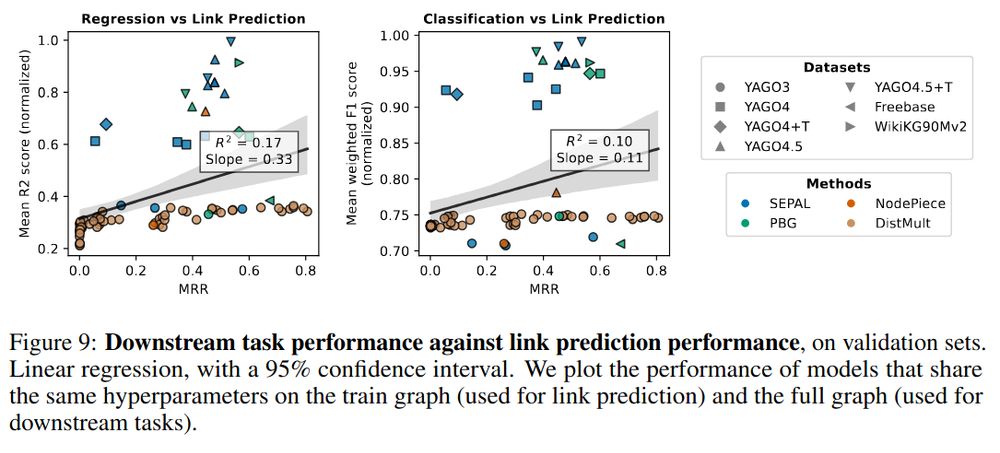

We believe this is because link prediction only needs local structure, unlike downstream tasks

9/10

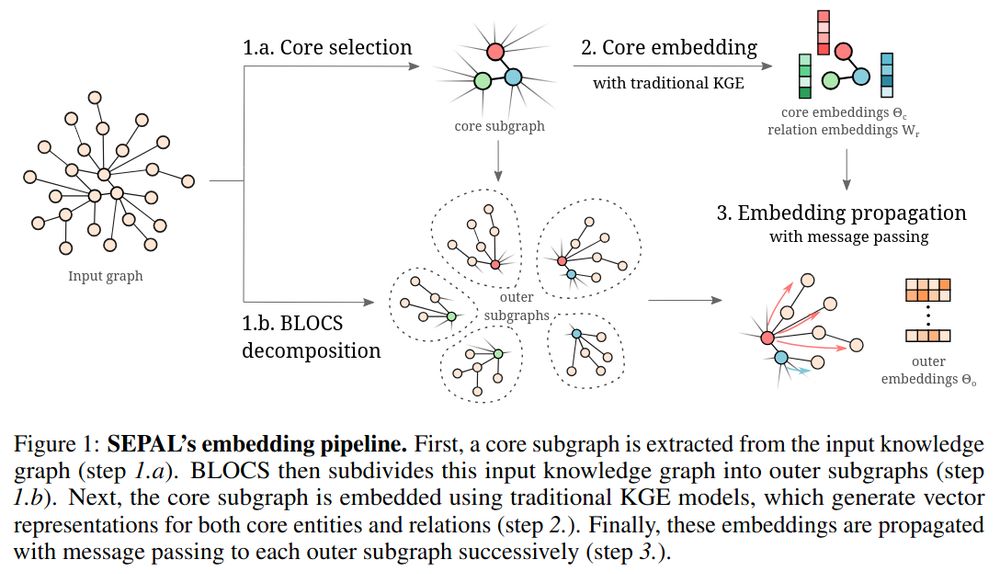

It creates feature vectors that lead to better performance on downstream tasks, and it is more scalable.

Larger knowledge graphs give feature vectors that provide downstream value

8/10

We introduce a procedure that allows the blocks to overlap, which makes the problem much easier.

7/10

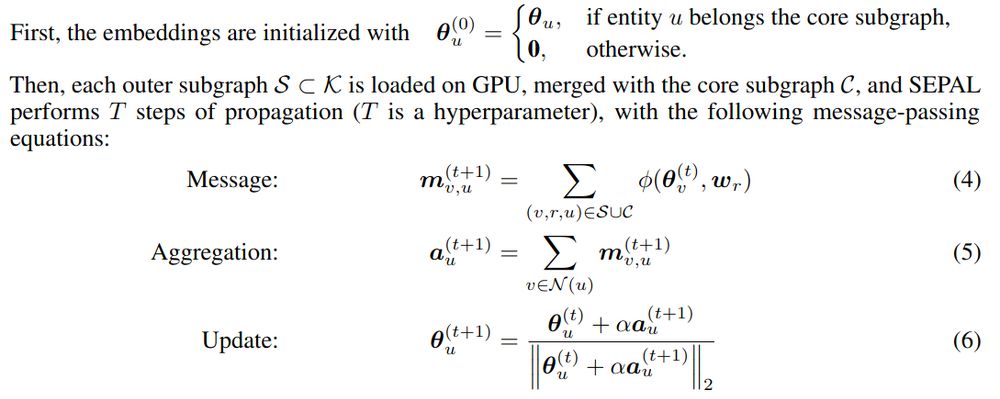

Propagation is then simple in-memory iterations, and we embed huge graphs on a single GPU.

6/10

For these, we propagate embeddings via the relation operators, in a diffusion-like step, extrapolating from the central entities.

5/10
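A minimal sketch of what such a diffusion-like propagation step could look like. It assumes each relation acts as a translation vector (a TransE-style operator, chosen here only for illustration; the thread does not say which operator the paper uses). The function `propagate` and all toy values are hypothetical.

```python
import numpy as np

def propagate(embeddings, anchored, triples, relations, n_iter=2):
    """Extrapolate embeddings from anchored entities to the rest of the graph.

    embeddings: (n_entities, dim) array; only anchored rows are trusted.
    anchored: boolean mask marking the central entities (kept fixed).
    triples: list of (head, relation, tail) index tuples.
    relations: (n_relations, dim) translation vectors (assumed operator).
    """
    emb = embeddings.copy()
    known = anchored.copy()
    for _ in range(n_iter):
        sums = np.zeros_like(emb)
        counts = np.zeros(len(emb))
        for h, r, t in triples:
            if known[h]:  # push the head forward through the relation
                sums[t] += emb[h] + relations[r]
                counts[t] += 1
            if known[t]:  # pull the tail backward through the relation
                sums[h] += emb[t] - relations[r]
                counts[h] += 1
        # Average incoming messages; anchored entities never move.
        update = (counts > 0) & ~anchored
        emb[update] = sums[update] / counts[update][:, None]
        known |= update
    return emb

# Toy chain 0 -> 1 -> 2 with a single relation; only entity 0 is anchored.
start = np.zeros((3, 2))
mask = np.array([True, False, False])
rel = np.array([[1.0, 0.0]])
result = propagate(start, mask, [(0, 0, 1), (1, 0, 2)], rel)
```

Each iteration reaches one hop further from the anchored entity, which is why the loop is cheap: it is only sums and averages over arrays that are already in memory.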

Consistent with knowledge-graph embedding literature, this step represents relations as operators on the embedding space.

It also anchors the central entities.

4/10
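To make "relations as operators" concrete, here is a small sketch using two operators that are common in the knowledge-graph embedding literature: a translation (as in TransE) and an element-wise scaling (as in DistMult). Which operator the paper actually uses is not stated in this thread, and the embeddings below are made-up toy values.

```python
import numpy as np

def translate(head, relation):
    """TransE-style operator: the relation shifts the head embedding."""
    return head + relation

def scale(head, relation):
    """DistMult-style operator: the relation rescales each dimension."""
    return head * relation

def score(predicted_tail, tail):
    """Negative Euclidean distance: higher means a more plausible triple."""
    return -np.linalg.norm(predicted_tail - tail)

# Toy 3-dimensional embeddings (hypothetical values, for illustration only).
paris = np.array([1.0, 0.0, 2.0])
france = np.array([1.5, 1.0, 2.0])
berlin = np.array([4.0, 3.0, 0.0])
capital_of = np.array([0.5, 1.0, 0.0])

# Applying the relation to the head should land near the true tail.
predicted = translate(paris, capital_of)
good = score(predicted, france)
bad = score(translate(berlin, capital_of), france)
```

With these toy values, the triple (Paris, capital_of, France) scores higher than (Berlin, capital_of, France), which is the behaviour a trained model aims for.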

As opposed to contrastive learning, message passing helps embeddings represent the large-scale structure of the graph (it gives Arnoldi-type iterations).

3/10

How can we distill these into good downstream features, e.g. for machine learning?

The challenge is to create feature vectors, and for this, graph embeddings have been invaluable.

2/10
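As a rough illustration of "embeddings as feature vectors": a column of entity names in a table can be replaced by the corresponding embedding rows, giving a numeric matrix that any standard learner can use. The lookup table, the `featurize` helper, and the values are all hypothetical.

```python
import numpy as np

# Hypothetical pretrained entity embeddings (toy values, not real data).
entity_embeddings = {
    "Paris": np.array([0.9, 0.1]),
    "Lyon": np.array([0.7, 0.3]),
    "Berlin": np.array([0.2, 0.8]),
}

def featurize(entities, embeddings, dim=2):
    """Map each entity name to its embedding; unknown entities get zeros."""
    return np.stack([embeddings.get(name, np.zeros(dim)) for name in entities])

# A table column of entity mentions becomes a numeric feature matrix.
cities = ["Paris", "Berlin", "Atlantis"]
X = featurize(cities, entity_embeddings)
```

Falling back to zeros for unknown entities is just one simple choice; a real pipeline would need an entity-matching step between the table and the knowledge graph.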

(CPU, storage, network, cooling... all of that is inefficient)

radar.inria.fr/report/2024/...

All the time spent writing reports is time I don't spend on something else.

And who reads them?

www.gao.gov/products/gao...

But it's written on the official government forms that they have been evaluated

In the US they have (had?) more or less an agency that does that, right?

But 1) someone has to go back over the output, and 2) doing a useless task faster and worse does not make it useful, quite the opposite.

dl.acm.org/doi/10.1145/...

I genuinely think that we need to collectively act to change the narrative and social norms

They didn't, it seems 😀
