I am on the economics job market 2024/2025.

Currently visiting the EconCS group @ Harvard.

Tfwerner.com

When people are involved, even a "collusive" AI can lead to more competition and lower prices.

Full paper: arxiv.org/abs/2510.27636

Participants frequently override the AI's advice, resulting in longer price wars than in the baseline.

This is not profitable, but it punishes adopters & discourages delegation. Our evidence suggests that this is driven by spite towards AI, not myopia.

We thought the collusive AI would raise prices.

However, by the end, prices are significantly lower in both AI treatments than in the human-only BASELINE.

But control matters. Adoption is significantly higher when participants can override the AI (RECOMMENDATION).

1️⃣ BASELINE: Humans only.

2️⃣ OUTSOURCING: Full delegation to a collusive AI.

3️⃣ RECOMMENDATION: AI advice, but humans can override it.

So we ask: Will firms delegate to a collusive AI? And how does this strategic choice change market outcomes?

We hope this paper encourages discussion, collaboration, and improved safeguards.

🔗 arxiv.org/abs/2508.01390

Platforms that advertise "100% human" samples must be held accountable.

Researchers need tools and transparency to protect their work, and in some cases, it may be time to go back to the physical lab!

Some measures:

- reCAPTCHA and Cloudflare

- Multimodal instructions (images, audio)

- Input restrictions (no paste, voice answers)

- Behavioural logging

- Platform enforcement
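
To make the behavioural-logging idea concrete, here is a minimal sketch of how logged response metadata might be screened. Everything in it is an assumption for illustration: the `ResponseLog` fields, the `flag_response` helper, and the thresholds are hypothetical and do not come from the paper.

```python
from dataclasses import dataclass


@dataclass
class ResponseLog:
    """Hypothetical per-answer log collected by a survey front end."""
    participant_id: str
    text: str
    seconds_spent: float
    paste_events: int
    keystrokes: int


def flag_response(log: ResponseLog, max_chars_per_second: float = 10.0) -> list[str]:
    """Return the reasons an answer looks machine-assisted (illustrative heuristics only)."""
    reasons = []
    # Any paste into a free-text field is suspicious when pasting is disallowed.
    if log.paste_events > 0:
        reasons.append("paste")
    # Text that appears faster than a person could plausibly type it.
    if log.seconds_spent > 0 and len(log.text) / log.seconds_spent > max_chars_per_second:
        reasons.append("too_fast")
    # Far fewer keystrokes than characters suggests the text was not typed.
    if log.keystrokes < 0.5 * len(log.text):
        reasons.append("few_keystrokes")
    return reasons


typed = ResponseLog("p1", "I chose the lower price.", 30.0, 0, 28)
pasted = ResponseLog("p2", "x" * 200, 5.0, 1, 3)
print(flag_response(typed))
print(flag_response(pasted))
```

Flags like these are a starting point for manual review, not proof of LLM use; fast typists and autocomplete can trigger them too.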

🧠 Dampens variance

📈 Inflates effects

📊 Mocks WEIRD norms

🔍 Obscures who your participants really are

… all while staying nearly undetectable!

This is contamination, not noise!

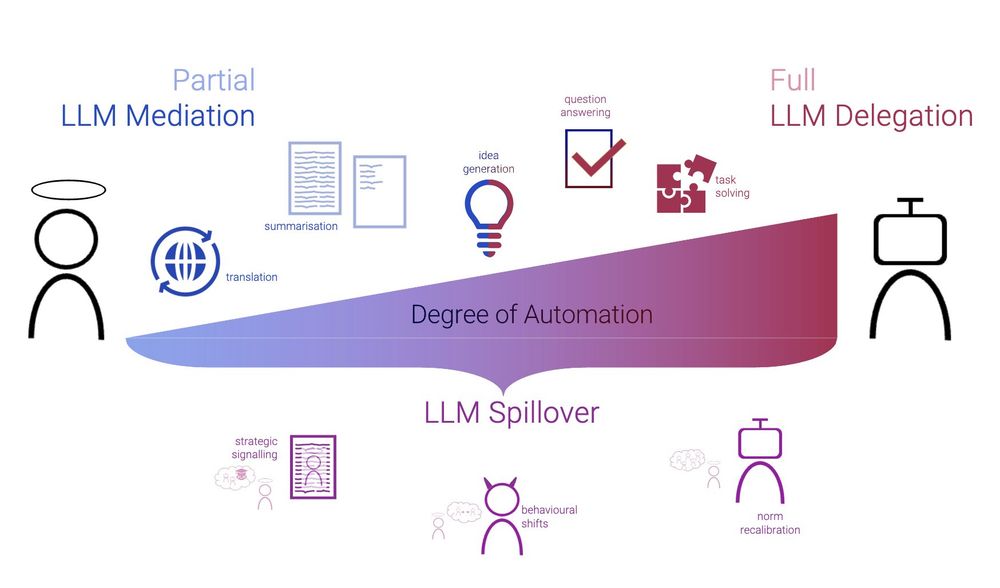

1. Partial Mediation: LLM assists with rephrasing or answering.

2. Full Delegation: Agents like OpenAI Operator handle everything.

3. Spillover: Humans act differently due to bot expectations.

(See figure below.)

LLM Pollution is not hypothetical. It is happening now.

We map three variants in the paper 👇
