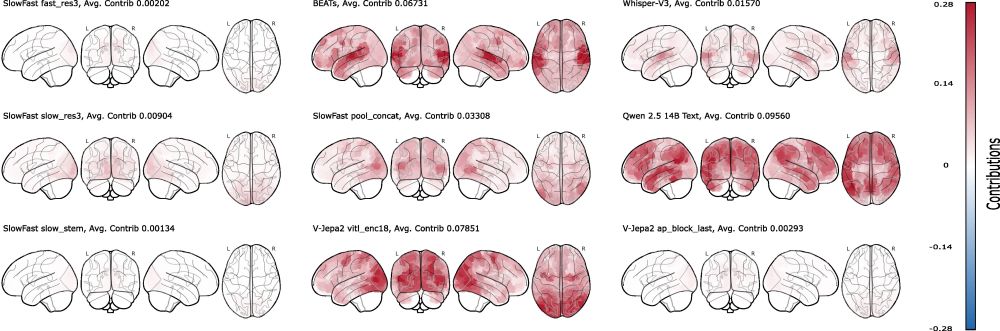

Feature attribution maps align with neuroanatomy, but crazily enough, textual features from the transcripts are the most predictive of all.

Competition submission (earlier iteration): r=0.3198 ID / 0.2096 OOD → 1st in Phase-1, 2nd overall.

vs baseline 0.2033/0.0895 → +0.119/+0.123.

@keckjanis.bsky.social, Viktor Studenyak, Daniel Schad, Aleksandr Shpilevoi. Huge thanks to @andrejbicanski.bsky.social and @doellerlab.bsky.social for support. Report: arxiv.org/abs/2507.17958

Pixel-wise Shapley Modes reveal the inverted CNN hierarchy: first transposed-conv layer shapes high-level facial parts; final layer merely renders RGB channels.

Calculated expert-level contributions of an MoE-based LLM across arithmetic, language ID, and factual recall. Found one expert that was super-important for all domains. Also found redundant experts whose removal barely reduces performance.

Neural computations within a three-layer MNIST MLP were analysed. L1/L2 regularisation funnels computations into a few neurons. Also, contrary to popular belief, large weights do not imply high importance of neural units.
