"What if we offered Customer 56 a 1-year contract?"

Using fastexplain(model_results, method = "counterfactual", observation = risky_customer), we see that moving from "Month-to-Month" to "One Year" drops their churn risk from ~76% to ~26%.
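For intuition on what a what-if like this does, here is a minimal base-R sketch. It does not use fastml: the toy data, the glm model, and the names toy, fit, customer, and what_if are all assumptions made up for illustration. The idea is just to copy the row, change one feature, and re-predict.

```r
# Toy data, simulated for illustration (not the wa_churn dataset)
set.seed(42)
n <- 500
toy <- data.frame(
  contract = factor(sample(c("Month-to-Month", "One Year"), n, replace = TRUE)),
  tenure   = sample(1:72, n, replace = TRUE)
)
# Simulated churn: month-to-month contracts and low tenure raise the risk
lin <- 1.0 - 2.0 * (toy$contract == "One Year") - 0.04 * toy$tenure
toy$churn <- rbinom(n, 1, plogis(lin))

fit <- glm(churn ~ contract + tenure, data = toy, family = binomial)

# One customer, and the same customer with a different contract
customer <- data.frame(
  contract = factor("Month-to-Month", levels = levels(toy$contract)),
  tenure   = 3
)
what_if <- customer
what_if$contract <- factor("One Year", levels = levels(toy$contract))

p_before <- predict(fit, newdata = customer, type = "response")
p_after  <- predict(fit, newdata = what_if,  type = "response")
c(before = unname(p_before), after = unname(p_after))
```

Everything except the contract is held fixed, so the gap between the two probabilities is the model's estimate of that one change.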

Let's look at Customer 56. They have a 76% probability of churning. Why?

Using fastexplain(model_results, method = "breakdown", observation = risky_customer), we see the additive drivers:

🔴 Fiber Optic Internet (+10.8%)

🔴 Low Tenure (+13.4%)
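What "additive drivers" means can be sketched in base R. This is a simplified, order-dependent break-down on made-up data, not necessarily what fastexplain computes internally; the names toy, fit, obs, and contrib are hypothetical. Start from the average prediction, fix one feature at a time to the customer's value, and record how the average moves.

```r
# Toy data, simulated for illustration (not the wa_churn dataset)
set.seed(1)
n <- 400
toy <- data.frame(
  fiber  = rbinom(n, 1, 0.4),
  tenure = sample(1:72, n, replace = TRUE)
)
toy$churn <- rbinom(n, 1, plogis(-0.5 + 1.0 * toy$fiber - 0.04 * toy$tenure))
fit <- glm(churn ~ fiber + tenure, data = toy, family = binomial)

# The customer we want to explain
obs <- data.frame(fiber = 1, tenure = 2)

features <- c("fiber", "tenure")
current  <- toy[, features]
baseline <- mean(predict(fit, newdata = current, type = "response"))
contrib  <- numeric(0)
prev     <- baseline
for (f in features) {
  current[[f]] <- obs[[f]]   # set this feature to the customer's value in every row
  now <- mean(predict(fit, newdata = current, type = "response"))
  contrib[f] <- now - prev   # shift in the average prediction
  prev <- now
}

final <- unname(predict(fit, newdata = obs, type = "response"))
round(c(baseline = baseline, contrib, prediction = final), 3)
```

The baseline plus the contributions adds up to the customer's own prediction, which is what makes the percentages readable as a running total.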

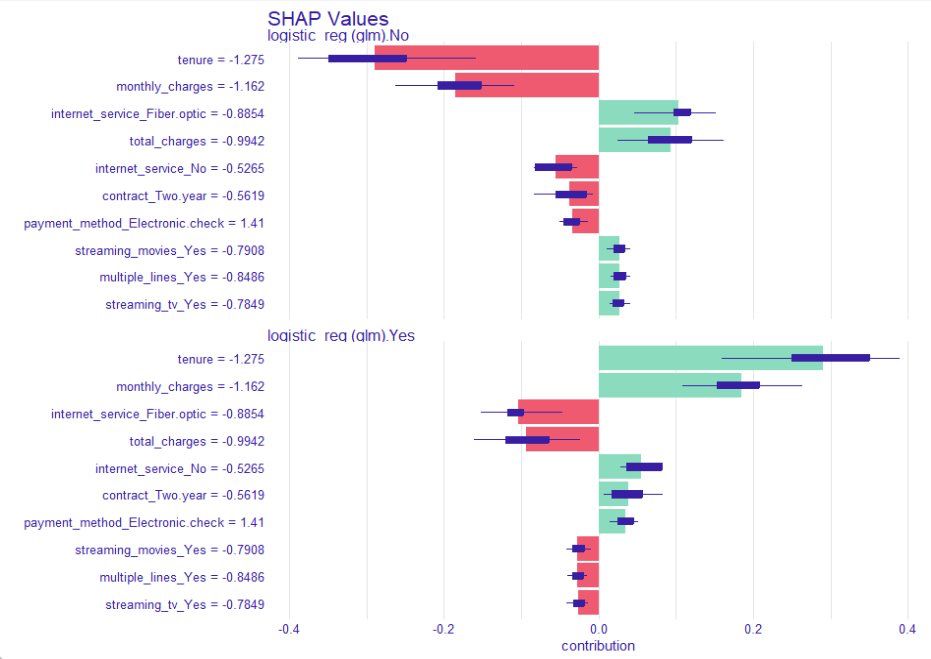

We can’t trust a black box. Running fastexplain(model_results, method = "dalex") reveals the drivers across the whole company.

📉 Tenure and Contract Type are the biggest predictors of churn.
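One common way to rank global drivers is permutation importance: shuffle a column and see how much the model's error grows. The base-R sketch below shows the idea on simulated data. Whether fastexplain does exactly this internally is an assumption; the names toy, fit, and brier are made up for illustration.

```r
# Toy data, simulated for illustration (not the wa_churn dataset)
set.seed(7)
n <- 1000
toy <- data.frame(
  tenure = sample(1:72, n, replace = TRUE),
  noise  = rnorm(n)   # unrelated to churn by construction
)
toy$churn <- rbinom(n, 1, plogis(1 - 0.06 * toy$tenure))
fit <- glm(churn ~ tenure + noise, data = toy, family = binomial)

# Brier score: mean squared gap between predicted probability and outcome
brier <- function(data) {
  mean((predict(fit, newdata = data, type = "response") - data$churn)^2)
}

base_loss <- brier(toy)
importance <- sapply(c("tenure", "noise"), function(f) {
  shuffled <- toy
  shuffled[[f]] <- sample(shuffled[[f]])   # break the link to the outcome
  brier(shuffled) - base_loss              # how much worse did the model get?
})
importance
```

A feature the model leans on shows a clear jump in loss when shuffled; a feature it ignores stays near zero.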

Who won? Surprisingly, Logistic Regression took the crown 👑 with an AUC of 0.846, beating Random Forest and XGBoost.

summary(model_results) gives you metrics formatted and ready for reporting, and plot(model_results, type = "roc") visualizes the ROC curves.
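For readers new to the metric: AUC is the probability that a randomly chosen churner gets a higher score than a randomly chosen non-churner. A small base-R sketch using the rank (Mann-Whitney) formulation follows; the helper name auc and the four-row example are illustrative, not part of fastml.

```r
# AUC via ranks: share of (positive, negative) pairs ordered correctly
auc <- function(score, label) {
  r  <- rank(score)          # average ranks, so ties count as half
  n1 <- sum(label == 1)
  n0 <- sum(label == 0)
  (sum(r[label == 1]) - n1 * (n1 + 1) / 2) / (n1 * n0)
}

label <- c(0, 0, 1, 1)
auc(c(0.1, 0.4, 0.35, 0.8), label)   # 0.75: 3 of the 4 pairs are ordered correctly
```

An AUC of 0.5 is a coin flip and 1.0 is a perfect ranking, so 0.846 means the model orders most churner/non-churner pairs correctly.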

We pass the raw wa_churn dataset to fastml().

It automatically:

✅ Handles missing values (medianImpute)

✅ Encodes categoricals

✅ Splits data

✅ Runs Bayesian Optimization on XGBoost, RF, and LogReg.

No recipes. No boilerplate. Just results. ⚡️
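To make two of those steps concrete, here is what splitting and median imputation look like by hand in base R. The ordering shown (split first, then impute with the training median) is one leakage-safe way to do it and is an assumption about the general approach, not a description of fastml's internals; the toy column charges is made up.

```r
# Toy column with missing values, made up for illustration
set.seed(3)
toy <- data.frame(charges = c(20, NA, 55, 70, NA, 90, 35, 60, 80, 45))

# 80/20 split first, so the test rows never influence the imputation
train_idx <- sample(nrow(toy), size = 0.8 * nrow(toy))
train <- toy[train_idx, , drop = FALSE]
test  <- toy[-train_idx, , drop = FALSE]

# Median comes from the training rows only, then fills both sets
med <- median(train$charges, na.rm = TRUE)
train$charges[is.na(train$charges)] <- med
test$charges[is.na(test$charges)]   <- med
```

Doing this by hand for every fold and every column is the boilerplate that an automated pipeline takes off your plate.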

• Automated model training and tuning

• Leakage-safe resampling by design

• Built-in survival analysis

• Integrated explainability

A streamlined way to build reliable models with minimal code.

#rstats #machinelearning #datascience
