👏Eray Erturk, Saba Hashemi

See Eray at #NeurIPS2025

📍Poster Session 1, Hall C,D,E #2115 | Wed 3 Dec | 11AM - 2PM @neuripsconf.bsky.social

📃 openreview.net/forum?id=hT7...

🖥️ github.com/ShanechiLab/...

✅ Substantial decoding performance improvement over several LFP-only baselines

✅ Consistent improvements in unsupervised, supervised & multi-session distillation setups

✅ Generalization to unseen sessions without additional distillation

✅ Spike-aligned LFP latent structure

1️⃣ Pretrains a multi-session spike model

2️⃣ Fine-tunes the multi-session spike model on new spike signals

3️⃣ Trains the Distilled LFP model via cross-modal representation alignment

🔥 This produces spike-informed LFP models with significantly improved decoding.
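A minimal sketch of the idea behind step 3, under loud assumptions. The posts do not specify the alignment objective or the architectures, so here the spike model's latents are stood in for by fixed synthetic values `z_spike`, the LFP model is a hypothetical linear encoder `W`, and alignment is plain mean-squared error minimized by gradient descent. It shows only the general pattern of cross-modal distillation (frozen teacher latents used as targets for a student on another modality), not the paper's method.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes: T time steps, n_lfp LFP features, d latent dimensions.
T, n_lfp, d = 500, 20, 4

# Stand-in for per-time-step latents from the frozen (pretrained, then fine-tuned)
# spike model. In a real pipeline these would come from the spike model's encoder.
z_spike = rng.standard_normal((T, d))

# Synthetic LFP features that carry the same latent information plus noise.
mixing = rng.standard_normal((d, n_lfp))
lfp = z_spike @ mixing + 0.1 * rng.standard_normal((T, n_lfp))


def alignment_loss(W, lfp, z_spike):
    """Mean squared error between the LFP model's latents and the spike latents."""
    return np.mean((lfp @ W - z_spike) ** 2)


def distill_linear_lfp_encoder(lfp, z_spike, lr=0.02, steps=3000):
    """Fit a hypothetical linear LFP encoder W so that lfp @ W matches z_spike.

    Only W is updated; the spike latents stay fixed as teacher targets.
    """
    W = np.zeros((lfp.shape[1], z_spike.shape[1]))
    for _ in range(steps):
        residual = lfp @ W - z_spike                   # (T, d)
        grad = 2.0 * lfp.T @ residual / residual.size  # gradient of the MSE w.r.t. W
        W -= lr * grad
    return W


W = distill_linear_lfp_encoder(lfp, z_spike)
print("loss before:", alignment_loss(np.zeros_like(W), lfp, z_spike))
print("loss after: ", alignment_loss(W, lfp, z_spike))
```

The teacher targets never change during training, which is what makes this distillation rather than joint training of both models.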

🧠 LFPs (local field potentials) are used in many #BCIs and are highly stable, but they often underperform spikes in behavior decoding.

❓ We ask: Can we transfer representational knowledge from spike models → LFP models?

✅ Answer: Yes — and the gains are substantial!

PSID & DPAD (Nat Neuro 2021 & 2024), IPSID (PNAS 2024), PGLDM (NeurIPS 2024), BRAID (ICLR 2025)

📜 Paper: openreview.net/pdf?id=k4KVh...

💻Code: github.com/shanechiLab/...

✅ Self-attention improves neural-behavior predictions by learning long-range patterns, while convolutions learn local ones

✅ Two-stage learning improves behavior prediction by disentangling behaviorally relevant dynamics

✅ Operates directly on raw images & avoids preprocessing.

✅ Combines self-attention and convolutional layers to model both global and local patterns.

✅ Uses two-stage learning of convolutional RNNs (ConvRNNs) to disentangle behaviorally relevant and other neural dynamics.
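To illustrate why pairing the two layer types helps, here is a toy NumPy block. It is an assumption-laden sketch, not the paper's architecture: single-head scaled dot-product self-attention lets every time step draw on every other step (global), while a width-3 temporal convolution only mixes neighbouring steps (local). The input is assumed to be a `(T, C)` sequence such as a per-frame feature vector; the posts' model works on raw images, which this sketch does not handle, and all weights are random placeholders.

```python
import numpy as np

rng = np.random.default_rng(0)


def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # subtract the max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def self_attention(x, Wq, Wk, Wv):
    """Single-head scaled dot-product self-attention over time; x: (T, C).

    Every output step can attend to every input step (long-range patterns).
    """
    q, k, v = x @ Wq, x @ Wk, x @ Wv
    scores = q @ k.T / np.sqrt(k.shape[1])  # (T, T) pairwise similarities
    return softmax(scores, axis=-1) @ v      # (T, D)


def temporal_conv(x, kernel):
    """Same-length 1D convolution over time (cross-correlation, as in DL libraries).

    kernel: (K, C, D) with K odd. Each output step only sees K neighbouring
    input steps (local patterns).
    """
    K = kernel.shape[0]
    pad = K // 2
    xp = np.pad(x, ((pad, pad), (0, 0)))
    return np.stack([np.einsum("kc,kcd->d", xp[t:t + K], kernel)
                     for t in range(x.shape[0])])


def hybrid_block(x, Wq, Wk, Wv, kernel):
    """Sum the global (attention) and local (convolution) paths plus a residual."""
    return x + self_attention(x, Wq, Wk, Wv) + temporal_conv(x, kernel)


T, C = 50, 8
x = rng.standard_normal((T, C))
Wq, Wk, Wv = (0.1 * rng.standard_normal((C, C)) for _ in range(3))
kernel = 0.1 * rng.standard_normal((3, C, C))
y = hybrid_block(x, Wq, Wk, Wv, kernel)
print(y.shape)  # (50, 8)
```

Summing the two paths with a residual connection is just one simple way to combine them, chosen here for brevity; stacking or gating the layers would be equally plausible.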

See Parsa Vahidi at #ICLR2025!

📍Poster Session 5, Hall 3+Hall 2B #57 | Sat 4/26 | 10AM - 12:30PM

📜 openreview.net/forum?id=3us...

💻 github.com/ShanechiLab/...

✅ Disentangles intrinsic behaviorally relevant neural dynamics from input, neural-specific & behavior-specific dynamics

✅ Captures nonlinearity

It is a multi-stage RNN: each stage learns a subtype of dynamics & combines a predictor network w/ a generative network to learn intrinsic dynamics.
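The staged idea can be illustrated with a static, linear toy analogue. This is a simplification and not the multi-stage RNN itself: there is no recurrence, no input modeling, and no predictor/generative networks. Stage 1 uses reduced-rank regression to find neural projections that predict behavior; stage 2 removes what those latents explain and runs PCA on the remaining neural activity. The synthetic data and all function names are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(1)
T, n_neural = 1000, 12

# Hypothetical synthetic data: 2 behaviorally relevant factors and 2 irrelevant
# factors; the irrelevant ones get larger variance on purpose.
f_rel = rng.standard_normal((T, 2))
f_irr = 3.0 * rng.standard_normal((T, 2))
C_rel = rng.standard_normal((2, n_neural))
C_irr = rng.standard_normal((2, n_neural))
neural = f_rel @ C_rel + f_irr @ C_irr + 0.1 * rng.standard_normal((T, n_neural))
L = np.array([[1.0, 0.5], [-0.5, 1.0]])  # fixed factor-to-behavior map
behavior = f_rel @ L + 0.1 * rng.standard_normal((T, 2))


def stage1_behavior_latents(neural, behavior, rank):
    """Stage 1: reduced-rank regression; latents are neural projections predicting behavior."""
    B, *_ = np.linalg.lstsq(neural, behavior, rcond=None)  # OLS map (n_neural, n_beh)
    _, _, Vt = np.linalg.svd(neural @ B, full_matrices=False)
    W1 = B @ Vt[:rank].T                                     # top-`rank` directions
    return neural @ W1, W1


def stage2_residual_latents(neural, latents1, rank):
    """Stage 2: regress out stage-1 latents, then PCA on the leftover neural activity."""
    A, *_ = np.linalg.lstsq(latents1, neural, rcond=None)
    residual = neural - latents1 @ A
    residual = residual - residual.mean(axis=0)
    _, _, Vt = np.linalg.svd(residual, full_matrices=False)
    W2 = Vt[:rank].T
    return residual @ W2, W2


lat1, W1 = stage1_behavior_latents(neural, behavior, rank=2)
lat2, W2 = stage2_residual_latents(neural, lat1, rank=2)
print(lat1.shape, lat2.shape)  # (1000, 2) (1000, 2)
```

Because the behavior-irrelevant factors have larger variance, PCA on the raw neural activity would tend to rank them first; fitting behavior in stage 1 keeps them from crowding out the behaviorally relevant latents.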

📍 Poster Session 1, Hall 3 + Hall 2B, #68 | Thu, Apr 24 | 10 AM - 12:30 PM

Poster: iclr.cc/virtual/2025...

📜 💻Paper and code: openreview.net/pdf?id=mkDam...

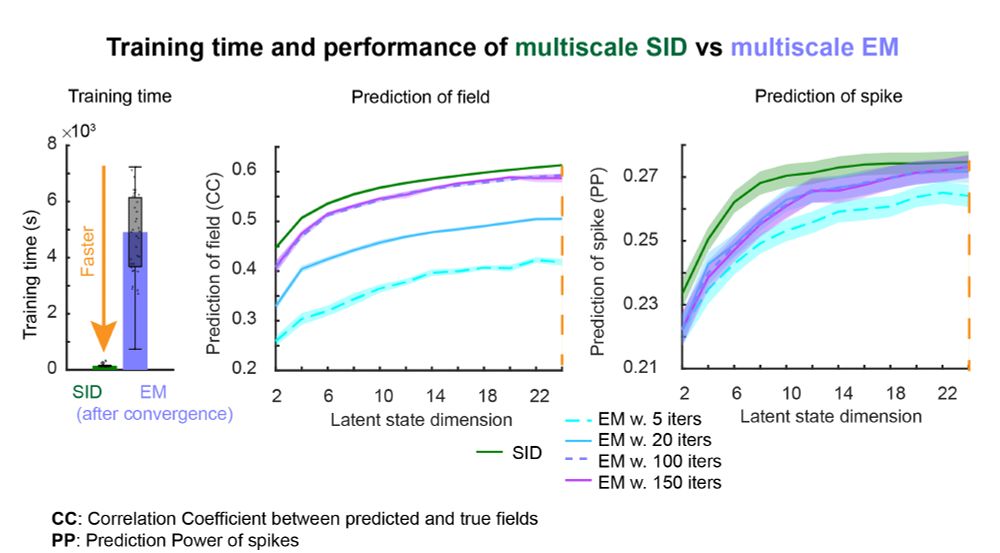

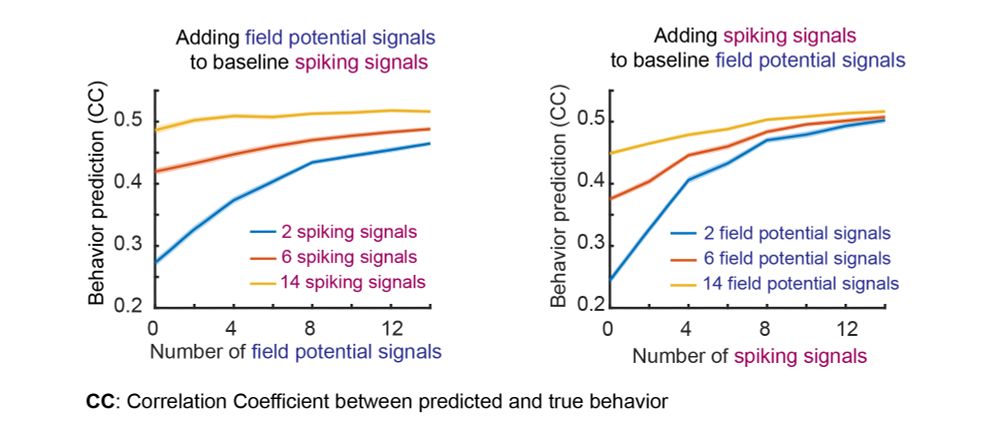

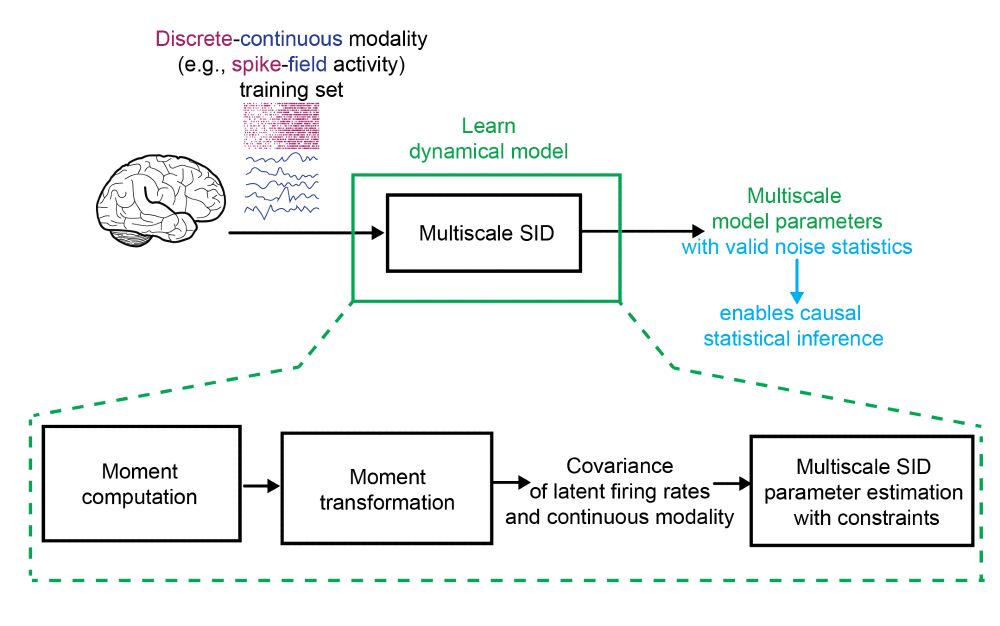

👏 Congrats Parima Ahmadipour & Omid Sani. Thanks to collaborator Bijan Pesaran.

📜Paper: iopscience.iop.org/article/10.1...

💻Code: github.com/ShanechiLab/...
