Using complexity theory and formal languages to understand the power and limits of LLMs

https://lambdaviking.com/ https://github.com/viking-sudo-rm

(we’re excited to share more results ourselves soon🤐)

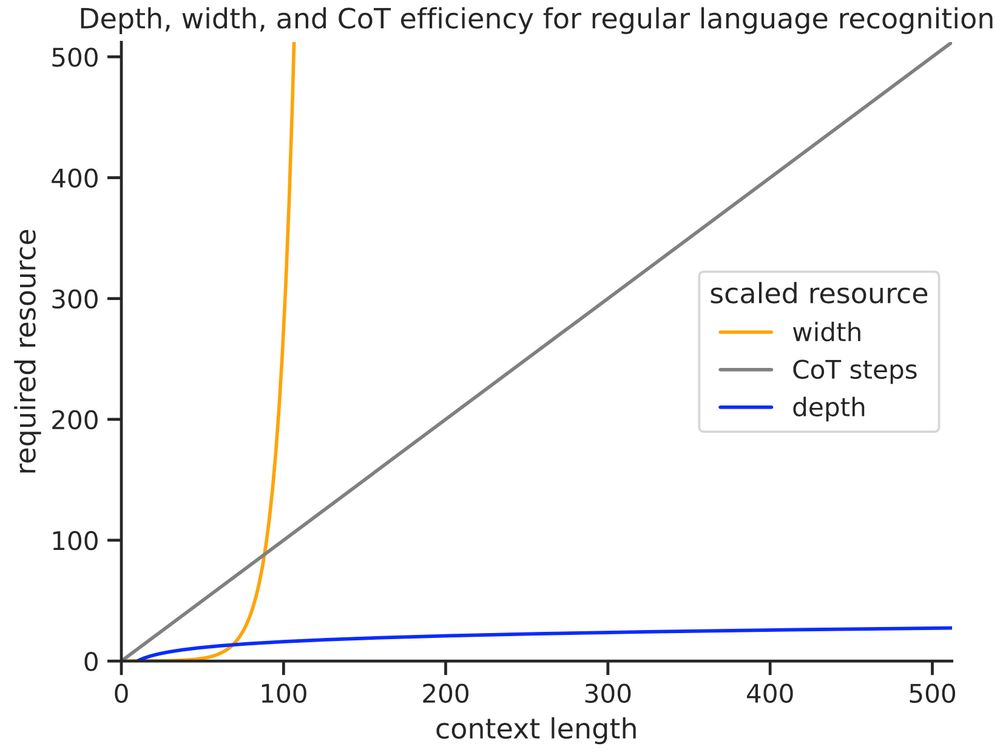

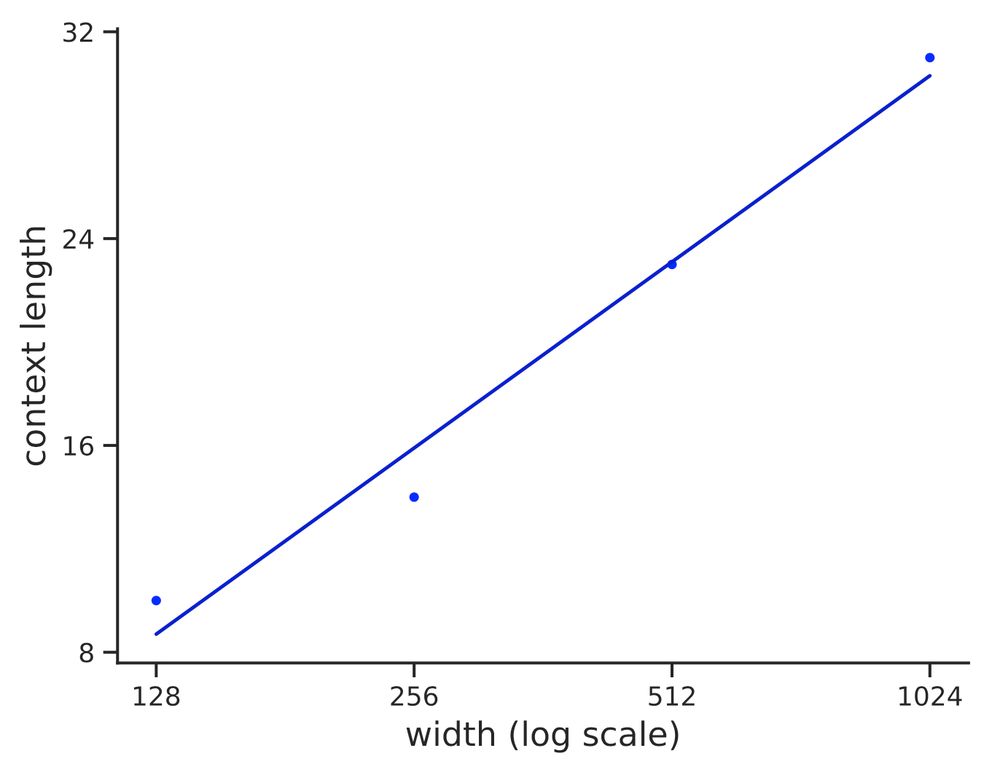

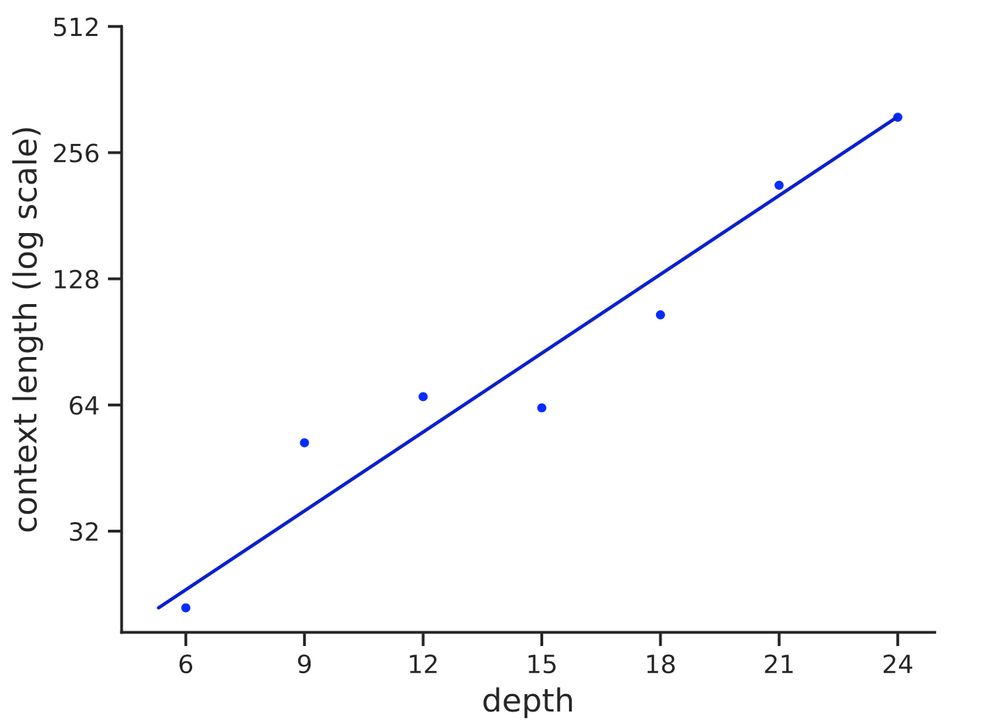

We find that the depth required to recognize strings of length n grows ~ log n, with r^2 = 0.93. Thus, log depth appears necessary and sufficient to recognize regular languages in practice, matching our theory.
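For intuition on why log depth can suffice for regular languages, here is a minimal sketch of the classic parallel-composition argument. It is not the transformer construction itself. Each symbol is turned into a state-to-state map, and adjacent maps are merged pairwise, so n symbols collapse in about log2(n) rounds. The parity automaton and all names below (`DELTA`, `recognize_log_depth`, and so on) are hypothetical, chosen only for illustration.

```python
# Hypothetical example DFA: accepts strings with an even number of 'a's.
STATES = (0, 1)
START, ACCEPT = 0, {0}
DELTA = {(0, "a"): 1, (1, "a"): 0, (0, "b"): 0, (1, "b"): 1}


def as_map(symbol):
    """Transition function of one symbol, as a tuple indexed by state."""
    return tuple(DELTA[(q, symbol)] for q in STATES)


def compose(f, g):
    """Apply f first, then g. Function composition is associative."""
    return tuple(g[f[q]] for q in STATES)


def recognize_sequential(s):
    """Baseline: one step per symbol, so n sequential steps."""
    q = START
    for c in s:
        q = DELTA[(q, c)]
    return q in ACCEPT


def recognize_log_depth(s):
    """Merge adjacent maps pairwise; return (accepted, number_of_rounds)."""
    maps = [as_map(c) for c in s]
    rounds = 0
    while len(maps) > 1:
        merged = [compose(maps[i], maps[i + 1]) for i in range(0, len(maps) - 1, 2)]
        if len(maps) % 2:  # odd one out is carried to the next round unchanged
            merged.append(maps[-1])
        maps = merged
        rounds += 1
    final = maps[0][START] if maps else START
    return final in ACCEPT, rounds


if __name__ == "__main__":
    print(recognize_log_depth("abab"))      # 4 symbols
    print(recognize_log_depth("a" * 1000))  # 1000 symbols
```

Each round here stands in for one layer of parallel work, so the round count is the quantity that grows like log n.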

♟️State tracking (regular languages)

🔍Graph search (connectivity)
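As a rough illustration of why graph connectivity fits a logarithmic number of parallel rounds, the sketch below uses the textbook repeated-squaring idea: each round doubles the path length covered by a boolean reachability matrix. This is an assumption-laden analogy, not the transformer construction, and the function name `reachable` and its signature are hypothetical.

```python
def reachable(n, edges, source, target):
    """Directed reachability by repeated squaring.

    Returns (is_reachable, rounds). After each round, reach[i][j] is True
    iff there is a path from i to j of length at most `bound`.
    """
    reach = [[i == j for j in range(n)] for i in range(n)]
    for u, v in edges:
        reach[u][v] = True

    bound, rounds = 1, 0
    while bound < n - 1:  # simple paths have length at most n - 1
        reach = [
            [any(reach[i][k] and reach[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)
        ]
        bound *= 2
        rounds += 1
    return reach[source][target], rounds


if __name__ == "__main__":
    # Hypothetical test graph: a directed path 0 -> 1 -> ... -> 7.
    path_edges = [(i, i + 1) for i in range(7)]
    print(reachable(8, path_edges, 0, 7))
    print(reachable(8, path_edges, 7, 0))
```

The number of rounds is about log2(n), while each round does a lot of work in parallel, which mirrors the depth-versus-width trade-off in question.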

🤔Are log-depth transformers more powerful than fixed-depth transformers?

But what if we only care about reasoning over short inputs? Or if the transformer’s depth can grow 🤏slightly with input length?

The Vikings call, say now,

OLMo 2, the ruler of languages.

May your words fly over the seas,

all over the world, for you are wise.

Wordsmith, balanced and aligned,

for you the skalds themselves sing,

your soul, which hears new lifeforms,

may it live long and tell a saga.

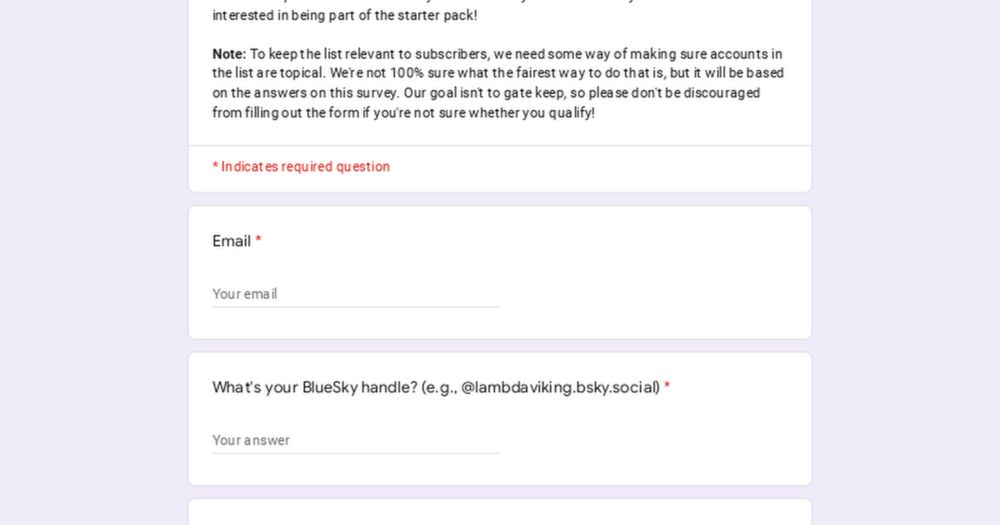

docs.google.com/forms/d/e/1F...
