jesseba.github.io

To test this, we teleported residual-stream (RS) vectors to different positions in PCA space and tracked how they evolved in later layers.

Result? Early layers show attractor-like behavior, pushing the displaced activations back toward their “natural” trajectory.
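
A minimal sketch of the “teleport” step, under our own assumptions: one layer's RS activations are collected as a (tokens × d_model) matrix, “position in PCA space” means coordinates along the top-k principal components, and the part of the vector outside that subspace is left unchanged. The function names are hypothetical. In a real run, the edited vector would be written back into the forward pass (e.g. via a hook), and the perturbed trajectory compared layer by layer with the unperturbed one.

```python
import numpy as np

def fit_pca(X: np.ndarray, k: int):
    """Return the mean and the top-k principal axes (as rows) of X, shape (n, d)."""
    mu = X.mean(axis=0)
    # Rows of Vt are orthonormal principal directions, sorted by singular value.
    _, _, Vt = np.linalg.svd(X - mu, full_matrices=False)
    return mu, Vt[:k]

def teleport(x: np.ndarray, mu: np.ndarray, axes: np.ndarray,
             target: np.ndarray) -> np.ndarray:
    """Move x so its top-k PCA coordinates equal `target`.

    Only the in-subspace part changes; the component of x orthogonal
    to the PCA subspace is kept as-is (an assumption of this sketch).
    """
    coords = axes @ (x - mu)              # current PCA coordinates, shape (k,)
    return x + axes.T @ (target - coords)

def distance_to_natural(perturbed: np.ndarray, natural: np.ndarray) -> np.ndarray:
    """Per-layer Euclidean distance between two (layers, d) trajectories."""
    return np.linalg.norm(perturbed - natural, axis=1)
```

If early layers act as attractors, `distance_to_natural` should shrink over the layers right after the teleport.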

The CAE finds low-dimensional population dynamics: early layers are harder to reconstruct, while later layers become lower-dimensional and more structured.
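
The CAE itself isn't spelled out here, so this is a swapped-in, simpler proxy rather than the CAE: the participation ratio, a common linear estimate of effective dimensionality computed from the covariance eigenvalues of one layer's activations. If later layers really are lower-dimensional, it should drop with depth. The (tokens × d_model) input layout is our assumption.

```python
import numpy as np

def participation_ratio(X: np.ndarray) -> float:
    """Effective dimensionality of X, shape (n_samples, n_features).

    PR = (sum of eigenvalues)^2 / (sum of squared eigenvalues) of the
    covariance matrix. It is 1 when all variance lies along one direction
    and n_features when variance is spread evenly across all of them.
    """
    Xc = X - X.mean(axis=0)
    cov = Xc.T @ Xc / (len(X) - 1)
    eig = np.linalg.eigvalsh(cov)        # symmetric matrix -> real eigenvalues
    eig = np.clip(eig, 0.0, None)        # drop tiny negative round-off
    return float(eig.sum() ** 2 / (eig ** 2).sum())
```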

Some units spin around a fixed point, others spiral outward. On average, RS units circle ~10 times over 64 layers.
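
One way to count how many times a unit circles, assuming each unit's path across layers can be drawn as a 2-D curve (how that 2-D view is built isn't stated, so the input here is hypothetical): unwrap the angle around a center point and divide the total swept angle by 2π. This only works if consecutive points move less than half a turn around the center.

```python
import math
from typing import Optional, Sequence, Tuple

def winding_turns(points: Sequence[Tuple[float, float]],
                  center: Optional[Tuple[float, float]] = None) -> float:
    """Signed number of turns a 2-D trajectory makes around `center`.

    `center` defaults to the centroid of the points. Positive means
    counter-clockwise. Assumes each step sweeps less than pi radians.
    """
    if center is None:
        cx = sum(p[0] for p in points) / len(points)
        cy = sum(p[1] for p in points) / len(points)
    else:
        cx, cy = center
    total = 0.0
    prev = math.atan2(points[0][1] - cy, points[0][0] - cx)
    for x, y in points[1:]:
        ang = math.atan2(y - cy, x - cx)
        # Unwrap: map each angular step into [-pi, pi).
        step = (ang - prev + math.pi) % (2 * math.pi) - math.pi
        total += step
        prev = ang
    return total / (2 * math.pi)
```

For a unit spiraling outward, pass the fixed point explicitly as `center` rather than relying on the centroid.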

Translation: The model’s internal representations become more aligned as we move deeper through the model. But they also accelerate!
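
“Aligned” and “accelerate” aren't defined precisely here. A minimal sketch under one plausible reading (our assumption, not necessarily the metric used): alignment is the cosine similarity between consecutive layers' RS vectors for a token, speed is the norm of each layer-to-layer update, and “accelerating” means those step sizes grow with depth.

```python
import math
from typing import List, Sequence

Vec = Sequence[float]

def cosine(a: Vec, b: Vec) -> float:
    """Cosine similarity between two nonzero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def layer_alignment(traj: Sequence[Vec]) -> List[float]:
    """Cosine similarity between each layer's vector and the next layer's."""
    return [cosine(traj[i], traj[i + 1]) for i in range(len(traj) - 1)]

def layer_speed(traj: Sequence[Vec]) -> List[float]:
    """Euclidean norm of each layer-to-layer update (the 'velocity')."""
    return [math.dist(traj[i], traj[i + 1]) for i in range(len(traj) - 1)]

def layer_acceleration(traj: Sequence[Vec]) -> List[float]:
    """Change in step size from one update to the next."""
    s = layer_speed(traj)
    return [s[i + 1] - s[i] for i in range(len(s) - 1)]
```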

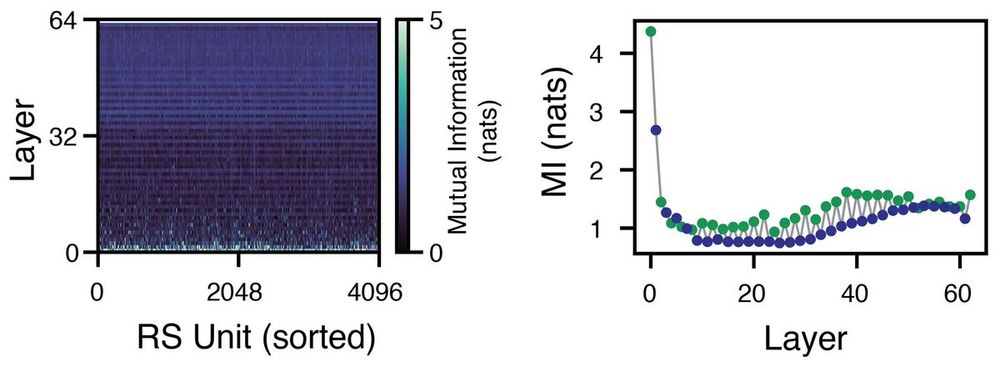

A given unit’s activation is highly correlated from one sublayer to the next, and more strongly within a layer (attention to MLP) than across layers.
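
A minimal sketch of that comparison, assuming we record one unit's activation over the same set of tokens at each sublayer output, ordered attn_0, mlp_0, attn_1, mlp_1, … (the ordering and naming are our assumptions). Pairs (attn_l, mlp_l) count as “within a layer”; pairs (mlp_l, attn_{l+1}) count as “across layers”.

```python
import math
from statistics import fmean
from typing import Dict, List, Sequence

def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation of two equal-length, non-constant sequences."""
    mx, my = fmean(x), fmean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def within_vs_across(acts: Sequence[Sequence[float]]) -> Dict[str, float]:
    """acts[k] holds one unit's activations over tokens at sublayer k,
    ordered attn_0, mlp_0, attn_1, mlp_1, ...

    Returns the mean correlation of within-layer pairs (attn_l -> mlp_l)
    and of across-layer pairs (mlp_l -> attn_{l+1}).
    """
    within: List[float] = []
    across: List[float] = []
    for k in range(len(acts) - 1):
        r = pearson(acts[k], acts[k + 1])
        # Even k starts at an attention output, so k -> k+1 stays in one layer.
        (within if k % 2 == 0 else across).append(r)
    return {"within": fmean(within), "across": fmean(across)}
```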

Early layers are sparse, later layers are dense. Why? There’s no a priori reason for this—blocks could just as easily write balancing negative values. But they don’t.
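
How sparsity was measured isn't stated. As one swapped-in option, Hoyer's sparsity measure scores a block's output vector from 0 (all entries equal in magnitude) to 1 (a single nonzero entry). Note that a block writing balancing positive and negative values of equal size would score as fully dense.

```python
import math
from typing import Sequence

def hoyer_sparsity(v: Sequence[float]) -> float:
    """Hoyer's measure: 0 = all |entries| equal, 1 = exactly one nonzero entry."""
    n = len(v)
    l1 = sum(abs(x) for x in v)
    l2 = math.sqrt(sum(x * x for x in v))
    if n < 2 or l2 == 0.0:
        raise ValueError("need at least 2 entries and a nonzero vector")
    return (math.sqrt(n) - l1 / l2) / (math.sqrt(n) - 1)
```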

and I went on a little side-quest in which we took a page out of neuroscience to see if we can understand AI—LLMs in this case—a little better. 🧵