currently doing PhD @uwcse,

prev @usyd @ai2

🇦🇺🇨🇦🇬🇧

ivison.id.au

Many thanks to my collaborators on this, the dream team of @muruzhang.bsky.social, Faeze Brahman, Pang Wei Koh, and @pdasigi.bsky.social!

💻 github.com/hamishivi/au...

📚 huggingface.co/collections/...

📄 arxiv.org/abs/2503.01807

Although this method is a common baseline, with a little tuning it outperforms more costly alternatives such as LESS.

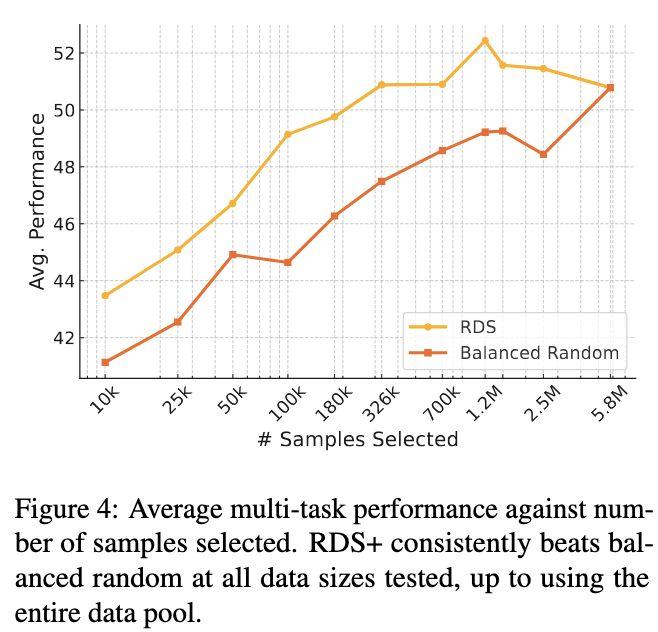

We select 10k samples from a downsampled pool of 200k samples, and then test selecting 10k samples from all 5.8M samples. Surprisingly, many methods drop in performance when the pool size increases!

📜 Paper: arxiv.org/abs/2502.13917

🧑‍💻 Code: github.com/hamishivi/te...

🤖 Demo: huggingface.co/spaces/hamis...

🧠 Models: huggingface.co/collections/...

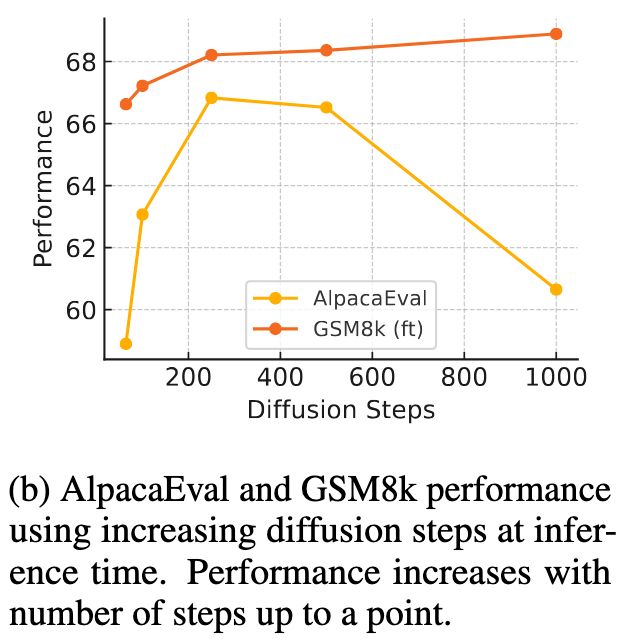

(1) Using more diffusion steps

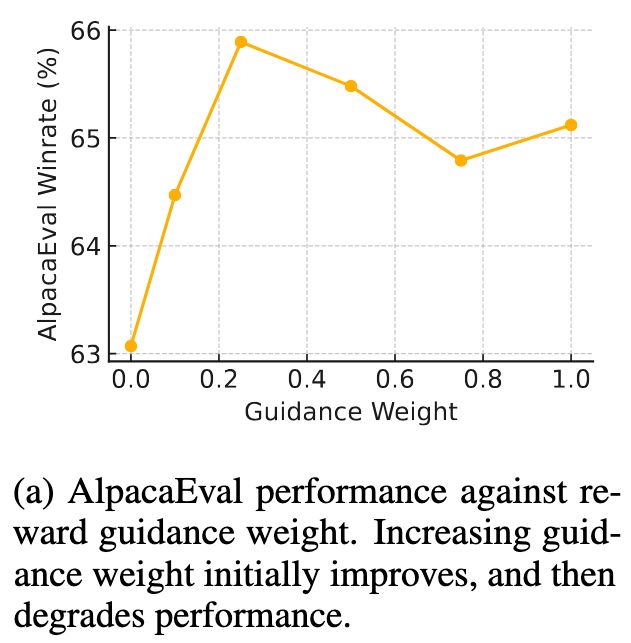

(2) Using reward guidance

Explained below 👇

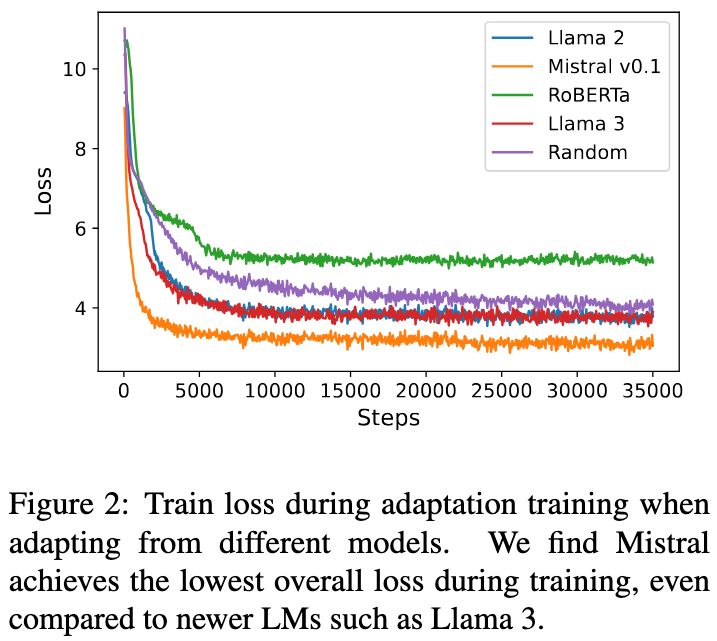

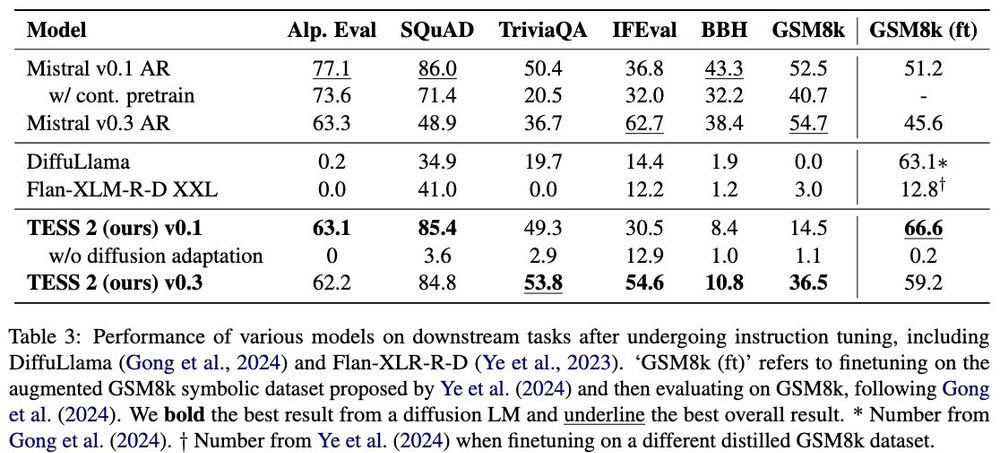

It may be that instruction-tuning mixtures need to be adjusted for diffusion models (we just used Tulu 2/3 off the shelf).