I work on AI, Language, Music, and Neuroscience.

They show that LLMs optimize for statistical compression, while humans optimize for adaptive richness (preserving flexibility and context). They also find that smaller models outperformed larger models at forming conceptual categories.

#neuroAI

arxiv.org/abs/2505.17117

New preprint with a former undergrad, Yue Wan.

I'm not totally sure how to talk about these results. They're counterintuitive on the surface, seem somewhat obvious in hindsight, but then there's more to them when you dig deeper.

Linguistic typology, data science, spatial analysis, phylogenetic methods, potential fieldwork... REPOST 🙏

www.comparativelinguistics.uzh.ch/en/jobs.html

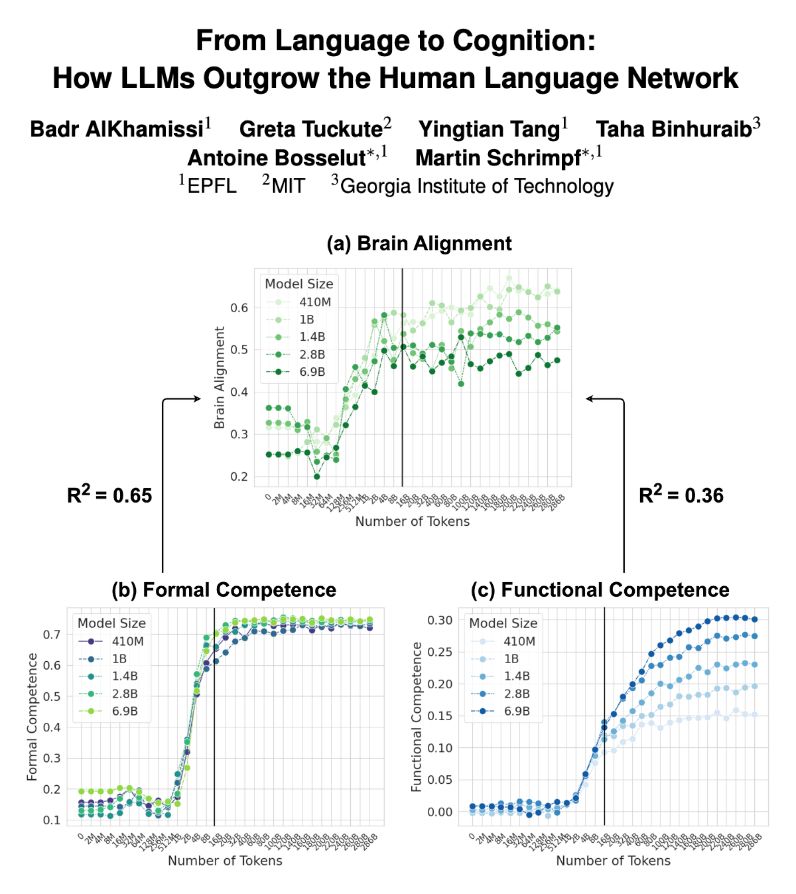

LLMs trained on next-word prediction (NWP) show high alignment with brain recordings. But what drives this alignment—linguistic structure or world knowledge? And how does this alignment evolve during training? Our new paper explores these questions. 👇🧵

#neuroskyence

www.thetransmitter.org/neuroai/neur...

With commentary from several wonderful researchers!

🧠📈 #NeuroAI 🧪

www.thetransmitter.org/neuroai/acce...

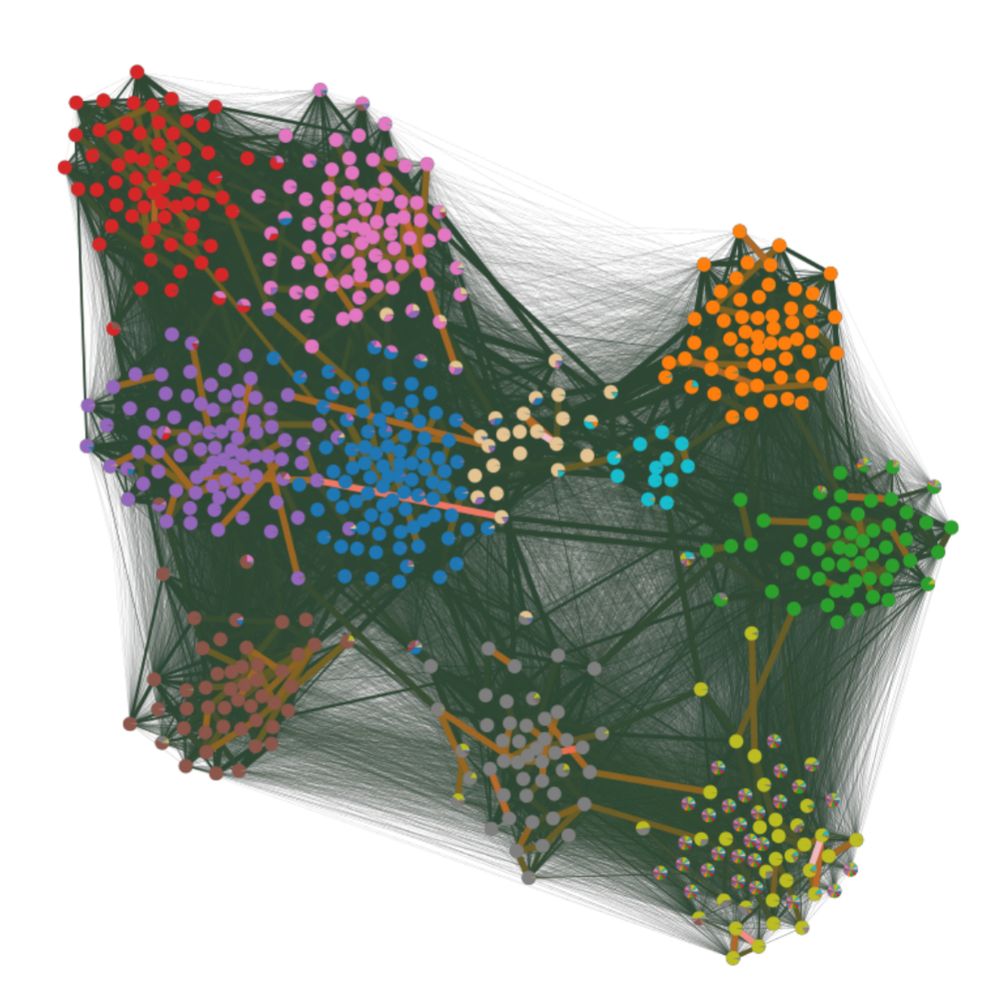

“Uncertainty quantification and posterior sampling for network reconstruction”

TL;DR: We present an efficient method to sample the entire ensemble of possible network reconstructions that are compatible with an indirect observation, e.g., observed dynamics.

Short thread: 1/N

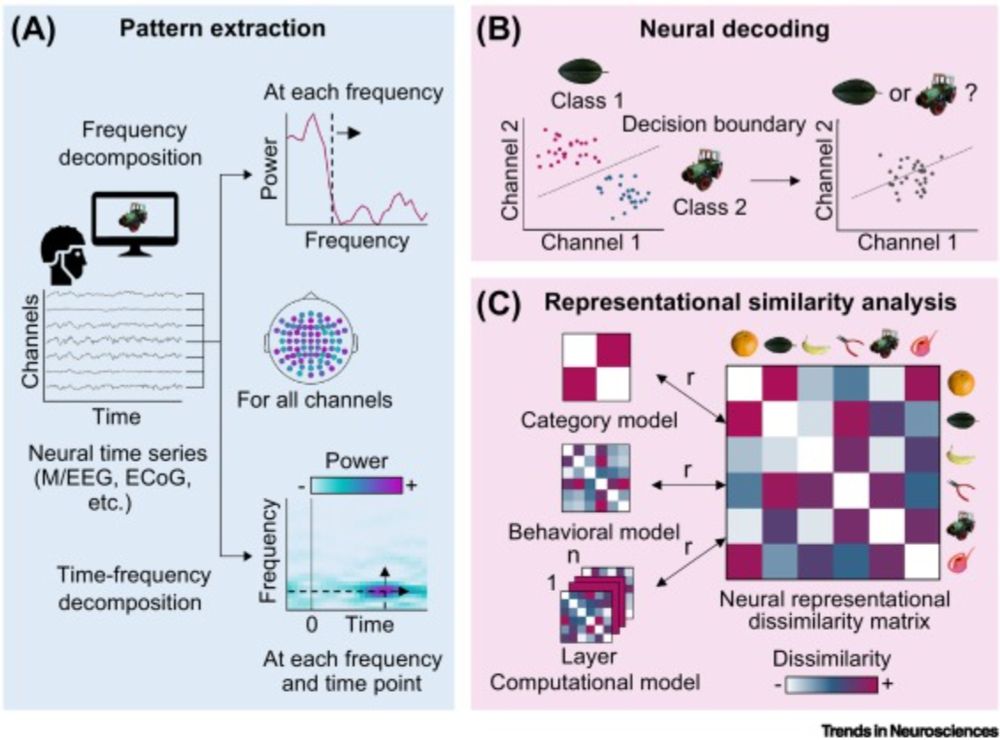

www.cell.com/trends/neuro...

#neuroscience

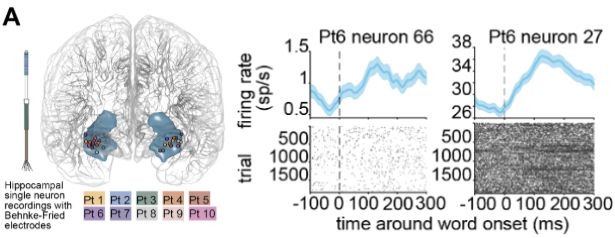

www.biorxiv.org/content/10.1...