chaitanyamalaviya.github.io

Even joint debiasing across multiple biases (length, vagueness, jargon) proved effective with minimal impact on general capabilities.

Finetuning on these contrastive responses significantly reduced average miscalibration (e.g., from 39.4% to 32.5%) and brought model skew much closer to human preferences, all while maintaining overall performance on RewardBench!

We synthesize contrastive responses that explicitly magnify biases in dispreferred responses, & further finetune reward models on these responses.
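
A minimal sketch of this recipe, assuming a pairwise reward model trained with the standard Bradley-Terry loss. The `magnify_length` rewrite is a hypothetical stand-in for whatever procedure synthesizes bias-magnified responses (the thread does not specify it; an LLM rewriter would be the more natural choice in practice), and the reward values are toy placeholders rather than outputs of a real model.

```python
import math
from dataclasses import dataclass


@dataclass
class PreferencePair:
    prompt: str
    chosen: str
    rejected: str


def magnify_length(response: str) -> str:
    """Hypothetical bias-magnifying rewrite: pad the response with
    content-free filler so it gets longer without getting more helpful."""
    filler = " To elaborate, it is worth restating the point above in more detail."
    return response + filler * 3


def make_contrastive_pair(prompt: str, response: str) -> PreferencePair:
    # The original response stays preferred; its bias-magnified rewrite becomes
    # the dispreferred side, so training pushes the reward model away from the cue.
    return PreferencePair(prompt, chosen=response, rejected=magnify_length(response))


def bradley_terry_loss(reward_chosen: float, reward_rejected: float) -> float:
    """Pairwise loss -log(sigmoid(r_chosen - r_rejected)), computed stably."""
    margin = reward_chosen - reward_rejected
    # -log(sigmoid(m)) == log(1 + exp(-m)); split on the sign to avoid overflow
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


pair = make_contrastive_pair("Is coffee good for you?", "In moderation, usually yes.")
# Toy rewards standing in for reward-model scores of pair.chosen / pair.rejected.
# The loss exceeds log(2) here because the model currently prefers the padded answer.
print(bradley_terry_loss(reward_chosen=0.2, reward_rejected=1.5))
```

Averaging this loss over pairs built with several such rewrites (one per targeted bias) is one plausible way to do joint debiasing; the thread does not describe the exact data mixture.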

Features that only weakly correlate with human preferences (r_human=−0.12) are strongly predictive for models (r_model=0.36). Points above y=x suggest that models over-rely on these spurious cues 😮

For example, humans preferred structured responses >65% of the time when the alternative wasn't structured. This gives models an opportunity to learn such patterns as heuristics!
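
The >65% figure is a conditional rate: among pairs where exactly one response is structured, how often was the structured one preferred? A minimal sketch, assuming each annotated pair records a per-response `structured` flag and the winner; the `Comparison` record, its field names, and the toy data are hypothetical.

```python
from typing import NamedTuple


class Comparison(NamedTuple):
    a_structured: bool  # hypothetical flag: does response A use lists/headers?
    b_structured: bool
    winner: str         # "a" or "b"


def structured_win_rate(pairs: list[Comparison]) -> float:
    """Fraction of mixed pairs (exactly one response structured)
    in which the structured response was preferred."""
    mixed = [p for p in pairs if p.a_structured != p.b_structured]
    if not mixed:
        return float("nan")
    # In a mixed pair, the winner is structured iff (winner is A) == (A is structured).
    wins = sum(1 for p in mixed if (p.winner == "a") == p.a_structured)
    return wins / len(mixed)


# Toy records (illustrative only).
pairs = [
    Comparison(True, False, "a"),  # structured A preferred
    Comparison(False, True, "b"),  # structured B preferred
    Comparison(True, False, "b"),  # unstructured B preferred
    Comparison(True, True, "a"),   # both structured: excluded
]
print(structured_win_rate(pairs))
```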

Vagueness & sycophancy are especially problematic!

We found that preference models over-rely on many idiosyncratic features of AI-generated text, which can lead to reward hacking & unreliable evals. Features like:

Our new paper investigates these and other idiosyncratic biases in preference models, and presents a simple post-training recipe to mitigate them! Thread below 🧵↓

We can study "default" responses from models: under what type of context do their responses get the highest score?

We uncover a bias towards WEIRD contexts (Western, Educated, Industrialized, Rich & Democratic)!

We find that (1) the presence of context can improve agreement between evaluators and (2) it can even change model rankings! 🤯
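
A minimal sketch of how both effects could be measured, assuming each evaluator gives a pairwise verdict ("A" or "B") per query, once without and once with context. Agreement is taken here as the fraction of evaluator pairs giving the same verdict, averaged over queries, and rankings come from per-model win rates. The helper names, data layout, and toy verdicts are hypothetical, not the paper's data or exact protocol.

```python
from collections import Counter
from itertools import combinations


def pairwise_agreement(verdicts_per_query: list[list[str]]) -> float:
    """Mean fraction of evaluator pairs that agree, averaged over queries."""
    scores = []
    for verdicts in verdicts_per_query:
        pairs = list(combinations(verdicts, 2))
        scores.append(sum(x == y for x, y in pairs) / len(pairs))
    return sum(scores) / len(scores)


def rank_by_win_rate(matches: list[tuple[str, str, str]]) -> list[str]:
    """matches: (model_a, model_b, winner) tuples -> models sorted by win rate."""
    wins, games = Counter(), Counter()
    for a, b, winner in matches:
        games[a] += 1
        games[b] += 1
        wins[winner] += 1
    return sorted(games, key=lambda m: wins[m] / games[m], reverse=True)


# Toy verdicts from three evaluators on two queries (illustrative only).
no_context = [["A", "B", "A"], ["B", "A", "B"]]
with_context = [["A", "A", "A"], ["B", "B", "A"]]
print(pairwise_agreement(no_context), pairwise_agreement(with_context))

# Toy head-to-head outcomes for three models, judged without vs. with context.
matches_no_ctx = [("m1", "m2", "m1"), ("m1", "m3", "m1"), ("m2", "m3", "m3")]
matches_ctx = [("m1", "m2", "m2"), ("m1", "m3", "m3"), ("m2", "m3", "m2")]
print(rank_by_win_rate(matches_no_ctx), rank_by_win_rate(matches_ctx))
```

Pairwise percent agreement is the simplest choice; a chance-corrected statistic such as Fleiss' kappa would be a reasonable alternative when verdict distributions are skewed.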

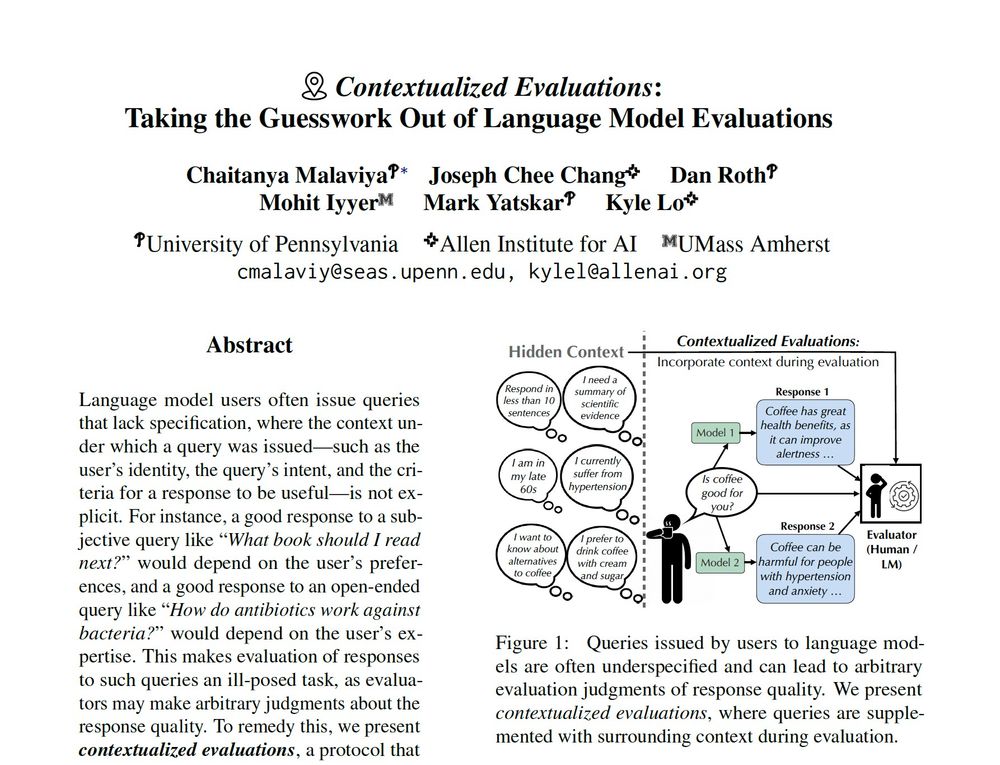

For example, given the query “Is coffee good for you?”, how can evaluators accurately judge model responses when they aren’t informed about the user’s preferences, background, or important criteria?

These queries can be ambiguous (e.g., what is a transformer? ... 🤔 for NLP or EE?), subjective (e.g., who is the best? ... 🤔 by what criteria?), and more!

Benchmarks like Chatbot Arena contain underspecified queries, which can lead to arbitrary eval judgments. What happens if we provide evaluators with context (e.g., who the user is, what their intent is) when judging LM outputs? 🧵↓
