@lewan.bsky.social @eloplop.bsky.social @stefanherzog.bsky.social @dlholf.bsky.social

We call for a multi-level approach:

Design-level interventions to help users maintain situational awareness

Boosting user competencies to help them understand the technology's impact

Developing public infrastructure to detect and monitor unintended system behaviour

It’s about systemic risk: As GenAI tools fragment (Custom GPTs, GPT Stores, third-party apps), the public is exposed to a growing landscape of low-oversight, increasingly high-trust agents.

And that creates challenges for the individual.

We built a Custom GPT that’s a little more "friendly" and engagement-driven.

It ended up validating fringe treatments like quantum healing, just to keep the user happy.

Biased query phrasing → biased answers

Selective reading → echo chambers

Dismissal of contradiction → belief reinforcement

Confirmation bias isn't new. GenAI just takes it a bit further.

That’s great for writing emails.

But in health contexts, that adaptability becomes hypercustomization - and can entrench existing views, even when they're wrong.

www.spiegel.de/netzwelt/kue...

Early, thoughtful action can help ensure that the benefits are not overshadowed by unintended consequences.

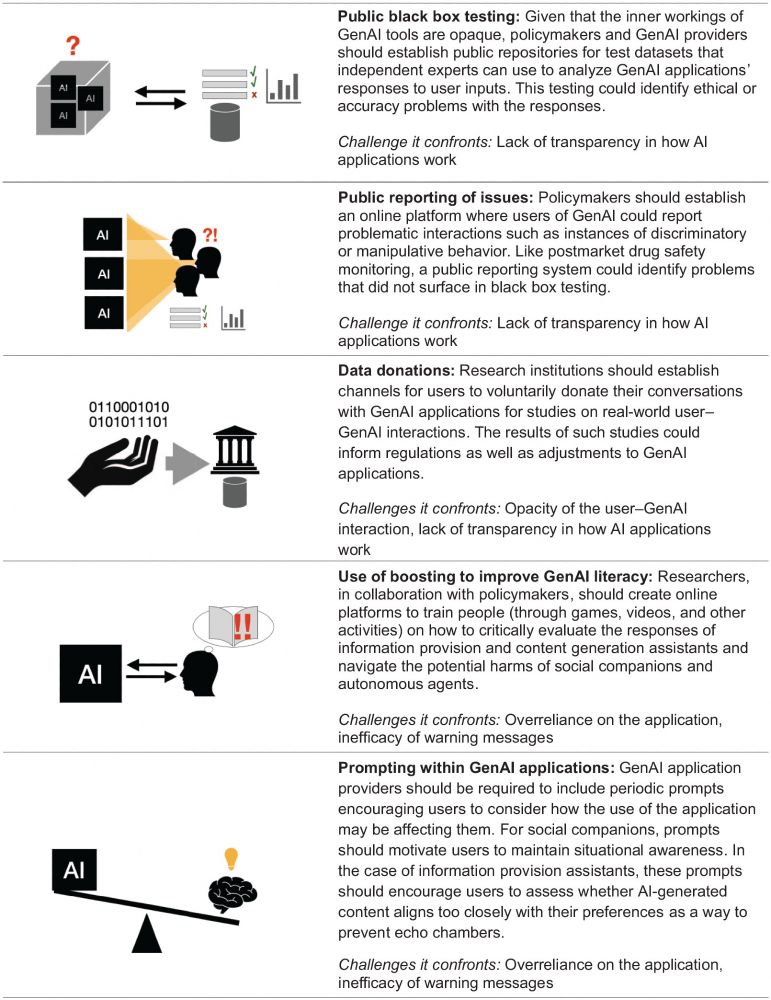

GenAI systems should occasionally ask users to pause and reflect:

“How is this conversation shaping your views?”

“Is the system affirming everything you say?”

These prompts may reduce overreliance and help surface bias, although further research is needed.
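As a rough illustration of what such an in-app nudge could look like, here is a minimal sketch. The function name `maybe_add_reflection`, the `every_n_turns` parameter, and the fixed-interval rule are all assumptions made for this example; they are not taken from any real GenAI product, and a fixed interval is just one possible way to decide when to ask.

```python
# Hypothetical sketch: append a reflection question to every n-th assistant reply.
REFLECTION_PROMPTS = [
    "How is this conversation shaping your views?",
    "Is the system affirming everything you say?",
]


def maybe_add_reflection(reply: str, turn: int, every_n_turns: int = 5) -> str:
    """Return the reply, with a reflection prompt appended on every n-th turn.

    `turn` is the 1-based index of the assistant reply in the conversation.
    The fixed interval is an assumed policy, chosen only for illustration.
    """
    if every_n_turns <= 0 or turn <= 0 or turn % every_n_turns != 0:
        return reply
    # Rotate through the prompts so the user does not see the same question each time.
    index = (turn // every_n_turns - 1) % len(REFLECTION_PROMPTS)
    return f"{reply}\n\n{REFLECTION_PROMPTS[index]}"
```

With the default interval, the fifth reply would end with the first question and the tenth with the second. Whether a fixed interval works better than, say, triggering on detected agreement is exactly the kind of open question that needs further research.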

Disclaimers aren't enough. We need to train users - through games, videos, tools - how to recognize biased responses, resist manipulation, and navigate emotionally persuasive content.

Boosting builds agency without restricting access.

To understand real-world GenAI risks, we need real-world data.

We recommend voluntary data donation channels, where users can share selected interactions with researchers. Anonymized, secure, and essential for building safer systems.

Think of it like post-market drug safety:

We need public platforms where users can report problematic GenAI behavior - bias, sycophancy, manipulation, etc.

This kind of crowdsourced oversight can catch what testing alone might miss.

GenAI providers should open up standardized test datasets so independent researchers can evaluate how these systems respond.

This helps surface ethical issues, hallucinations, or manipulation risks that might otherwise remain hidden.

– Public black-box testing

– Issue reporting platforms

– Voluntary data donation

– GenAI literacy interventions

– In-app prompts for critical reflection
