#BionicVision #Blindness #NeuroTech #VisionScience #CompNeuro #NeuroAI

PSTR450.20

Nov 19 at 1:00 PM

www.abstractsonline.com/pp8/#!/21171...

#SfN25 #VisionScience #NeuroTechnology

PSTR341.13

Nov 18 at 1:00 PM

www.abstractsonline.com/pp8/#!/21171...

#SfN25 #VisionScience #Neuroscience

PSTR154.08

Nov 17 at 8:00 AM

www.abstractsonline.com/pp8/#!/21171...

#SfN25 #VisionScience #NeuroTechnology

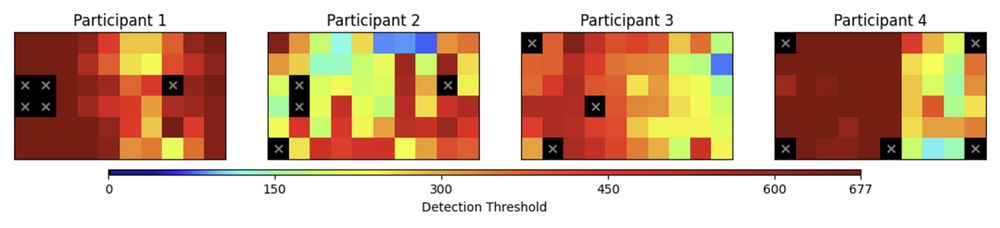

✅ GPR + spatial sampling = fewer trials, same accuracy

🔁 Toward faster, personalized calibration

🔗 bionicvisionlab.org/publications...

#EMBC2025

bionicvisionlab.org/publications...

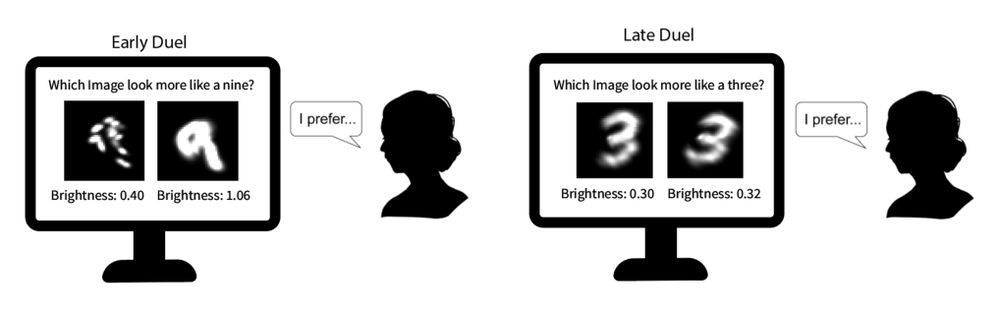

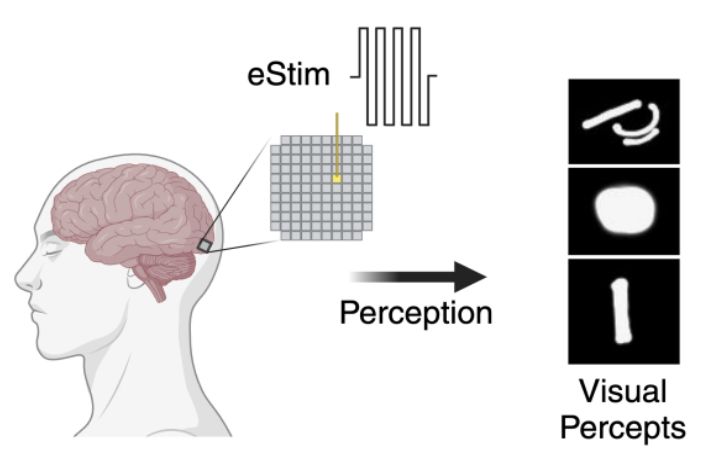

🧠 Human-in-the-loop optimization (HILO) works in silico—but does it hold up with real people?

✅ HILO outperformed naïve and deep encoders

🔁 A step toward personalized #BionicVision

#EMBC2025

bionicvisionlab.org/publications...

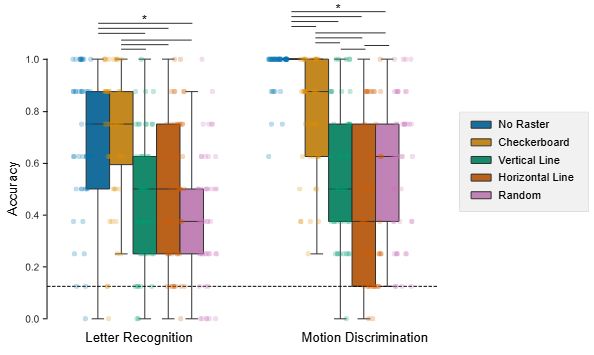

✔️ works across tasks

✔️ requires no fancy calibration

✔️ is hardware-agnostic

A low-cost, high-impact tweak that could make future visual prostheses more usable and more intuitive.

#BionicVision #BCI #NeuroTech

💡 Why? More spatial separation between activations = less perceptual interference.

It even matched performance of the ideal “no raster” condition, without breaking safety rules.

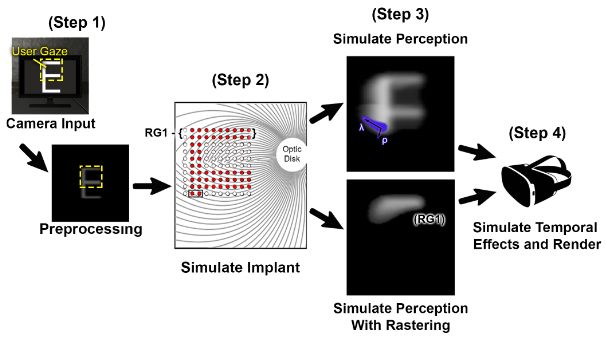

🧪 Powered by BionicVisionXR.

📐 Modeled 100-electrode Argus-like array.

👀 Realistic phosphene appearance, eye/head tracking.

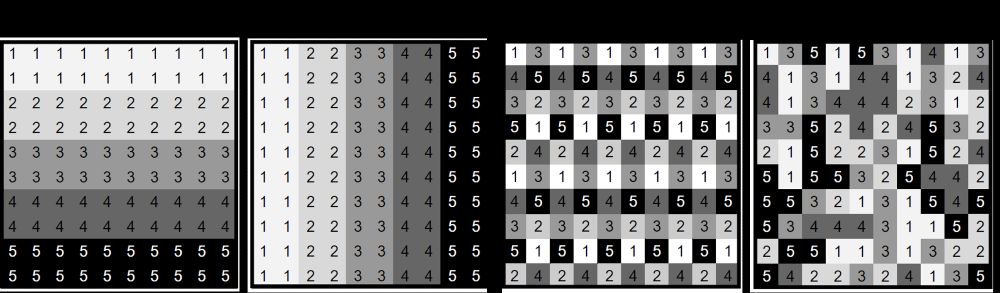

But is it actually better, or just tradition?

No one had rigorously tested how these patterns impact perception in visual prostheses.

So we did.

Tue, 2:45 - 6:45pm, Pavilion: Poster #56.472

www.visionsciences.org/presentation...

👁️🧪 #XR #VirtualReality #Unity3D #VSS2025

🕥 Sun 10:45pm · Talk Room 1

🧠 www.visionsciences.org/presentation...
