https://www.rpisoni.dev/

Read it here :): docs.google.com/document/d/1...

Is academic publishing pay to win yet?

I tried replacing dot-product attention with the negative squared KQ-distance and was able to remove the softmax without any issues or loss in performance!
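
A minimal sketch of the score function only, in plain Python with made-up toy values. All helper names (`neg_sq_distance_scores`, `key_bias_scores`, etc.) are hypothetical and not taken from the post. It uses the identity -||q - k||^2 = 2 q·k - ||q||^2 - ||k||^2 to show that, if a softmax were still applied, the distance score would equal a dot-product score with an extra per-key bias, because the -||q||^2 term is constant along each row. How the softmax itself was removed isn't spelled out in this post, so that part is not sketched.

```python
import math

def dot(u, v):
    """Plain dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))

def neg_sq_distance_scores(Q, K):
    """S[i][j] = -||q_i - k_j||^2, computed directly."""
    return [[-sum((a - b) ** 2 for a, b in zip(q, k)) for k in K] for q in Q]

def expanded_scores(Q, K):
    """Same scores via -||q - k||^2 = 2 q.k - ||q||^2 - ||k||^2."""
    return [[2 * dot(q, k) - dot(q, q) - dot(k, k) for k in K] for q in Q]

def key_bias_scores(Q, K):
    """Drop the -||q||^2 term, which is constant along each row of S."""
    return [[2 * dot(q, k) - dot(k, k) for k in K] for q in Q]

def softmax(row):
    """Numerically stable softmax over one row of scores."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

# Toy queries and keys (illustrative values only).
Q = [[1.0, 0.0], [0.5, -1.0]]
K = [[0.0, 1.0], [1.0, 1.0], [-1.0, 0.5]]

for s_dist, s_bias in zip(neg_sq_distance_scores(Q, K), key_bias_scores(Q, K)):
    # The two attention rows agree up to floating-point rounding.
    print(softmax(s_dist), softmax(s_bias))
```

One visible difference from plain dot-product attention: keys with a large norm are penalized by the -||k||^2 term instead of being able to dominate through their magnitude.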

Read more in our blog post and on the EurIPS website:

blog.neurips.cc/2025/07/16/n...

eurips.cc

Thinking about a setup where, within a specific activation range (e.g., right before a ReLU), you'd only permit positive or negative gradients.
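
A rough sketch of how such a rule could look, assuming "gradients" means the gradient of the loss with respect to the pre-activations and that the range is a closed interval [low, high]. The function `constrain_gradient` and its parameters are hypothetical. In PyTorch, a rule like this could be attached to a pre-activation tensor with `Tensor.register_hook`, whose hook may return a modified gradient.

```python
def constrain_gradient(pre_activations, grads, low, high, allow="positive"):
    """Zero out gradient entries of the disallowed sign, but only where the
    matching pre-activation lies in [low, high]; elsewhere pass them through."""
    if allow not in ("positive", "negative"):
        raise ValueError("allow must be 'positive' or 'negative'")
    out = []
    for z, g in zip(pre_activations, grads):
        in_range = low <= z <= high
        if in_range and allow == "positive" and g < 0:
            g = 0.0  # block negative gradients inside the range
        elif in_range and allow == "negative" and g > 0:
            g = 0.0  # block positive gradients inside the range
        out.append(g)
    return out

# Pre-activations just before a ReLU and the upstream gradients w.r.t. them.
z = [-1.0, 0.5, 2.0]
dz = [-0.3, -0.2, -0.1]
print(constrain_gradient(z, dz, low=0.0, high=1.0, allow="positive"))
# → [-0.3, 0.0, -0.1]
```

Only the middle entry is affected: its pre-activation falls inside [0, 1] and its gradient has the blocked sign.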

This is the type of long-term planning that Seldonian predictions can help improve.

Would be happy to meet some people there, so if you're at ICLR as well and want to hang out, feel free to PM me!🙂

What's driving performance: architecture or data?

To find out, we pretrained ModernBERT on the same dataset as CamemBERTaV2 (a DeBERTaV3 model) to isolate architecture effects.

Here are our findings:

Anything wrong/missing?

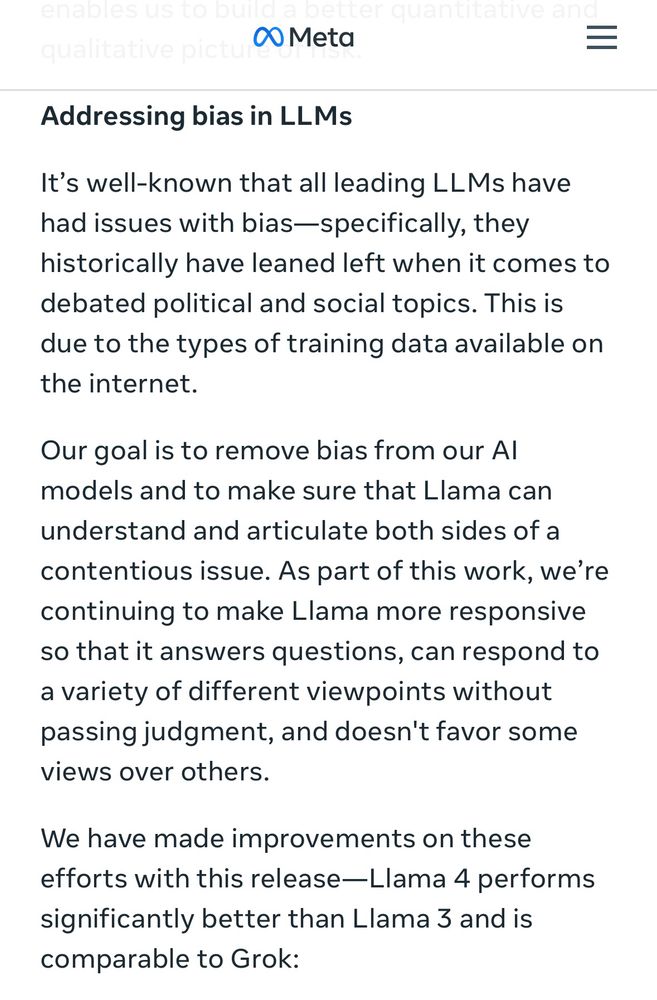

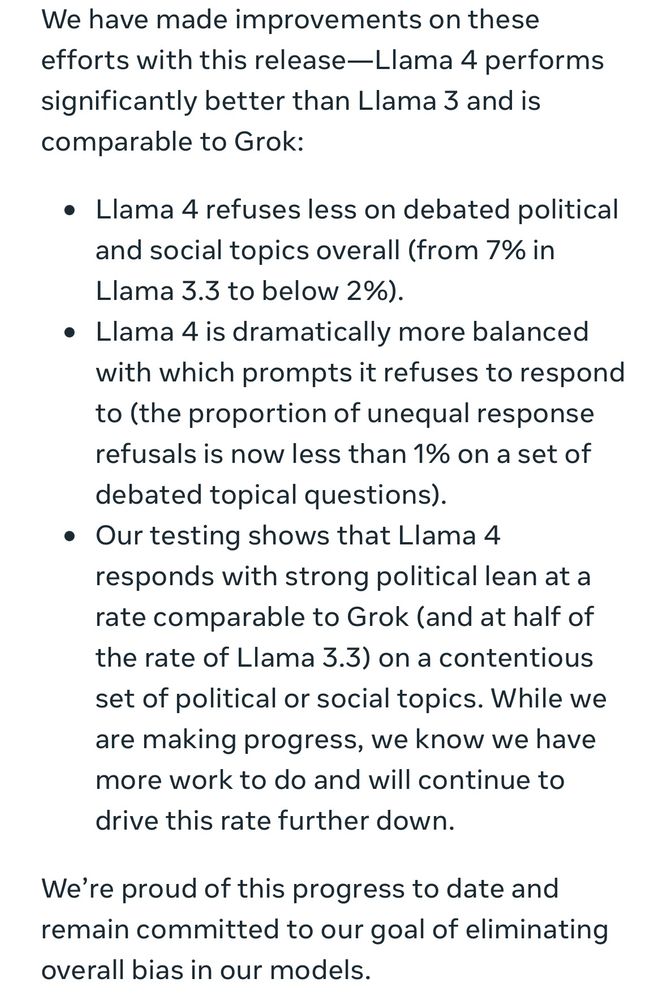

“[LLMs] historically have leaned left when it comes to debated political and social topics.”

ai.meta.com/blog/llama-4...

@csateth.bsky.social @ethzurich.bsky.social

This is the first fruit of our joint work. We quantify space folding in ReLU neural networks with a range-based measure. Lots of fun to write and read!😉

Michal Lewandowski, Hamid Eghbalzadeh, Bernhard Heinzl, Raphael Pisoni, Bernhard A. Moser

Action editor: Petar Veličković

https://openreview.net/forum?id=RfFqBXLDQk

#cantornet #similarity #relu

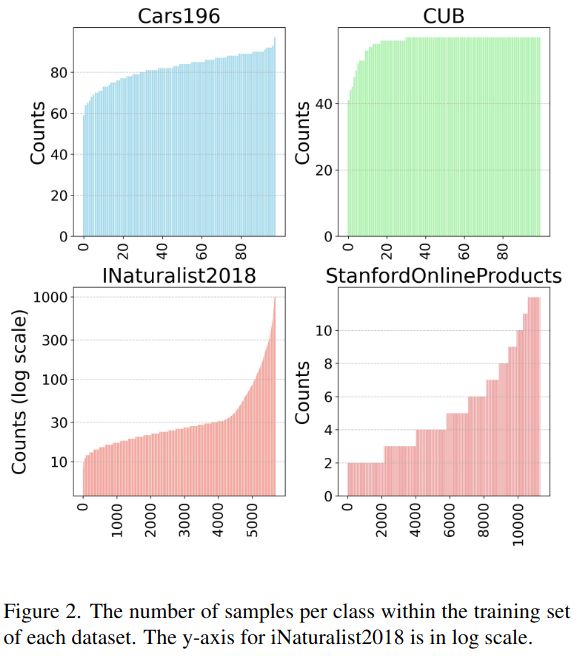
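
To give a feel for what "space folding" means, here is a toy probe in plain Python. It is an illustrative assumption and not the paper's range-based measure (see the OpenReview link for the actual definition). It walks along a straight line in input space, records which ReLUs are on, and tracks the Hamming distance to the starting activation pattern. If that distance rises and then falls again, distant inputs end up in similar regions, i.e. the network has folded the line. The tiny network and every function name are hypothetical.

```python
def relu(x):
    return max(0.0, x)

def toy_pattern(x):
    """Activation pattern of a hypothetical 2-layer ReLU net on a 1-D input.
    Layer 1: h1 = relu(x), h2 = relu(-x)  (together they compute |x|).
    Layer 2: g = relu(h1 + h2 - 1), i.e. relu(|x| - 1)."""
    z1, z2 = x, -x
    z3 = relu(z1) + relu(z2) - 1.0
    return (z1 > 0, z2 > 0, z3 > 0)

def hamming(p, q):
    """Number of neurons whose on/off state differs between two patterns."""
    return sum(a != b for a, b in zip(p, q))

def fold_score(pattern_fn, start, end, steps):
    """Walk a straight line from start to end and compare the final pattern
    distance with the largest distance seen along the way. 0 means the walk
    never came back toward the start pattern; 1 means it ended on it."""
    xs = [start + i * (end - start) / (steps - 1) for i in range(steps)]
    first = pattern_fn(xs[0])
    dists = [hamming(first, pattern_fn(x)) for x in xs]
    peak = max(dists)
    return 0.0 if peak == 0 else 1.0 - dists[-1] / peak

print(fold_score(toy_pattern, -2.0, 2.0, 9))             # about 0.33: the line folds back
print(fold_score(lambda x: (x > 0,), -2.0, 2.0, 9))      # 0.0: a single ReLU never folds
```

Along this path the distance to the start pattern peaks at 3 near the origin and ends at 2, because the second-layer unit switches back on once |x| > 1.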

Curious about image retrieval and contrastive learning? We present:

📄 "All You Need to Know About Training Image Retrieval Models"

🔍 The most comprehensive retrieval benchmark—thousands of experiments across 4 datasets, dozens of losses, batch sizes, LRs, data labeling, and more!
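
For readers new to contrastive retrieval training, a minimal plain-Python sketch of InfoNCE with in-batch negatives. This is just one common loss among the many such a benchmark compares; it is not presented as the paper's recommended setup, and the function names are hypothetical. Every other positive in the batch serves as a negative for a given anchor, which is one reason batch size matters in contrastive training.

```python
import math

def l2_normalize(v):
    """Scale a vector to unit length (cosine-similarity embeddings)."""
    n = math.sqrt(sum(x * x for x in v))
    return [x / n for x in v]

def info_nce(anchors, positives, temperature=0.1):
    """Mean InfoNCE loss: anchor i should score highest against positive i,
    with the other positives in the batch acting as negatives."""
    a = [l2_normalize(v) for v in anchors]
    p = [l2_normalize(v) for v in positives]
    total = 0.0
    for i, ai in enumerate(a):
        logits = [sum(x * y for x, y in zip(ai, pj)) / temperature for pj in p]
        m = max(logits)  # log-sum-exp trick for numerical stability
        log_denom = m + math.log(sum(math.exp(l - m) for l in logits))
        total += log_denom - logits[i]  # -log softmax(logits)[i]
    return total / len(a)

same = [[1.0, 0.0]] * 4
print(info_nce(same, same))  # close to log(4): all candidates look identical
aligned = [[1.0, 0.0], [0.0, 1.0]]
swapped = [[0.0, 1.0], [1.0, 0.0]]
print(info_nce(aligned, aligned) < info_nce(aligned, swapped))  # True
```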