arxiv.org/abs/2507.02103

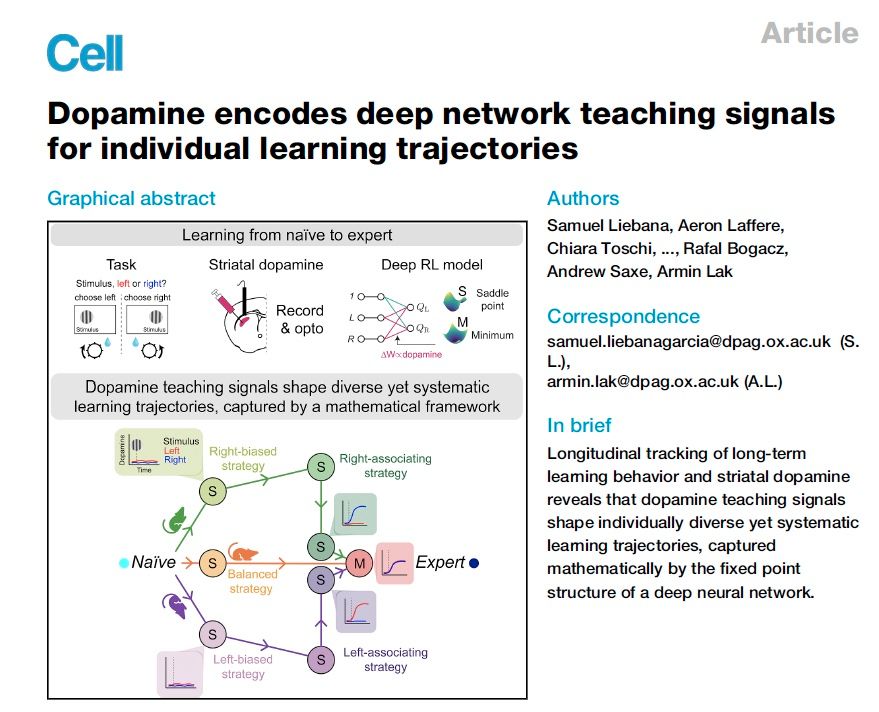

We relate this to non-stationary rule learning tasks with rapid performance jumps.

Feedback welcome!

@ryanpaulbadman1.bsky.social & Riley Simmons-Edler show–through cog sci, neuro & ethology–how an AI agent with fewer ‘neurons’ than an insect can forage, find safety & dodge predators in a virtual world. Here's what we built

Preprint: arxiv.org/pdf/2506.06981

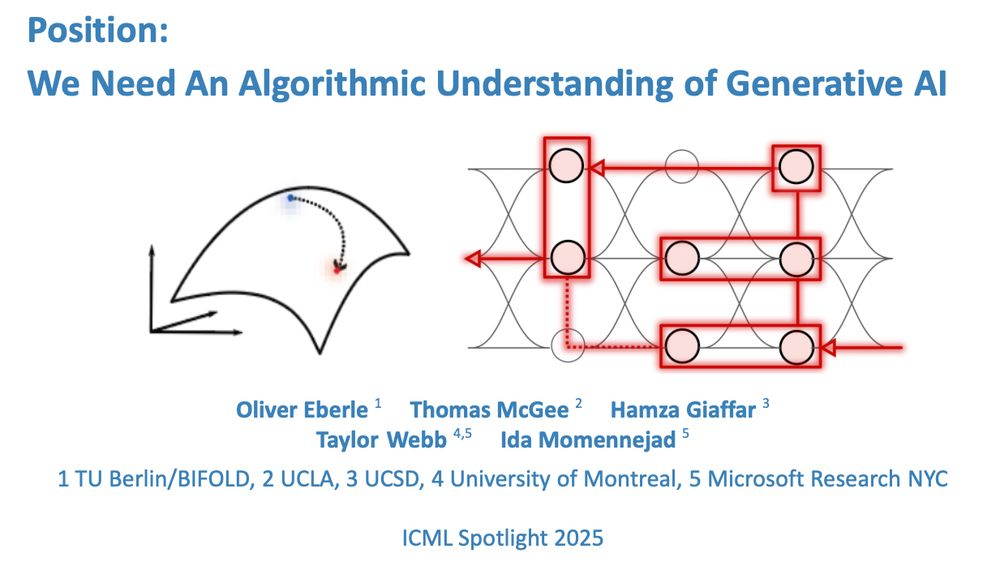

Position: We Need An Algorithmic Understanding of Generative AI

What algorithms do LLMs actually learn and use to solve problems?🧵1/n

openreview.net/forum?id=eax...

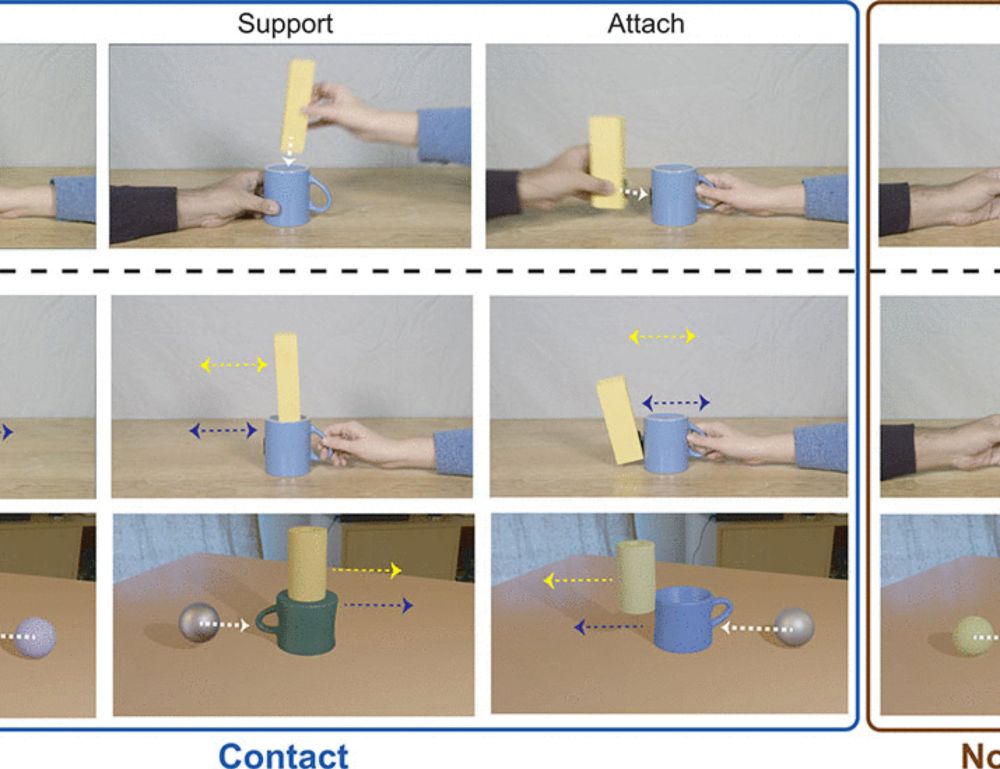

www.science.org/doi/full/10....

(1/n)

Grateful for this careful & honest investigation.

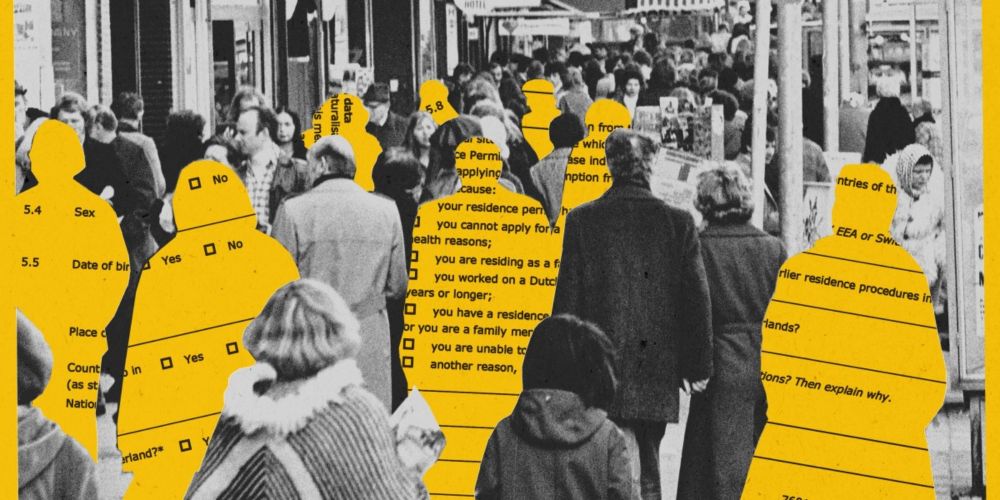

Amsterdam believed that it could build a #predictiveAI for welfare fraud that would ALSO be fair, unbiased, & a positive case study for #ResponsibleAI. It didn't work.

Our deep dive why: www.technologyreview.com/2025/06/11/1...

"Deep RL Needs Deep Behavior Analysis: Exploring Implicit Planning by Model-Free Agents in Open-Ended Environments"

Sophisticated & sometimes insect-like planning, exploration, predator evasion, and foraging strategies by DRL.

arxiv.org/abs/2506.06981

www.nature.com/articles/s41...

www.biorxiv.org/content/10.1...
