✅ efficient training

✅ expressive modeling

✅ inference-time scaling

paper: arxiv.org/abs/2505.22866

code: github.com/nico-espinosadice/SORL

w/ Yiyi Zhang, Yiding Chen, Bradley Guo, Owen Oertell, @gokul.dev, @xkianteb.bsky.social, Wen Sun

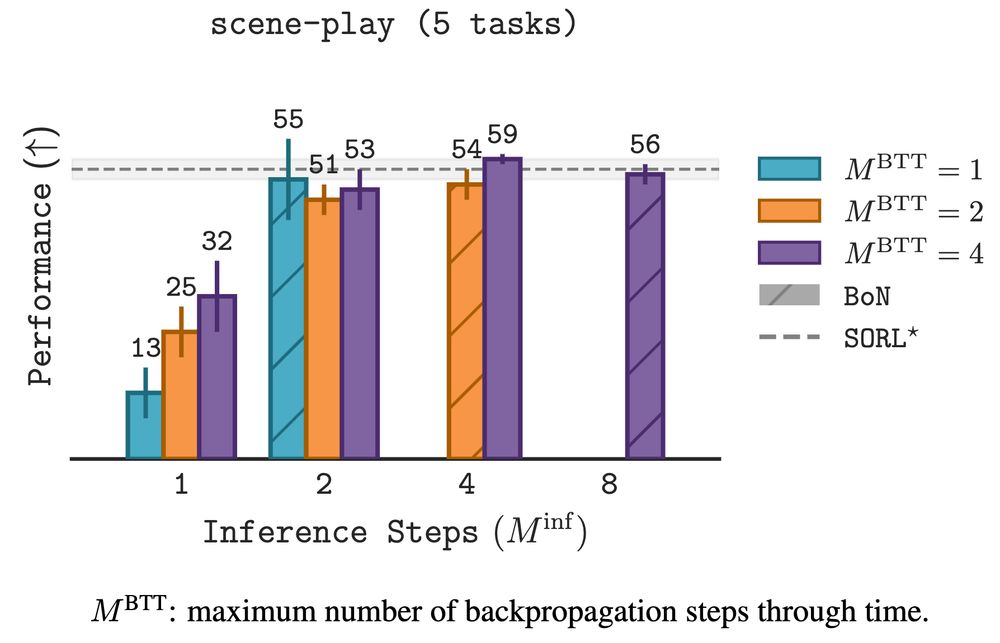

1. *scale expressivity* by fitting complex, multi-modal distributions

2. *scale training* by limiting the amount of backprop through time

3. *scale inference* through sequential and parallel scaling

pc: @Kevin Frans

paper: arxiv.org/abs/2505.22866

code: github.com/nico-espinosadice/SORL

w/ Yiyi Zhang, Yiding Chen, Bradley Guo, Owen Oertell, @gokul.dev, @xkianteb.bsky.social, Wen Sun
