https://www.goethe-university-frankfurt.de/115948107/Maximilian_Weber

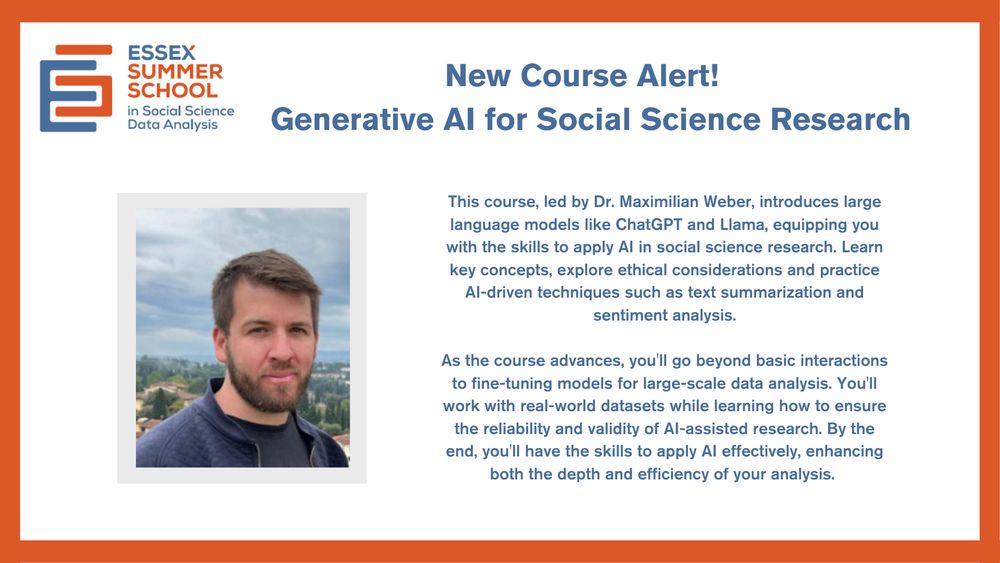

Generative AI for Social Science Research

This course explores how to apply AI in social science research, from basic interactions to fine-tuning models for large-scale data analysis.

Find out more & apply: bit.ly/4l3nnGI

#ESS2025 #GenerativeAI

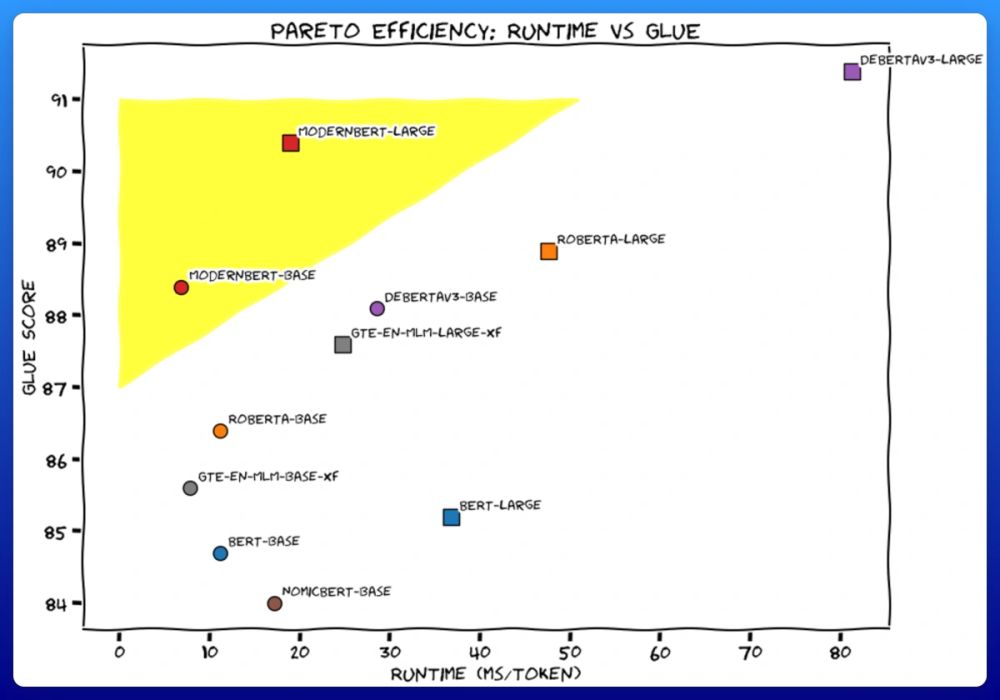

We trained 2 new models. Like BERT, but modern. ModernBERT.

Not some hypey GenAI thing, but a proper workhorse model, for retrieval, classification, etc. Real practical stuff.

It's much faster, more accurate, handles longer context, and is more useful. 🧵

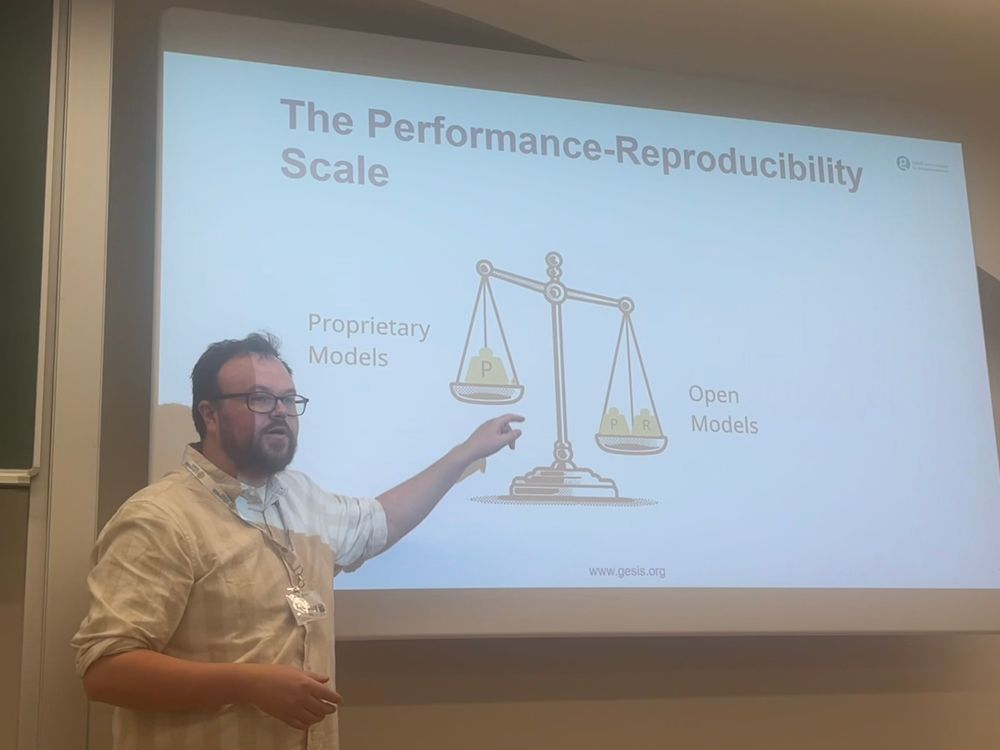

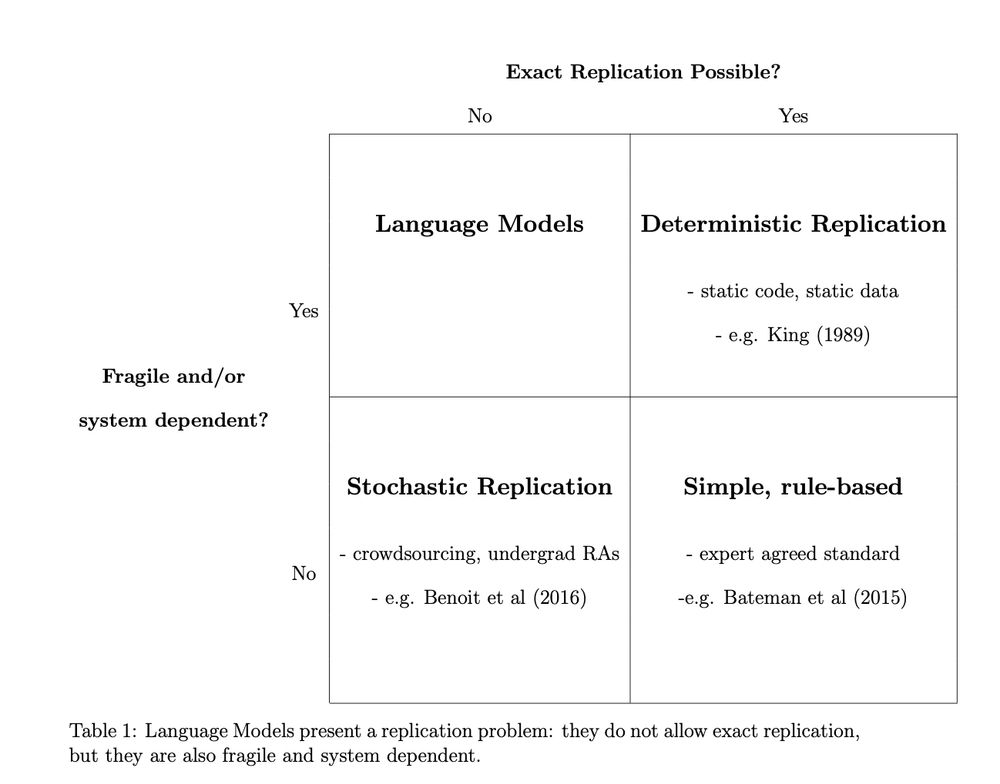

We show:

1. current applications of LMs in political science research *don't* meet basic standards of reproducibility...

@marklutter345.bsky.social and I are looking for contributions presenting new research, discussing CSS methods, giving overviews of applications or ongoing research projects. Please circulate!

www.nber.org/papers/w29724

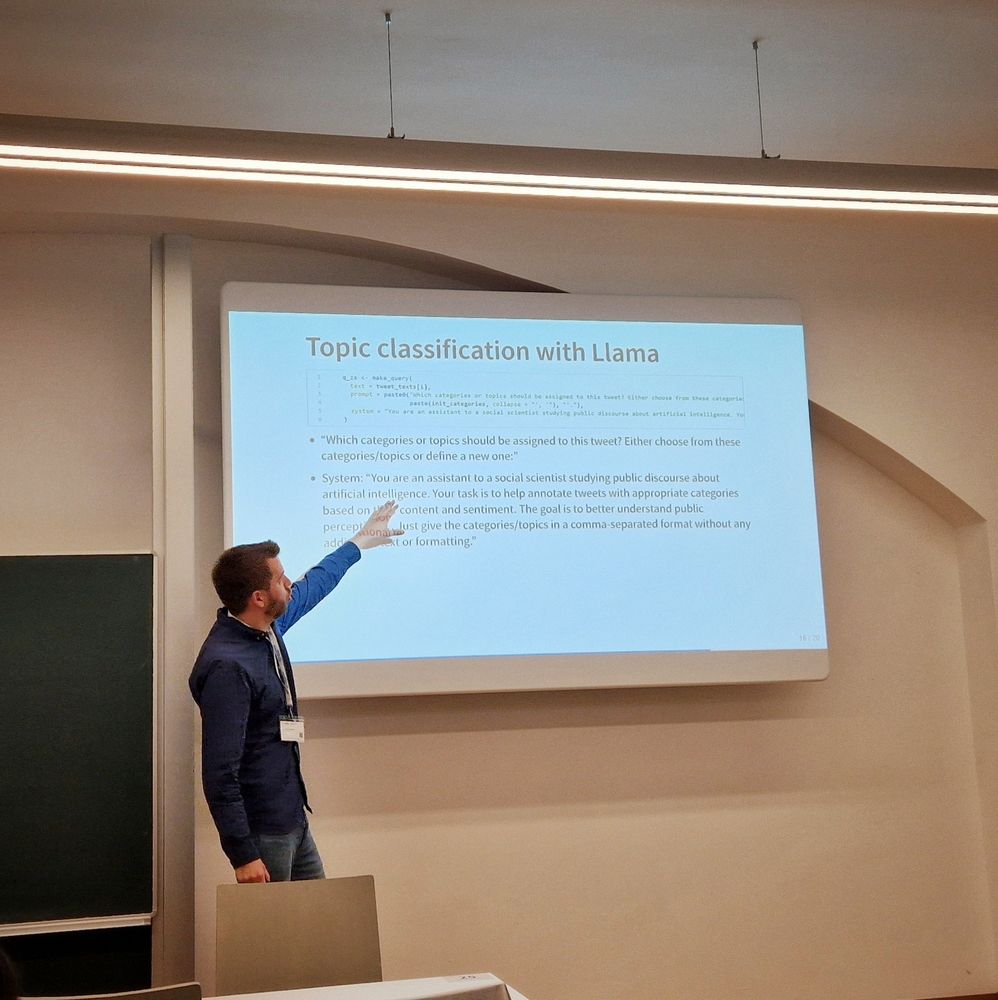

What's new?🧵👇

https://cran.r-project.org/web/packages/rollama/index.html
