“Explain the answer” > “Try again”

Paper: arxiv.org/abs/2507.02834

Joint work with @ruiyang-zhou.bsky.social and Shuozhe Li.

• DPO (preference-based)

• GRPO (verifier-based RL)

→ No architecture changes

→ No expert supervision

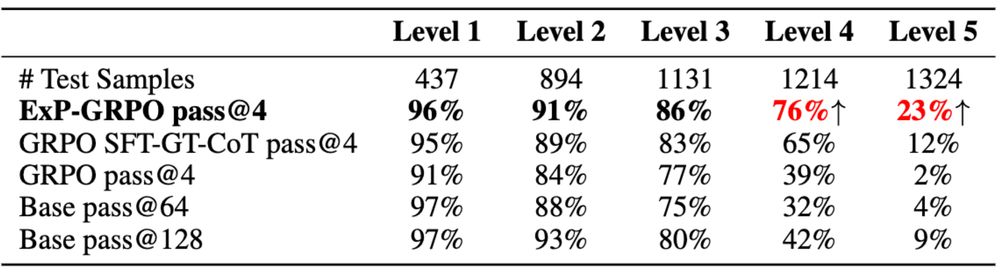

→ Big gains on hard tasks

Results (Qwen2.5-3B-Instruct, MATH level-5):

Ask the model to explain the correct answer — even when it couldn’t solve the problem.

These self-explanations are:

✅ in-distribution

✅ richer than failed CoTs

✅ better guidance than expert-written CoTs

We train on them. We call it ExPO.
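A minimal sketch of how such a training pair could be assembled for the preference-based (DPO) case. The prompt template, the `PreferencePair` container, and the choice to pair the self-explanation against the model's own failed CoT are illustrative assumptions, not details taken from the paper.

```python
from dataclasses import dataclass


@dataclass
class PreferencePair:
    prompt: str
    chosen: str    # self-explanation written with the correct answer in view
    rejected: str  # the model's own failed chain of thought


def explanation_prompt(question: str, correct_answer: str) -> str:
    """Hypothetical template: reveal the answer and ask for the reasoning."""
    return (
        f"Question: {question}\n"
        f"The correct answer is {correct_answer}.\n"
        "Explain step by step why this answer is correct."
    )


def build_pair(question, correct_answer, failed_cot, generate):
    """`generate` is any callable that maps a prompt string to model text."""
    explanation = generate(explanation_prompt(question, correct_answer))
    # The pair is stored against the original question, so the trained
    # model never sees the answer-revealing prompt at inference time.
    return PreferencePair(prompt=question, chosen=explanation, rejected=failed_cot)
```

Because `generate` is the same model being trained, the chosen side stays in-distribution, which is the property the list above highlights.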

NO correct trajectory sampled -> NO learning signal -> Model stays the same, or even unlearns, because of the KL constraint

This happens often in hard reasoning tasks.
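A small sketch of why the signal vanishes under group-normalized advantages of the kind GRPO uses. The `eps` value and the use of the population standard deviation are assumptions made for illustration.

```python
from statistics import mean, pstdev


def group_advantages(rewards, eps=1e-6):
    """Group-normalized advantages: (r - mean) / (std + eps)."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Every sampled trajectory failed the verifier: all advantages are zero.
all_failed = group_advantages([0.0, 0.0, 0.0, 0.0])

# One success in the group is enough to give a non-zero signal.
one_success = group_advantages([0.0, 0.0, 0.0, 1.0])
```

When every advantage is zero, the policy-gradient term contributes nothing and only the KL penalty is left to shape the update.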

Paper: arxiv.org/abs/2506.00653

Joint work with Femi Bello, @anubrata.bsky.social, Fanzhi Zeng, @fcyin.bsky.social

The result? Steering vectors (directions for specific behaviors) from the 2B model successfully guided the 9B model's outputs.

For example, a "dog-saying" steering vector from 2B made 9B talk more about dogs!
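A rough sketch of the idea in plain Python. The difference-of-means recipe for the steering vector and the linear map `W` between the two hidden spaces are assumptions for illustration; the post does not say how the vector was built or carried over.

```python
def mean_vector(rows):
    """Column-wise mean of a list of equal-length activation vectors."""
    n = len(rows)
    return [sum(col) / n for col in zip(*rows)]


def steering_vector(with_behavior, without_behavior):
    """Difference of mean activations, one common way to get a behavior direction."""
    pos = mean_vector(with_behavior)
    neg = mean_vector(without_behavior)
    return [p - q for p, q in zip(pos, neg)]


def map_to_large(vec, W):
    """Apply a hypothetical linear map W (large_dim x small_dim) to a small-model vector."""
    return [sum(w * x for w, x in zip(row, vec)) for row in W]


def steer(hidden, direction, alpha=1.0):
    """Add the scaled direction to one hidden state of the larger model."""
    return [h + alpha * d for h, d in zip(hidden, direction)]
```

In this sketch, `steering_vector` would be run on 2B activations, `map_to_large` would move the result into the 9B hidden space, and `steer` would be applied to 9B hidden states during generation.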

This means representations learned across models are transferable!

Paper: arxiv.org/abs/2410.13828

Check out our work at the NeurIPS AFM workshop, Exhibit Hall A, 12/14, 4:30 - 5:30 pm #NeurIPS2024

- Normalized preference optimization: normalize the chosen and rejected gradients.

- Sparse token masking: impose sparsity on the tokens used to calculate the margins.
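The two ideas above can be sketched in plain Python as follows. This only illustrates the wording of the bullets: how the mask is chosen, the unit-norm rescaling, and both function names are assumptions, not the paper's exact formulation.

```python
import math


def masked_margin(chosen_logps, rejected_logps, chosen_mask, rejected_mask):
    """Margin from per-token log-probs, counting only tokens whose mask is 1."""
    chosen = sum(lp * m for lp, m in zip(chosen_logps, chosen_mask))
    rejected = sum(lp * m for lp, m in zip(rejected_logps, rejected_mask))
    return chosen - rejected


def normalized_update(grad_chosen, grad_rejected, eps=1e-12):
    """Rescale each gradient to unit norm before taking the difference."""
    def unit(g):
        norm = math.sqrt(sum(x * x for x in g))
        return [x / (norm + eps) for x in g]

    return [c - r for c, r in zip(unit(grad_chosen), unit(grad_rejected))]
```

In this sketch, rescaling both gradients keeps either side from dominating the update through sheer magnitude, and the mask limits the margin to a chosen subset of tokens.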

@zacharylipton.bsky.social

Paper: arxiv.org/abs/2402.05133, Code: github.com/HumainLab/Personalized_RLHF

Check out our work at the NeurIPS AFM workshop, Exhibit Hall A, 12/14, 4:30 - 5:30 pm #NeurIPS2024

Paper: arxiv.org/abs/2402.05133, Code: github.com/HumainLab/Pe...

Check out our work at the NeurIPS AFM workshop, Exhibit Hall A, 12/14, 4:30 - 5:30 pm #NeurIPS2024
