Prev: @CornellORIE, @MSFTResearch, @IBMResearch, @uoftmie 🌈

(Joint work with @ellen-v.bsky.social, @hzhai.bsky.social, and @leqiliu.bsky.social)

🔗: arxiv.org/abs/2502.14760

I'll be chatting about this project at the INFORMS Computing Society Conference in the debate room at 3. Come say hi!

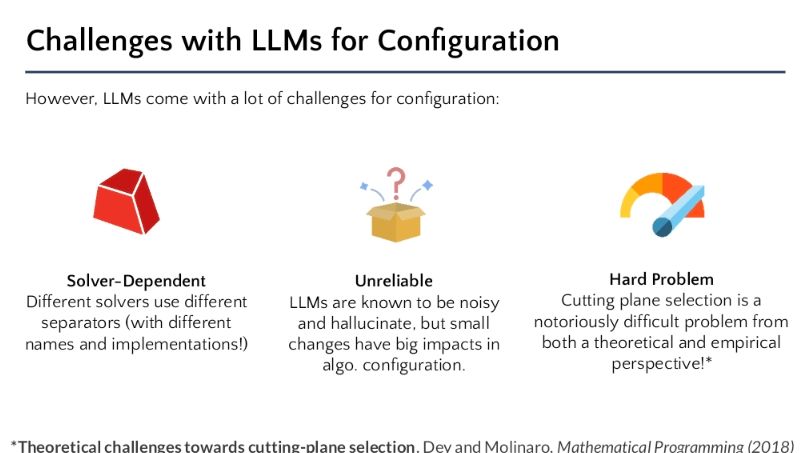

In this paper, we show that we can, thanks to Large Language Models! Why LLMs? They can identify useful optimization structure and have a lot of built-in math programming knowledge!