at Emre Neftci's lab (@fz-juelich.de).

ktran.de

And this is with end-to-end backprop (for now).

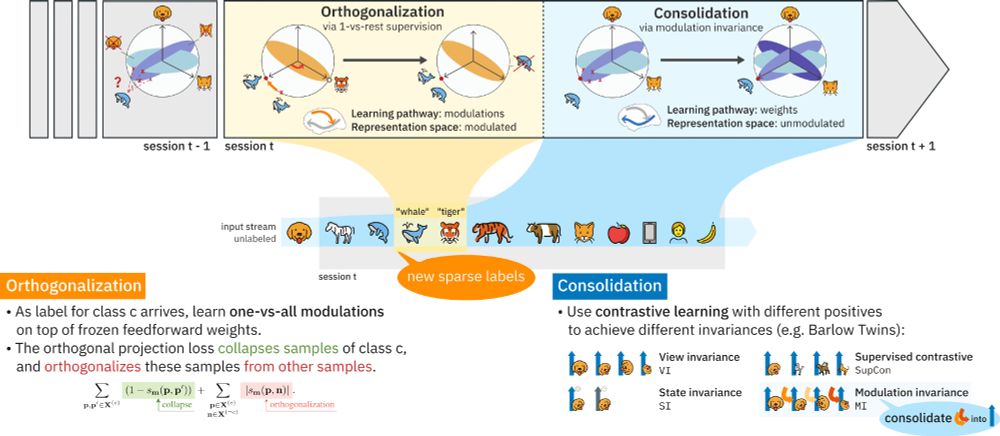

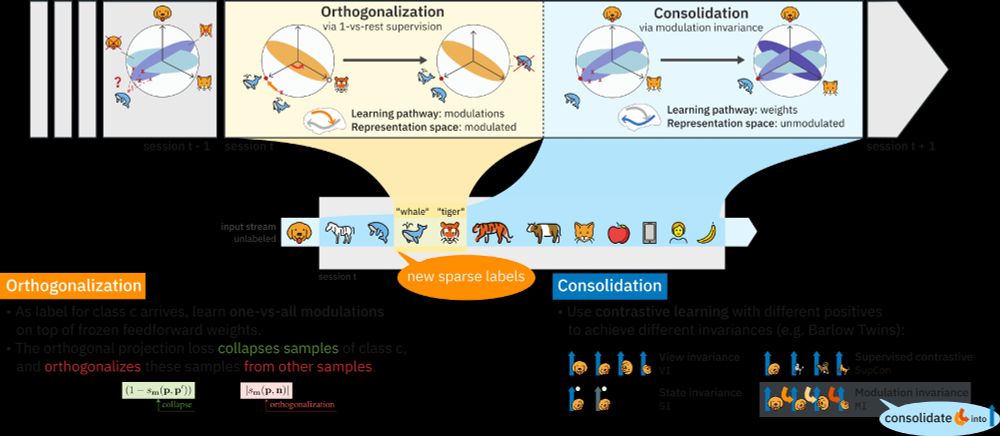

Exactly, only the feedforward (ff) params are learned during contrastive learning, and we "replay" different, frozen modulations for different positives, since we expect an unlabeled class-c sample to yield an is-c positive under modulation c and an is-not-c' positive under modulation c'.
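To make the "replay" idea concrete, here is a rough toy sketch, not the code from the preprint. It assumes a single tanh layer, modulations stored as per-unit (gain, bias) pairs, and a loss that keeps only the positive (alignment) term, with negatives left out for brevity. All names (`forward`, `alignment_loss`, `train_step`) and the numbers are made up for illustration.

```python
import copy
import math

def forward(W, x, gain=None, bias=None):
    """One feedforward layer; an optional affine modulation acts on the pre-activation."""
    out = []
    for i, row in enumerate(W):
        pre = sum(w * xi for w, xi in zip(row, x))
        if gain is not None:
            pre = gain[i] * pre + bias[i]
        out.append(math.tanh(pre))
    return out

def cosine(a, b):
    dot = sum(p * q for p, q in zip(a, b))
    norm_a = math.sqrt(sum(p * p for p in a))
    norm_b = math.sqrt(sum(q * q for q in b))
    return dot / (norm_a * norm_b + 1e-12)

def alignment_loss(W, x, modulations):
    """Mean (1 - cosine) between the unmodulated output and each replayed positive."""
    anchor = forward(W, x)
    positives = [forward(W, x, gain, bias) for gain, bias in modulations]
    return sum(1.0 - cosine(anchor, p) for p in positives) / len(positives)

def train_step(W, x, modulations, lr=0.1, eps=1e-5):
    """Finite-difference gradient step on W only; modulations are read, never written."""
    base = alignment_loss(W, x, modulations)
    new_W = [row[:] for row in W]
    for i in range(len(W)):
        for j in range(len(W[i])):
            bumped = [row[:] for row in W]
            bumped[i][j] += eps
            grad = (alignment_loss(bumped, x, modulations) - base) / eps
            new_W[i][j] = W[i][j] - lr * grad
    return new_W

# Hypothetical toy values: two frozen modulations (one per previously seen class).
W = [[0.5, -0.3], [0.2, 0.8]]
x = [1.0, 2.0]  # one unlabeled sample
modulations = [([1.5, 0.5], [0.1, -0.2]), ([0.7, 1.2], [-0.3, 0.4])]
frozen_copy = copy.deepcopy(modulations)

new_W = train_step(W, x, modulations)
```

The same unlabeled sample is passed through every stored modulation to get its positives, and the update only ever touches `W`. A real setup would presumably use autodiff and a full contrastive objective instead of finite differences.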

A huge thanks to my supervisor Willem Wybo and our institute head Emre Neftci!

📄 Preprint: arxiv.org/abs/2505.14125

🚀 Project page: ktran.de/papers/tmcl/

Supported by (@fzj-jsc.bsky.social) and WestAI.

(6/6)

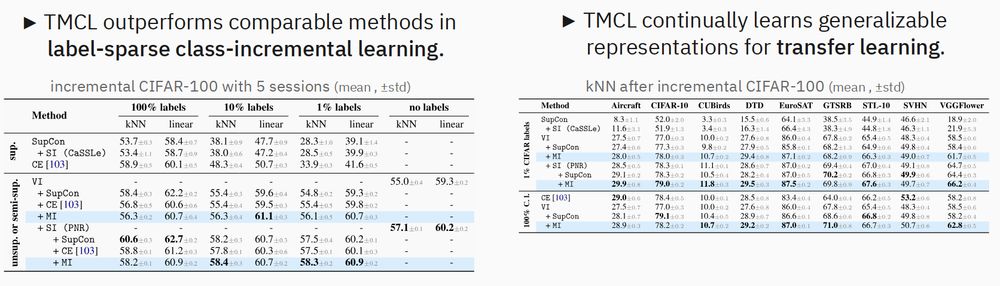

Therefore, we continually learn generalizable representations, unlike conventional, class-collapsing methods (e.g. Cross-Entropy). (3/6)
