at Emre Neftci's lab (@fz-juelich.de).

ktran.de

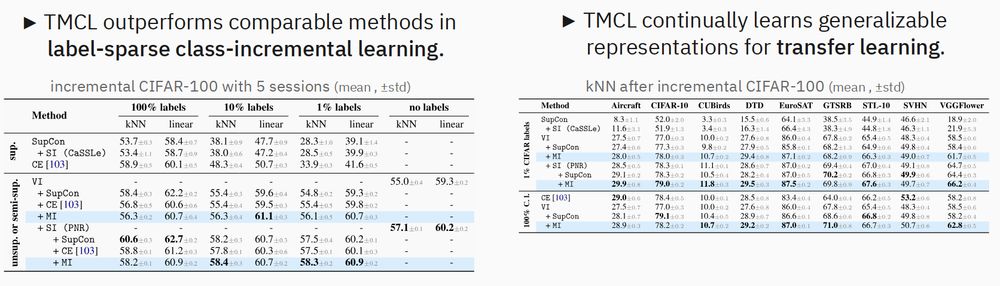

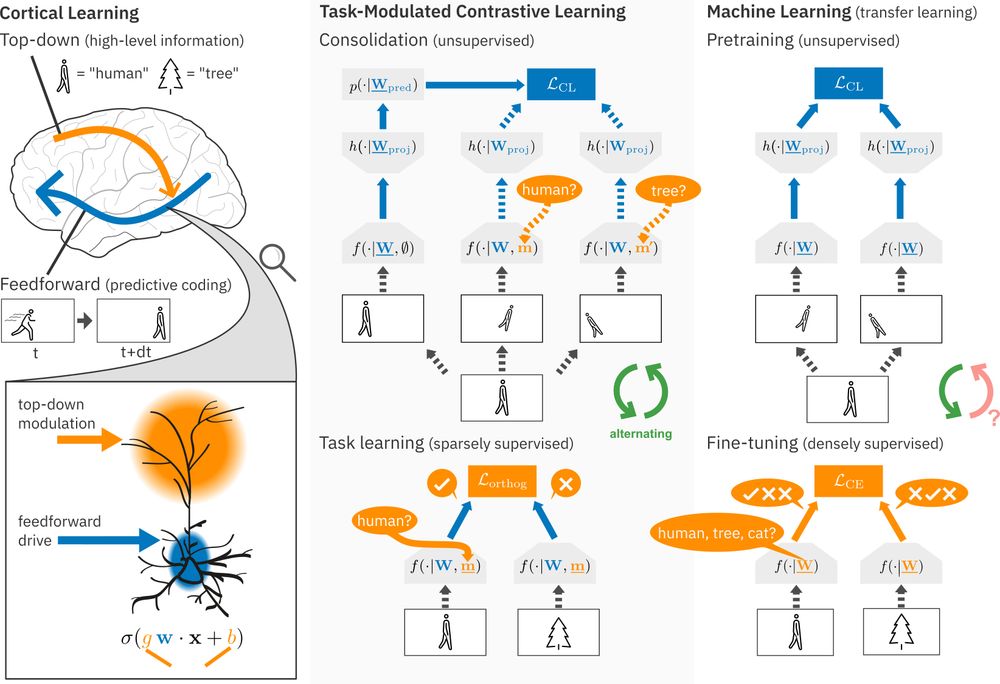

Therefore, we continually learn generalizable representations, unlike conventional, class-collapsing methods (e.g., training with a cross-entropy loss). (3/6)

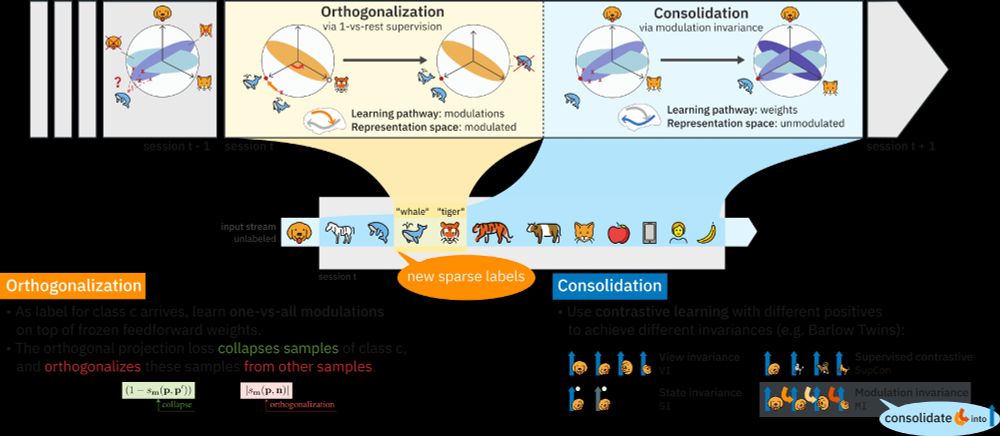

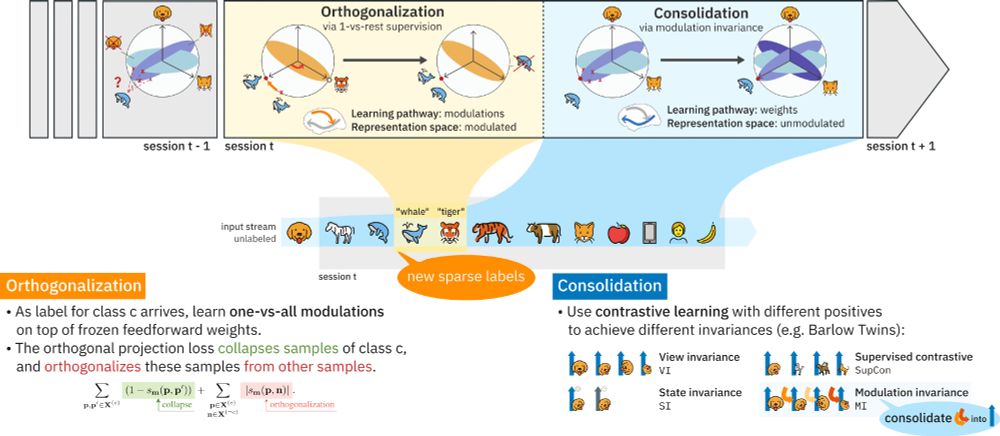

Continual learning methods struggle in mostly unsupervised environments with sparse labels (e.g., parents telling their child that an object is an 'apple').

We propose that in the cortex, predictive coding of high-level top-down modulations solves this! (1/6)