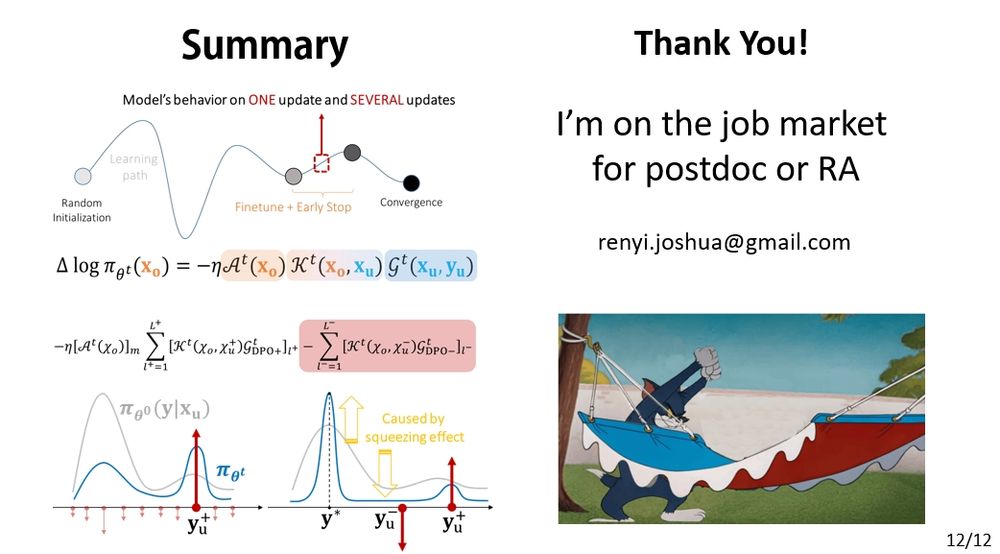

But there’s more coming—

🧠 Many RL + LLM methods (like GRPO) also involve negative gradients.

🎯 And a token-level AKG decomposition is even more suitable for real-world LLMs.

Please stay tuned. 🚀

(11/12)

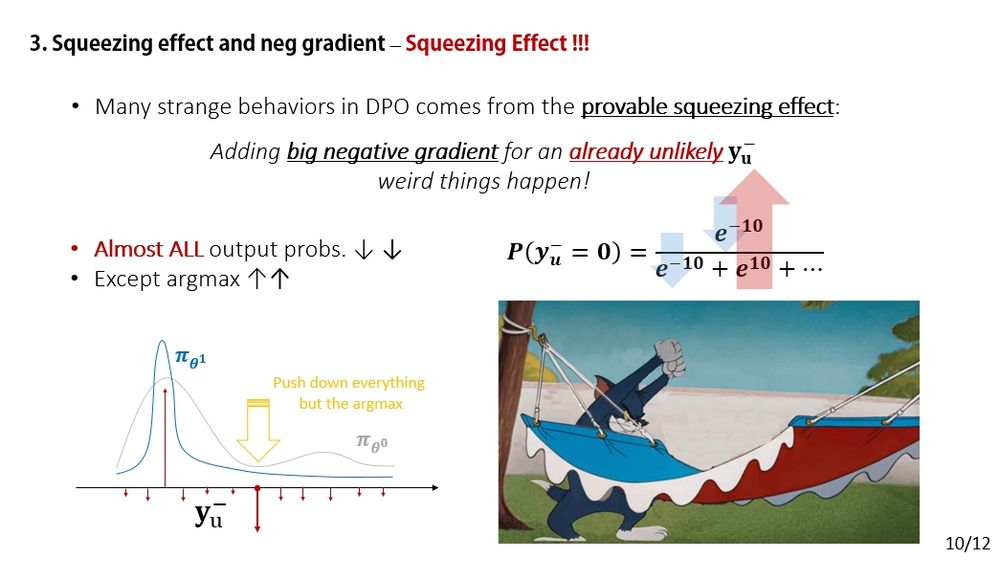

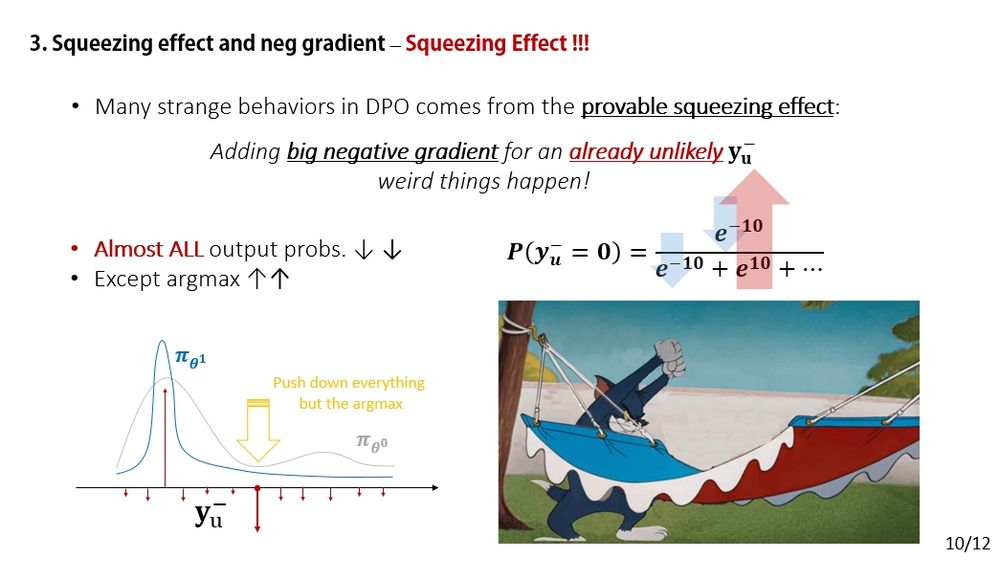

Just apply force analysis and remember: the smaller p(y-), the stronger the squeezing effect.

(10/12)
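To make the squeezing effect concrete, here is a minimal toy sketch, not the paper's experiment. It assumes a single softmax over five labels and applies one gradient step that lowers log p(y-) directly on the logits. The function name `push_down`, the logit values, and the step size are all illustrative choices.

```python
import numpy as np

def softmax(z):
    e = np.exp(z - np.max(z))
    return e / e.sum()

def push_down(logits, y_neg, lr=1.0):
    """One gradient step that lowers log p(y_neg), applied directly to the logits."""
    p = softmax(logits)
    grad_log_p = -p
    grad_log_p[y_neg] += 1.0  # d log p(y_neg) / d logits = e_{y_neg} - p
    return softmax(logits - lr * grad_log_p)

# Hypothetical logits: label 0 is the clear favourite, label 4 is very unlikely.
logits = np.array([3.0, 1.0, 0.5, 0.0, -4.0])
before = softmax(logits)

after_unlikely = push_down(logits, y_neg=4)  # negative gradient on an unlikely label
after_likely = push_down(logits, y_neg=0)    # negative gradient on the likely label

for name, after in [("y- unlikely", after_unlikely), ("y- likely", after_likely)]:
    print(name, np.round(after - before, 4))
```

In this toy setup, pushing down the unlikely label moves probability mass onto the already dominant label and away from every other label. Pushing down the dominant label instead spreads its mass over the rest.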

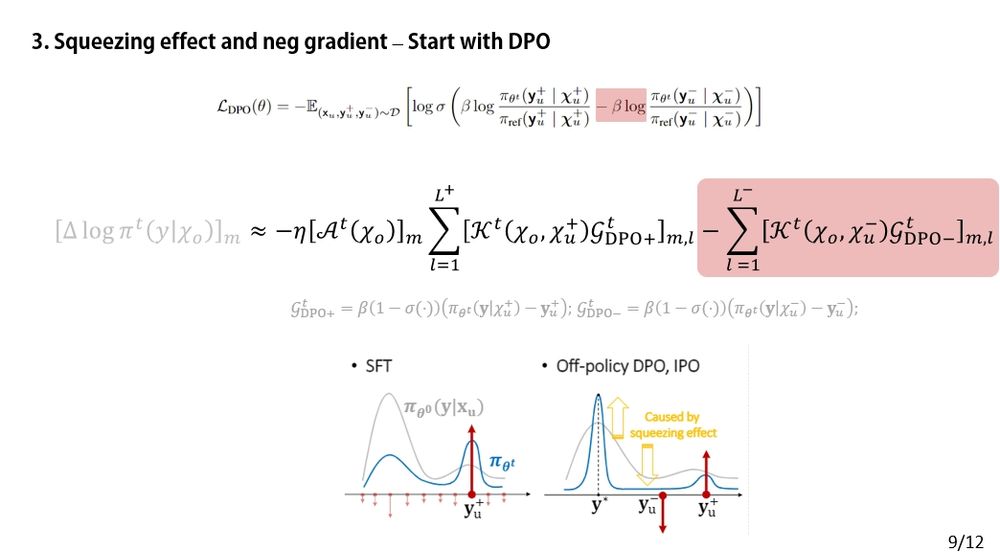

(9/12)

(8/12)

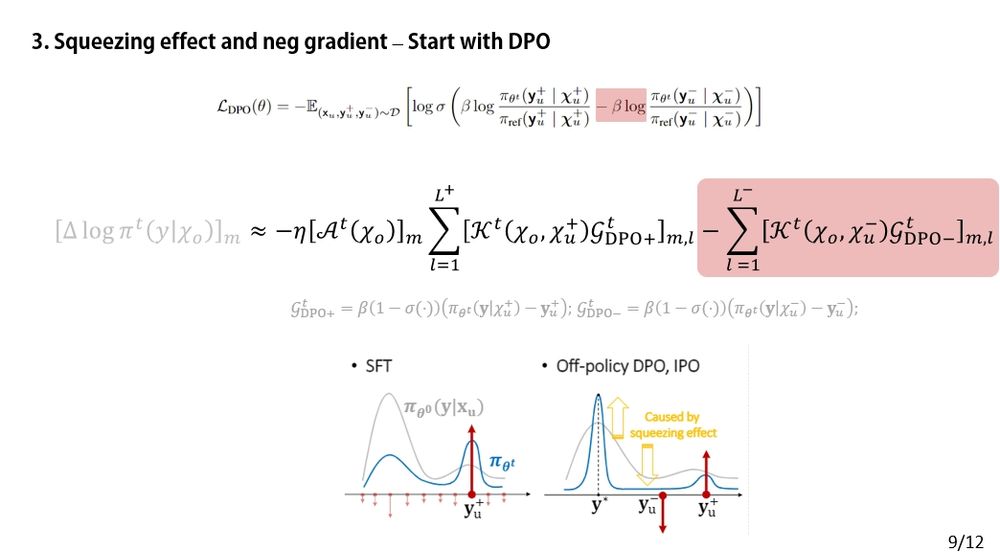

⚖️ The key difference? DPO introduces a negative gradient term — that’s where the twist comes in.

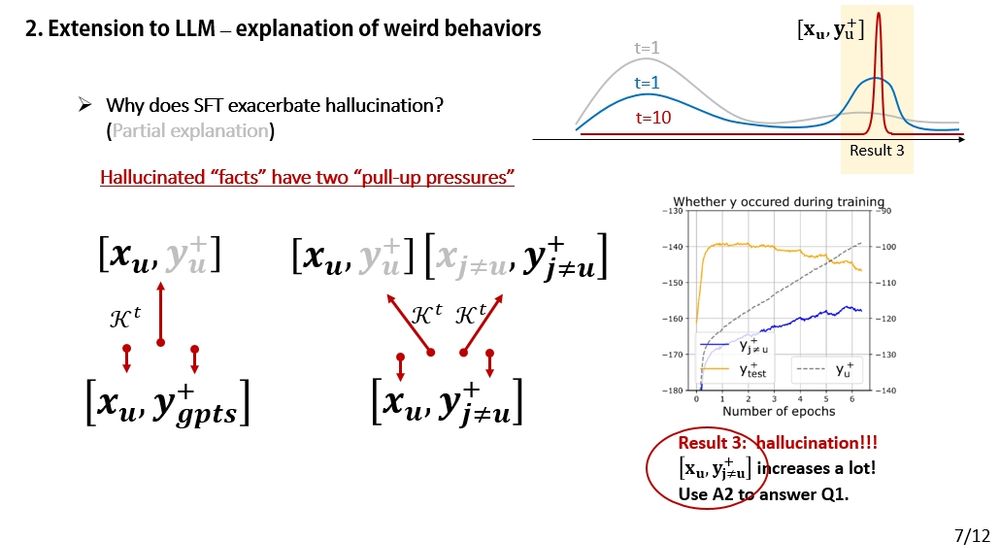

(7/12)
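To see where the negative gradient term comes from, here is a minimal sketch of the standard DPO loss on a single preference pair, written in terms of sequence log-probabilities. The function name `dpo_loss_and_grads`, the argument names, and the numbers are illustrative assumptions, not code from the paper.

```python
import numpy as np

def dpo_loss_and_grads(logp_pos, logp_neg, ref_pos, ref_neg, beta=0.1):
    """DPO loss for one (y+, y-) pair and its gradients w.r.t. the policy log-probs."""
    margin = beta * ((logp_pos - ref_pos) - (logp_neg - ref_neg))
    loss = np.log1p(np.exp(-margin))        # equals -log sigmoid(margin)
    weight = beta / (1.0 + np.exp(margin))  # equals beta * sigmoid(-margin)
    grad_pos = -weight  # d loss / d logp_pos: descent raises log p(y+)
    grad_neg = weight   # d loss / d logp_neg: descent lowers log p(y-)
    return loss, grad_pos, grad_neg

# Hypothetical log-probs; the policy starts equal to the reference model.
loss, grad_pos, grad_neg = dpo_loss_and_grads(
    logp_pos=-12.0, logp_neg=-10.0, ref_pos=-12.0, ref_neg=-10.0
)
print(loss, grad_pos, grad_neg)
```

The two gradients have opposite signs: a descent step pulls log p(y+) up, as a likelihood-maximizing update would, and also pushes log p(y-) down. That second term is the negative gradient.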

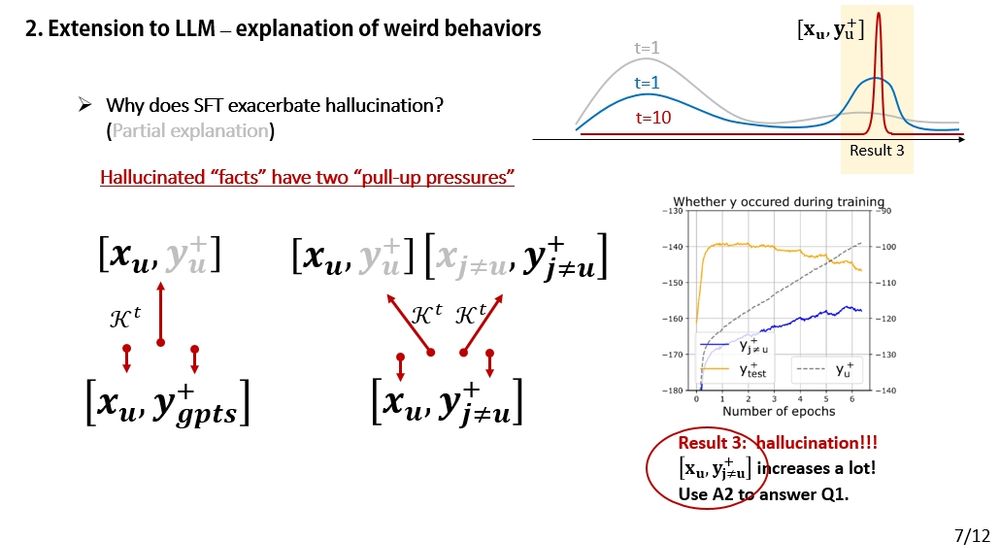

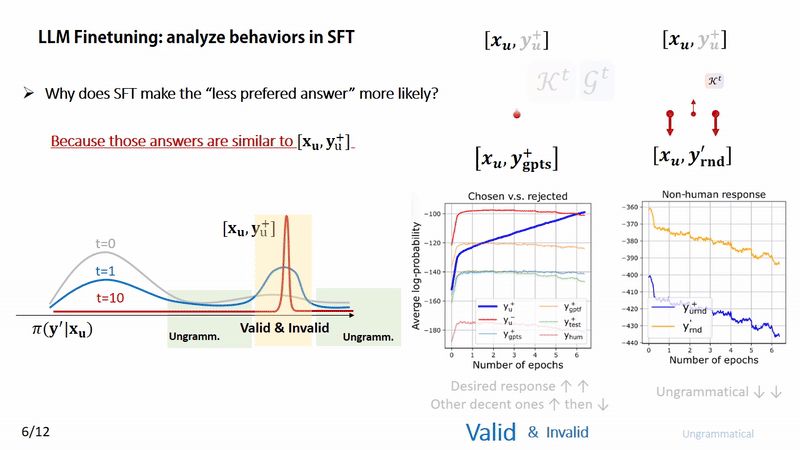

🔍 The model uses facts or phrases from A2 when answering an unrelated Q1.

Why does this happen?

Just do a force analysis — the answer emerges naturally. 💡

(6/12)

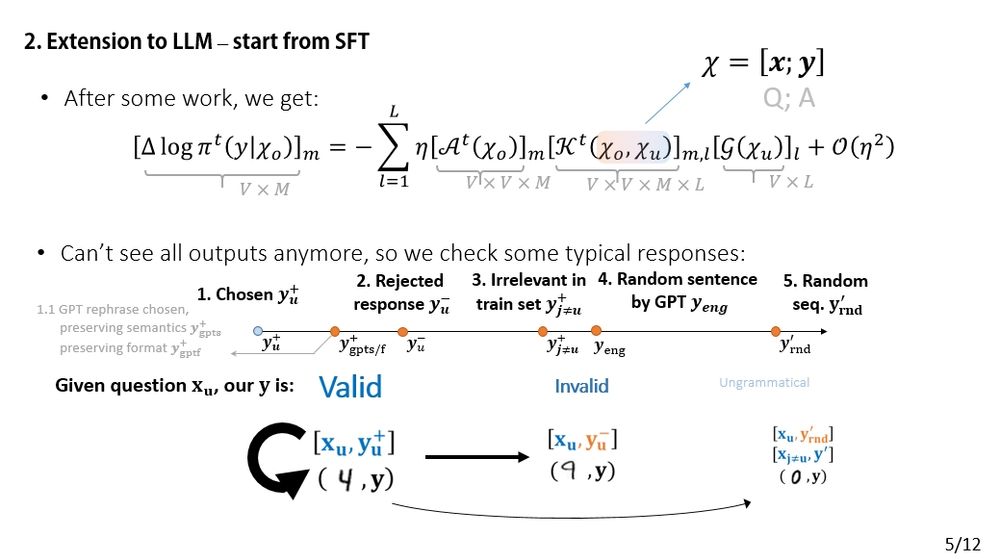

📈📉 This aligns well with the force analysis perspective. (More supporting experiments are in the paper.)

(5/12)

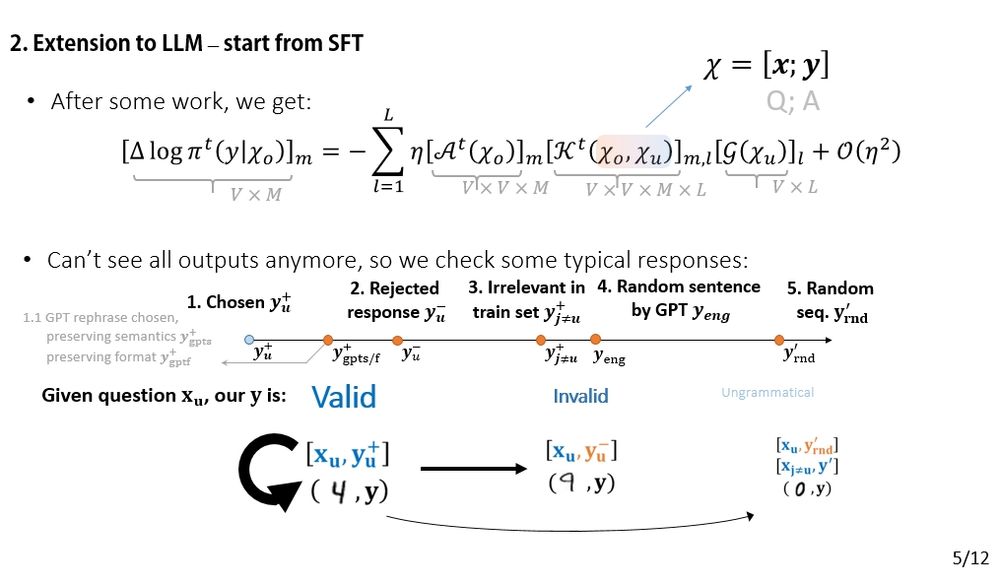

The change in the model’s prediction can be decomposed (AKG-style). The input is a concatenation: [x; y]. This lets us ask questions like: “How does the model’s confidence in 'y-' change if we fine-tune on 'y+'?”

(4/12)
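Here is a minimal sketch of that kind of question on a toy model. It assumes a linear softmax classifier over fixed features rather than an LLM. In this toy case the one-step change in log-probabilities at an observed input x_o, after an SGD step on (x_u, y_u), is approximately -lr · A · K · G, with A = I - 1·π(x_o)ᵀ, K = (φ_o · φ_u)·I, and G = π(x_u) - e_{y_u}. The names `one_step_change`, `phi_o`, and `phi_u` are illustrative, and the exact form used in the paper may differ in details.

```python
import numpy as np

def log_softmax(z):
    z = z - z.max()
    return z - np.log(np.exp(z).sum())

def one_step_change(W, phi_o, phi_u, y_u, lr):
    """Exact vs. first-order change in log p(. | x_o) after one SGD step on (x_u, y_u)."""
    n = W.shape[0]
    pi_u = np.exp(log_softmax(W @ phi_u))
    G = pi_u - np.eye(n)[y_u]              # cross-entropy gradient w.r.t. logits at x_u
    W_new = W - lr * np.outer(G, phi_u)    # one SGD step on the cross-entropy loss
    exact = log_softmax(W_new @ phi_o) - log_softmax(W @ phi_o)

    pi_o = np.exp(log_softmax(W @ phi_o))
    A = np.eye(n) - np.outer(np.ones(n), pi_o)  # Jacobian of log-softmax at x_o
    K = (phi_o @ phi_u) * np.eye(n)             # kernel of the linear toy model
    predicted = -lr * A @ K @ G
    return exact, predicted

rng = np.random.default_rng(0)
W = rng.normal(size=(5, 8))                  # 5 labels, 8 features (toy sizes)
phi_u = rng.normal(size=8)                   # features of the training example
phi_o = phi_u + 0.1 * rng.normal(size=8)     # a similar input we observe
exact, predicted = one_step_change(W, phi_o, phi_u, y_u=2, lr=1e-4)
print(np.round(exact, 6))
print(np.round(predicted, 6))
```

Each entry of `exact` answers "how did the model's confidence in this label change at x_o?". For a small learning rate, the first-order prediction should track it closely.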

(3/12)

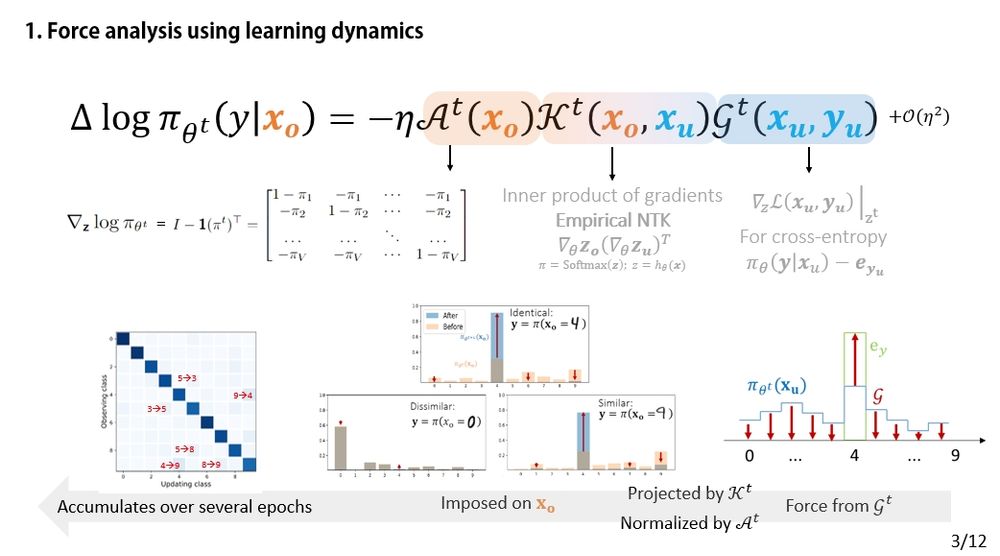

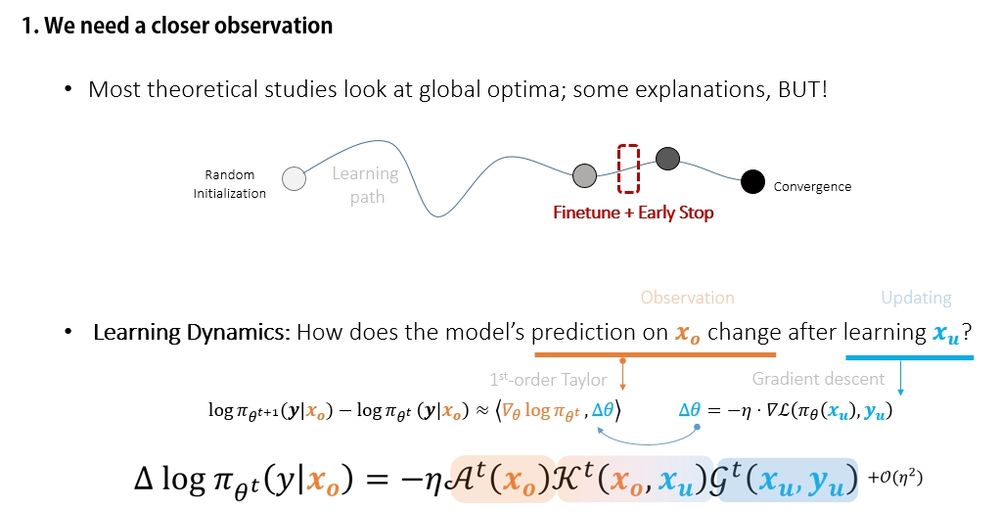

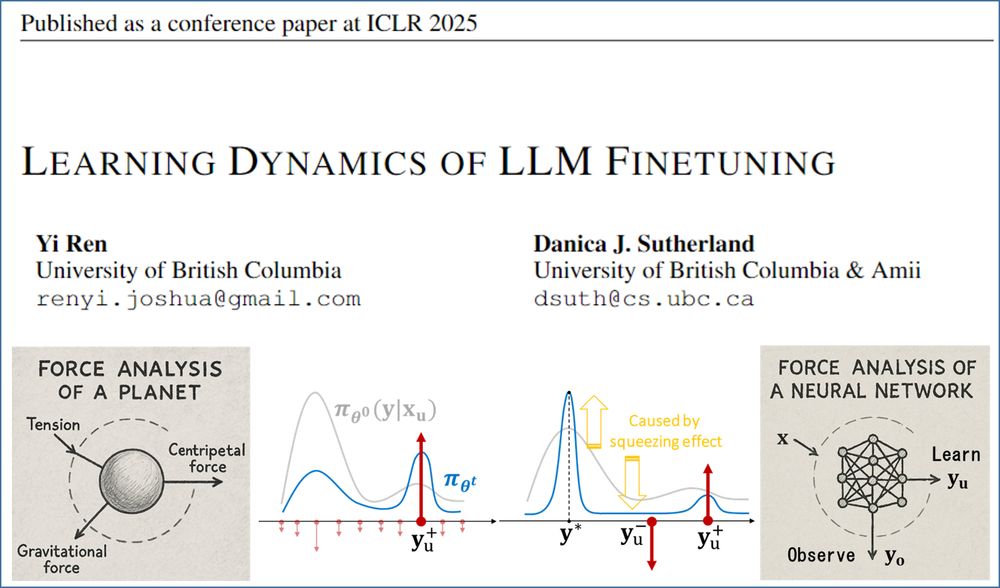

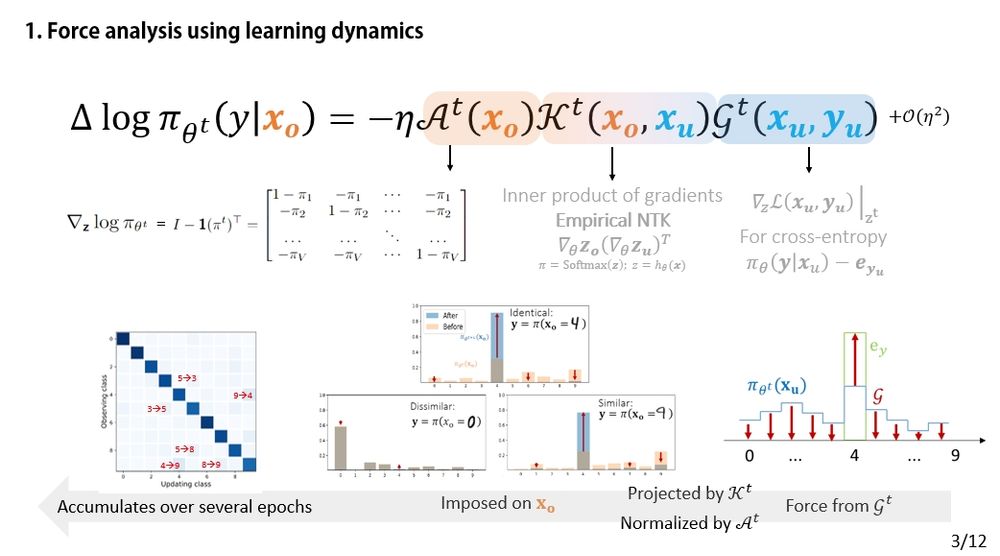

🧠 Think of the model's prediction as an object and each gradient update as a force acting on it.

(2/12)

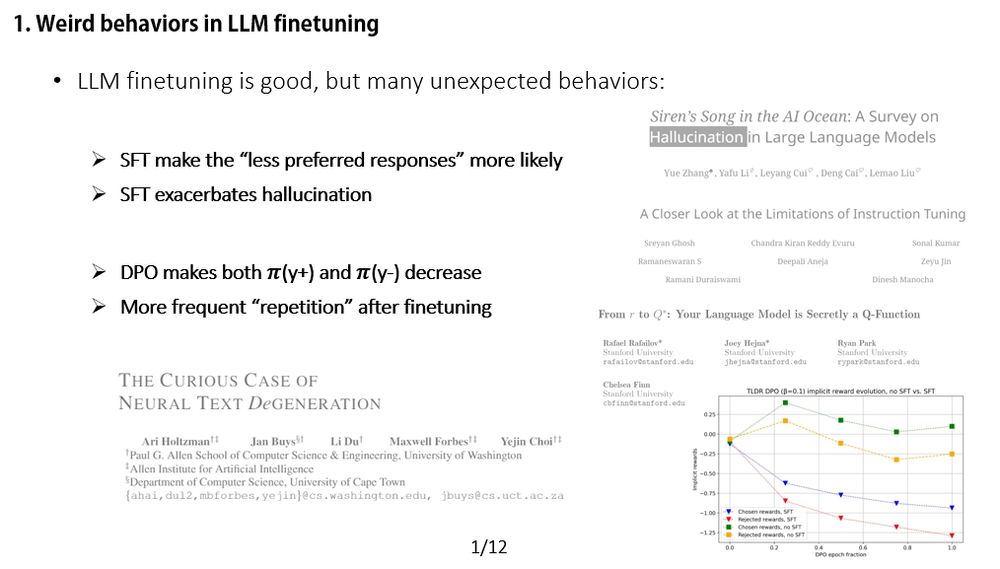

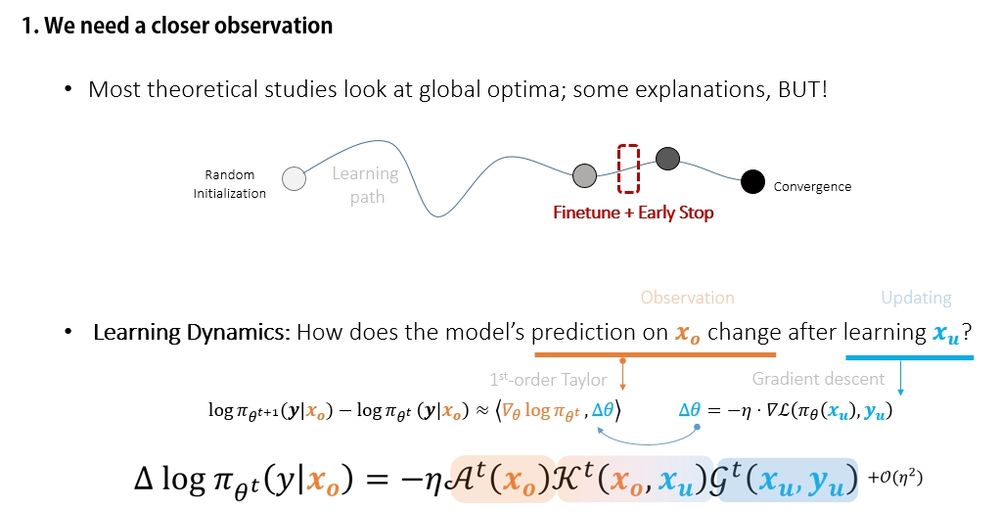

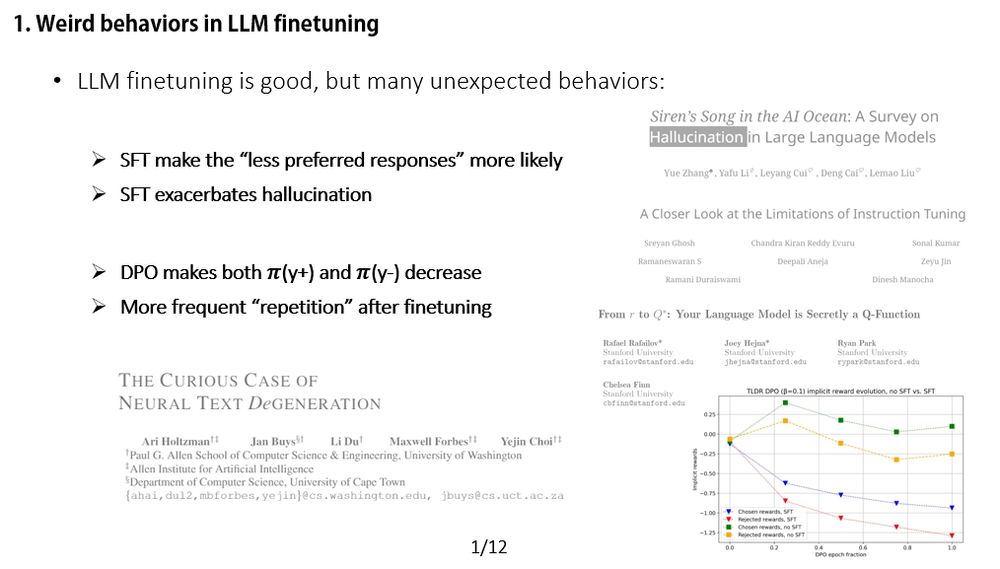

🧩 Prior work offers great insights, but we take a different angle: we dive into the dynamics behind these changes, step by step, like force analysis in physics. ⚙️

(1/12)

We offer a fresh perspective—consider doing a "force analysis" on your model’s behavior.

Check out our #ICLR2025 Oral paper:

Learning Dynamics of LLM Finetuning!

(0/12)
