In response to the requests we have received, we have made available to you the most capable version of Latxa, which comes close to ChatGPT while producing more polished Basque.

t.co/OPVNnBG2xW?utm_...

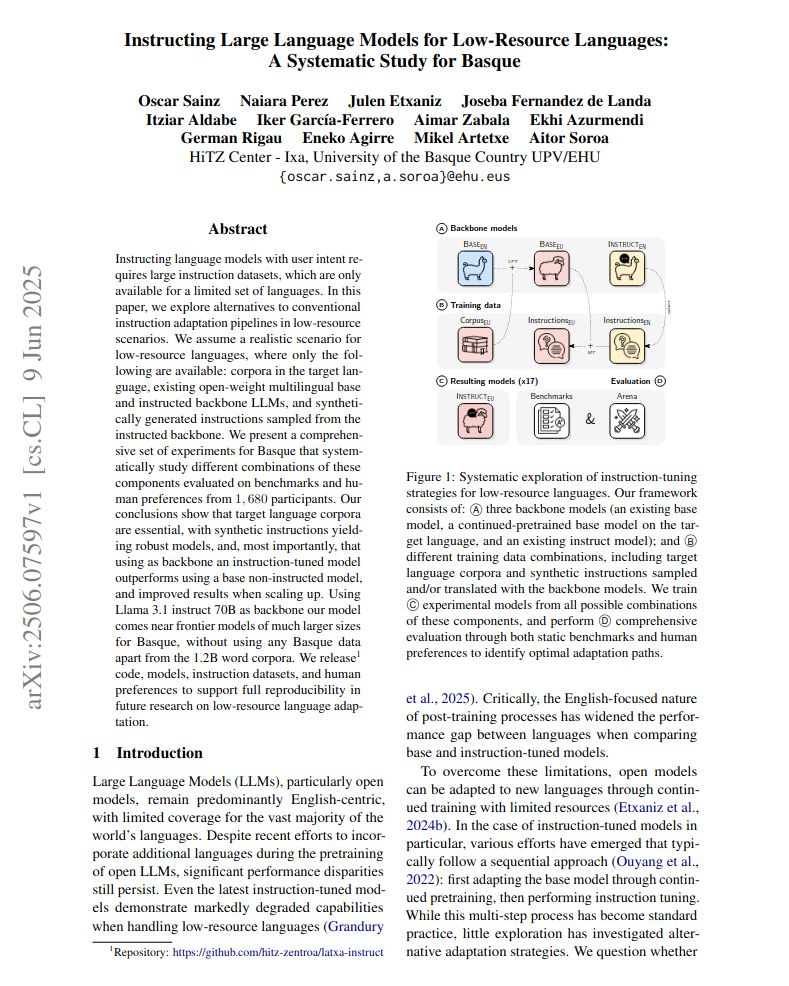

We did so to teach Basque to Llama models with promising results!

Interestingly, you only need English instructions and target language corpora 🤯

1/3

Key findings:

1️⃣Language corpora are essential: models need exposure to plain Basque text

2️⃣Starting from instructed models beats the standard base→instruct pipeline

3️⃣English-only instructions work well, but combining with Basque instructions yields the most robust models

#newHitzPaper

Many languages are underserved by open LLMs, which raises the question: what is the best way to produce open instruction-tuned LLMs for low-resource languages?

We obtained great results for a cost-effective option!

📄Paper: arxiv.org/abs/2506.07597
